Chatbot quality assurance is the practice of systematically testing and evaluating a chatbot's accuracy, conversation flow, and user experience before and after deployment. With AI projected to handle up to 80% of routine customer queries, QA is what separates bots that resolve issues from bots that create them. Skipping it means broken conversations, lost customers, and wasted automation investment.

What Is Chatbot Quality Assurance?

Chatbot quality assurance (QA) is a structured evaluation process that tests a chatbot's intent recognition, response accuracy, conversation flow, escalation logic, and data handling against defined performance benchmarks. It differs from standard software QA by focusing on natural language variability and conversational context rather than deterministic inputs and outputs.

Chatbot QA covers every test and review cycle that confirms your bot actually does what it's supposed to do. That includes verifying intent classification, checking whether the bot retains context across multi-turn conversations, confirming fallback behavior when it doesn't know an answer, and validating that handoff to human agents works without dropping the thread.

Standard software testing follows predictable paths: input X should produce output Y. Chatbot testing can't work that way because users phrase the same question hundreds of different ways. "I want to cancel," "how do I stop my subscription," and "get rid of my plan" all mean the same thing, but a poorly trained bot treats them as three separate intents.

According to Fastbots.ai, Gartner predicts that 80% of routine customer interactions will be fully handled by AI in 2026. That projection makes QA non-optional. If your chatbot handles the majority of first-contact conversations, a 5% error rate doesn't mean a few annoyed users. It means hundreds of failed interactions per day at scale: at 10,000 conversations a day, 5% is 500 failed interactions.

I've seen teams launch chatbots with zero QA beyond a quick demo conversation. Within two weeks, their support ticket volume actually increased because the bot was confidently giving wrong answers, and customers had to contact a human agent to undo the damage.

How Does Chatbot QA Work?

Chatbot quality assurance operates across three layers: pre-deployment testing, live monitoring, and periodic audits. Each layer catches different failure modes.

1. Pre-deployment testing runs before the bot goes live. You feed it a test suite of sample queries covering every intent the bot should recognize, plus edge cases it shouldn't try to handle. The goal is confirming that intent classification accuracy sits above your threshold (most teams target 85-95% depending on complexity) and that conversation flows complete without dead ends.

2. Live monitoring tracks the bot's real-world performance using metrics like containment rate, resolution rate, average handle time, and user satisfaction scores. You're watching for drift: the bot might perform well at launch but degrade as customers start asking questions your training data didn't anticipate. A good chatbot analytics setup flags these gaps automatically.

3. Periodic audits involve manually reviewing conversation transcripts. Automated metrics tell you something went wrong, but transcript reviews tell you why. We typically sample 50-100 conversations per week and tag them for accuracy, tone, escalation handling, and whether the bot followed its configured persona.

These three layers work together. Pre-deployment testing catches the obvious failures, live monitoring catches the slow degradation, and manual audits catch the subtle quality issues that metrics miss.

Why Does Chatbot QA Matter?

The business case for chatbot QA comes down to two forces pushing against each other: adoption is accelerating, but customer tolerance for bad bot experiences is dropping.

According to Azumo, businesses report $8 in returns for every $1 invested in chatbots. That ROI disappears fast when the bot misfires. According to a Forbes report, 30% of consumers switch to a competitor after a single negative chatbot experience.

Here's what QA directly protects:

• Revenue retention: A bot that mishandles billing questions or gives wrong product information pushes customers toward churn. QA catches these high-stakes failures before they cost you accounts.

• Support team efficiency: Without QA, broken bot conversations generate more work for human agents, not less. They inherit frustrated customers who already got a wrong answer and now need extra reassurance.

• Brand perception: Your chatbot is often the first interaction a prospect has with your company. A bot that can't understand basic questions signals that your product might be equally unreliable.

• Compliance exposure: Bots handling financial, healthcare, or personal data without QA validation create regulatory risk. A bot that accidentally surfaces another customer's information isn't just a bug. It's a potential GDPR or CCPA violation.

According to a Forrester analysis cited by Fastbots.ai, three in ten firms will damage their customer experience this year through poorly implemented AI self-service. QA is what keeps your deployment out of that 30%.

What Are the Common Challenges in Chatbot QA?

Testing a chatbot isn't like testing a checkout flow. Conversation is unpredictable, and most QA challenges stem from that unpredictability.

Intent misclassification. Users don't speak in clean, well-formatted sentences. They use slang, typos, abbreviations, and sentence fragments. A bot trained on "I want to cancel my subscription" might completely miss "nvm cancel it" or "can u stop charging me." QA needs to include adversarial inputs that mimic real user behavior, not just polished test cases.

Context loss in multi-turn conversations. A user asks about pricing, then says "what about the enterprise plan," then follows up with "does it include SSO?" Each message depends on what came before. Bots that lose this thread force users to repeat themselves, and response quality drops significantly.
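A multi-turn test replays a scripted conversation and checks every reply, so a bot that drops context fails on the follow-up turn rather than slipping through. The sketch below assumes your bot can be wrapped as a function that receives the new message plus the conversation history; `run_script` and `toy_bot` are hypothetical names, and the toy bot exists only to make the example runnable.

```python
def run_script(bot, turns):
    """Replay a scripted multi-turn conversation against `bot`.

    `bot` is any callable taking (message, history) and returning a reply,
    where history is a list of (user_message, bot_reply) pairs. Each turn is
    (user_message, expected_substring). Returns a list of failures; an empty
    list means every turn passed.
    """
    history = []
    failures = []
    for number, (message, expected) in enumerate(turns, start=1):
        reply = bot(message, history)
        if expected.lower() not in reply.lower():
            failures.append(f"turn {number}: {message!r} -> {reply!r}")
        history.append((message, reply))
    return failures


def toy_bot(message, history):
    """Hypothetical bot that resolves 'it' from earlier turns."""
    text = message.lower()
    if "pricing" in text:
        return "We offer Starter and Enterprise plans."
    if "enterprise" in text:
        return "The Enterprise plan is billed annually."
    if "sso" in text:
        # Look back through the history for the plan the user last asked about.
        for past_message, _ in reversed(history):
            if "enterprise" in past_message.lower():
                return "Yes, the Enterprise plan includes SSO."
        return "Which plan are you asking about?"
    return "Sorry, I didn't catch that."


script = [
    ("What's your pricing?", "plans"),
    ("what about the enterprise plan", "enterprise"),
    ("does it include SSO?", "includes sso"),
]
print(run_script(toy_bot, script))  # []
```

Running the SSO question on its own, with no history, fails, which is exactly the context-loss case this kind of test exists to catch.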

Training data gaps. Your bot only knows what you've trained it on. New product features, policy changes, seasonal promotions, and emerging customer issues all create knowledge gaps. QA must include regular checks against current business state, not just the data the bot launched with.

False confidence. This is the most dangerous failure mode. The bot gives a wrong answer with high confidence instead of admitting it doesn't know. Unlike a clear error, false confidence is hard to detect at scale because the conversation looks normal in metrics. Only transcript audits catch it reliably.

Escalation breakdowns. The handoff from bot to human should preserve the full conversation context. When it doesn't, the customer has to start over. Testing this end-to-end, including during peak hours, is something many teams skip because it requires coordinating with the support team.

Regression after updates. You fix one intent and break two others. Without regression testing, every improvement becomes a gamble. This is where automated test suites pay for themselves, and where structured chatbot testing practices prevent you from shipping regressions.

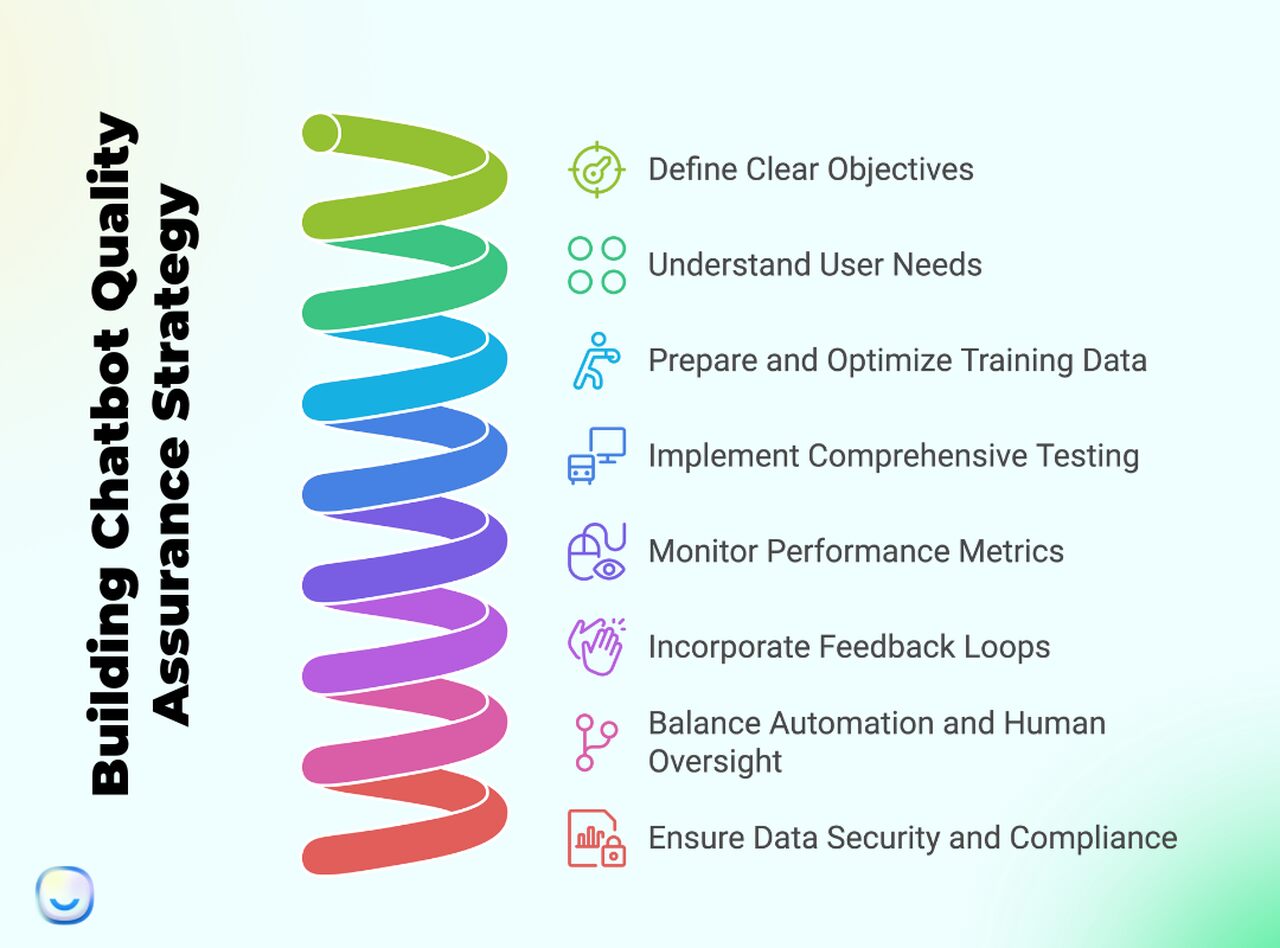

How to Build a Chatbot QA Strategy

A QA strategy turns ad-hoc testing into a repeatable process. Here's the framework we've seen work for support teams handling 500+ conversations per day.

Step 1: Set measurable quality benchmarks

Don't start with "make the bot better." Define what "better" means in numbers. Set targets for intent recognition accuracy, containment rate, average resolution time, and user satisfaction score. These become your pass/fail criteria for every QA cycle.
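One way to turn benchmarks into pass/fail criteria is to store each target with a direction (must be at least, or must not exceed) and check every QA cycle against them. The metric names and numbers below are hypothetical placeholders; only the 90% intent accuracy and 60% containment floors echo figures given later in this article.

```python
# Hypothetical benchmark targets; replace the numbers with your own.
# "min": measured value must be at least the target.
# "max": measured value must not exceed the target.
BENCHMARKS = {
    "intent_accuracy": ("min", 0.90),
    "containment_rate": ("min", 0.60),
    "avg_resolution_seconds": ("max", 120.0),
    "csat_percent": ("min", 75.0),
}


def evaluate(metrics, benchmarks=BENCHMARKS):
    """Return {metric: passed} for every benchmark. A missing metric fails."""
    results = {}
    for name, (direction, target) in benchmarks.items():
        value = metrics.get(name)
        if value is None:
            results[name] = False
        elif direction == "min":
            results[name] = value >= target
        else:
            results[name] = value <= target
    return results


cycle = {
    "intent_accuracy": 0.93,
    "containment_rate": 0.55,
    "avg_resolution_seconds": 95.0,
    "csat_percent": 82.0,
}
print(evaluate(cycle))  # containment_rate fails; the other three pass
```

Treating a missing metric as a failure is a deliberate choice: a broken metrics export should block a release rather than silently pass it.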

Step 2: Map your highest-risk conversation flows

Not every bot conversation carries equal weight. Billing disputes, account cancellations, and data access requests carry more risk than "what are your business hours." Prioritize QA effort on flows where a wrong answer has real consequences. Review your containment rate data to identify which topics fall through most often.
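A simple way to combine risk and containment data is to score each topic as risk weight × fall-through rate and review the highest scores first. This is a minimal sketch; the topic names, the 1-to-5 weights, and the conversation counts are all hypothetical.

```python
# Hypothetical risk weights (1 = low, 5 = high); agree on these with your team.
RISK_WEIGHTS = {"billing_dispute": 5, "cancellation": 4, "data_access": 5, "business_hours": 1}


def prioritize(topic_stats, weights=RISK_WEIGHTS):
    """Rank topics by risk weight x fall-through rate, highest first.

    topic_stats maps topic -> (conversations, fell_through), where
    fell_through counts conversations the bot did not contain.
    Topics without an explicit weight default to 1.
    """
    scores = {}
    for topic, (total, fell_through) in topic_stats.items():
        rate = fell_through / total if total else 0.0
        scores[topic] = weights.get(topic, 1) * rate
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


stats = {
    "billing_dispute": (400, 120),  # 30% fall-through
    "cancellation": (300, 60),      # 20%
    "data_access": (50, 5),         # 10%
    "business_hours": (900, 270),   # 30%, but low risk
}
for topic, score in prioritize(stats):
    print(f"{topic}: {score:.2f}")
```

With these numbers, billing disputes rank first and business-hours questions rank last, even though both fall through 30% of the time.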

Step 3: Build a test suite that reflects real users

Pull actual customer messages from your conversation logs. Don't write synthetic test cases from scratch. Real messages include the typos, incomplete sentences, and unexpected phrasing that synthetic tests miss. Aim for 20-30 test cases per intent, including at least 5 adversarial variations.
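Once you have real messages, you can stretch each one into a few noisy variants to help reach the adversarial quota. The sketch below uses only the Python standard library; the transforms (an adjacent-letter swap, chat shorthand, stripped punctuation, lowercasing) and the `SHORTHAND` map are illustrative assumptions, not a complete model of how users type. The variants supplement real messages rather than replacing them.

```python
import random
import re
import string

# Hypothetical shorthand map; extend it with abbreviations from your own logs.
SHORTHAND = {"you": "u", "please": "pls", "never mind": "nvm", "are": "r"}


def add_typo(text, rng):
    """Swap two adjacent letters at a random position."""
    spots = [i for i in range(len(text) - 1) if text[i].isalpha() and text[i + 1].isalpha()]
    if not spots:
        return text
    i = rng.choice(spots)
    return text[:i] + text[i + 1] + text[i] + text[i + 2:]


def shorten(text):
    """Lowercase and replace whole words with chat shorthand."""
    out = text.lower()
    for word, short in SHORTHAND.items():
        out = re.sub(rf"\b{re.escape(word)}\b", short, out)
    return out


def strip_punctuation(text):
    return text.translate(str.maketrans("", "", string.punctuation))


def variants(message, n=5, seed=0):
    """Return up to n distinct noisy rewrites of a real user message."""
    rng = random.Random(seed)  # fixed seed keeps the generated suite reproducible
    transforms = [
        lambda t: add_typo(t, rng),
        shorten,
        strip_punctuation,
        str.lower,
        lambda t: strip_punctuation(shorten(add_typo(t, rng))),
    ]
    out = []
    for transform in transforms:
        candidate = transform(message)
        if candidate != message and candidate not in out:
            out.append(candidate)
    return out[:n]


for v in variants("Can you please stop charging me?"):
    print(v)
```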

Step 4: Automate regression testing

Every time you update training data or conversation logic, run your full test suite automatically. This catches regressions before they reach production. Most teams integrate this into their deployment pipeline so no update ships without passing the test suite.
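The core of an automated regression check is a diff between the last known-good run and the current build. A minimal sketch, assuming each run is stored as a mapping from a test case ID to pass/fail; the case IDs are hypothetical, and `gate` returns an exit code that a CI step would pass to `sys.exit()`.

```python
import sys


def find_regressions(previous, current):
    """Return IDs of test cases that passed in the previous run but fail now.

    Both arguments map a test case ID to True (passed) or False (failed).
    A case missing from the current run counts as a failure, so deleting a
    test can't hide a regression.
    """
    return sorted(
        case_id
        for case_id, passed in previous.items()
        if passed and not current.get(case_id, False)
    )


def gate(previous, current):
    """Return a CI exit code: 0 if nothing regressed, 1 otherwise."""
    regressions = find_regressions(previous, current)
    for case_id in regressions:
        print(f"REGRESSION: {case_id}", file=sys.stderr)
    return 1 if regressions else 0


last_release = {"cancel_001": True, "cancel_002": True, "billing_001": True, "refund_003": False}
this_build = {"cancel_001": True, "cancel_002": False, "billing_001": True, "refund_003": True}
exit_code = gate(last_release, this_build)  # 1: cancel_002 regressed
# In a pipeline step you would finish with sys.exit(exit_code).
```

A case that failed before and passes now (`refund_003`) is an improvement, not a regression, so it doesn't block the build.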

Step 5: Monitor live performance daily

Set up dashboards tracking your key metrics in real time. Look for sudden drops in containment rate or spikes in negative feedback, which usually indicate a new failure pattern the bot hasn't been trained for. Pair this with customer success metrics to connect bot performance to business outcomes.
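One simple way to flag a "sudden drop" is to compare today's containment rate with a trailing baseline. The sketch below alerts only when the drop is both larger than three standard deviations and at least 5 points in absolute terms; both thresholds and the 14-day window are assumptions to tune against your own traffic. The same check works for negative-feedback rates if you flip the comparison to look for rises.

```python
from statistics import mean, stdev


def containment_alert(history, today, k=3.0, min_drop=0.05):
    """Flag today's containment rate if it falls well below the recent baseline.

    history: daily containment rates (0-1) for a trailing window.
    An alert fires when today is more than k standard deviations below the
    trailing mean AND at least min_drop below it, so a very stable history
    can't trigger alerts on tiny wobbles.
    """
    if len(history) < 2:
        return False  # not enough data for a baseline
    baseline = mean(history)
    spread = stdev(history)
    drop = baseline - today
    return drop >= min_drop and drop > k * spread


last_two_weeks = [0.70, 0.71, 0.69, 0.70, 0.72, 0.70, 0.71,
                  0.70, 0.69, 0.71, 0.70, 0.72, 0.71, 0.70]
print(containment_alert(last_two_weeks, today=0.58))  # True
print(containment_alert(last_two_weeks, today=0.69))  # False
```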

Step 6: Review transcripts weekly

Automated metrics catch quantity problems. Manual reviews catch quality problems. Schedule weekly transcript reviews where a QA analyst reads 50-100 conversations and tags issues. Pay special attention to conversations where the bot thought it resolved the issue but the customer contacted support again afterward.
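The "resolved, then contacted support anyway" pattern can be pulled out automatically so those conversations always reach the review queue. A minimal sketch, assuming you can export bot conversations and human tickets with a shared customer ID; the record keys and the 7-day window are hypothetical.

```python
import random
from datetime import datetime, timedelta


def recontacted(bot_resolved, human_tickets, window_days=7):
    """Return IDs of bot conversations marked resolved where the same customer
    opened a human support ticket within `window_days` afterwards.

    Records are dicts with hypothetical keys: 'id', 'customer', 'ended_at'
    for bot conversations and 'customer', 'opened_at' for tickets.
    """
    window = timedelta(days=window_days)
    flagged = []
    for conv in bot_resolved:
        for ticket in human_tickets:
            same_customer = ticket["customer"] == conv["customer"]
            in_window = conv["ended_at"] < ticket["opened_at"] <= conv["ended_at"] + window
            if same_customer and in_window:
                flagged.append(conv["id"])
                break
    return flagged


def weekly_sample(conversation_ids, flagged, size=50, seed=None):
    """Put flagged conversations in the review queue first, then fill the
    rest of the sample at random from the remaining conversations."""
    flagged_set = set(flagged)
    rest = [c for c in conversation_ids if c not in flagged_set]
    fill_count = max(0, min(size - len(flagged), len(rest)))
    return list(flagged) + random.Random(seed).sample(rest, fill_count)


t0 = datetime(2024, 5, 1, 12, 0)
conversations = [
    {"id": "c1", "customer": "a", "ended_at": t0},
    {"id": "c2", "customer": "b", "ended_at": t0},
]
tickets = [
    {"customer": "a", "opened_at": t0 + timedelta(days=2)},   # inside the window
    {"customer": "b", "opened_at": t0 + timedelta(days=10)},  # outside the window
]
flagged = recontacted(conversations, tickets)
print(flagged)  # ['c1']
queue = weekly_sample([f"c{i}" for i in range(1, 101)], flagged, size=50, seed=1)
```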

Step 7: Close the feedback loop

Every issue found in monitoring or transcript review should flow back into training data updates and test suite expansion. If your QA process identifies problems but doesn't fix them, it's documentation, not quality assurance. Use chat surveys to capture what users think of the interaction directly.

Step 8: Test escalation paths end-to-end

Simulate a full escalation: bot conversation, handoff trigger, context transfer to human agent, and agent pickup. Test this during business hours and after hours. The most common failure isn't the handoff itself; it's context loss during transfer, which forces the customer to repeat everything.
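Part of the end-to-end test can be automated: capture the payload your integration sends to the agent desk and compare it with the bot transcript. The field names (`transcript`, `customer_id`, `detected_intent`) are hypothetical; map them to your helpdesk's actual handoff format. This covers only the context transfer, so agent pickup during and after business hours still needs the live simulation.

```python
def check_handoff(bot_transcript, handoff_payload):
    """Compare what the bot saw with what the human agent receives.

    Returns a list of problems; an empty list means the context survived.
    Extra turns appended after the bot transcript (e.g. a handoff notice)
    are allowed.
    """
    problems = []
    for field in ("transcript", "customer_id", "detected_intent"):
        if not handoff_payload.get(field):
            problems.append(f"missing field: {field}")
    received = handoff_payload.get("transcript") or []
    if len(received) < len(bot_transcript):
        problems.append(f"transcript truncated: {len(received)} of {len(bot_transcript)} turns")
    elif received[: len(bot_transcript)] != bot_transcript:
        problems.append("transcript content differs from the bot conversation")
    return problems


turns = [
    {"role": "user", "text": "I was charged twice this month"},
    {"role": "bot", "text": "I can help with billing. Which card was charged?"},
    {"role": "user", "text": "The Visa ending 1234, I need a human"},
]
good = {"transcript": turns, "customer_id": "cust-42", "detected_intent": "billing_dispute"}
bad = {"transcript": turns[-1:], "customer_id": "cust-42", "detected_intent": ""}
print(check_handoff(turns, good))  # []
print(check_handoff(turns, bad))
```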

Step 9: Validate data security handling

If your bot collects or displays personal information, QA must include security testing. Attempt to extract other users' data through prompt manipulation. Test whether the bot properly redacts sensitive information in logs. Confirm that data deletion requests, which users can make under both GDPR and CCPA, are actually processed.
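For the log-redaction check, one approach is to run redaction over log lines and then assert that nothing PII-shaped survives. The regular expressions below are deliberately simple illustrations: they miss names and addresses and can over-match (a date such as 2024-05-01 looks like a phone number to them), so treat them as placeholders for a vetted PII detection tool.

```python
import re

# Illustrative patterns only; production redaction needs broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "CARD": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text):
    """Replace each pattern match with a [LABEL] placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def leaks(log_lines):
    """Return log lines that still contain something matching a PII pattern."""
    return [line for line in log_lines if any(p.search(line) for p in PATTERNS.values())]


raw = "User jane.doe@example.com asked to update card 4111 1111 1111 1111, call 555-123-4567"
clean = redact(raw)
print(clean)  # User [EMAIL] asked to update card [CARD], call [PHONE]
print(leaks([clean]))  # []
```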

Step 10: Schedule quarterly strategy reviews

Your QA strategy should evolve with your bot. Every quarter, review which intents are growing in volume, which new failure patterns have emerged, and whether your benchmarks still reflect business priorities. A strategy that was right six months ago may miss entirely new conversation categories today.

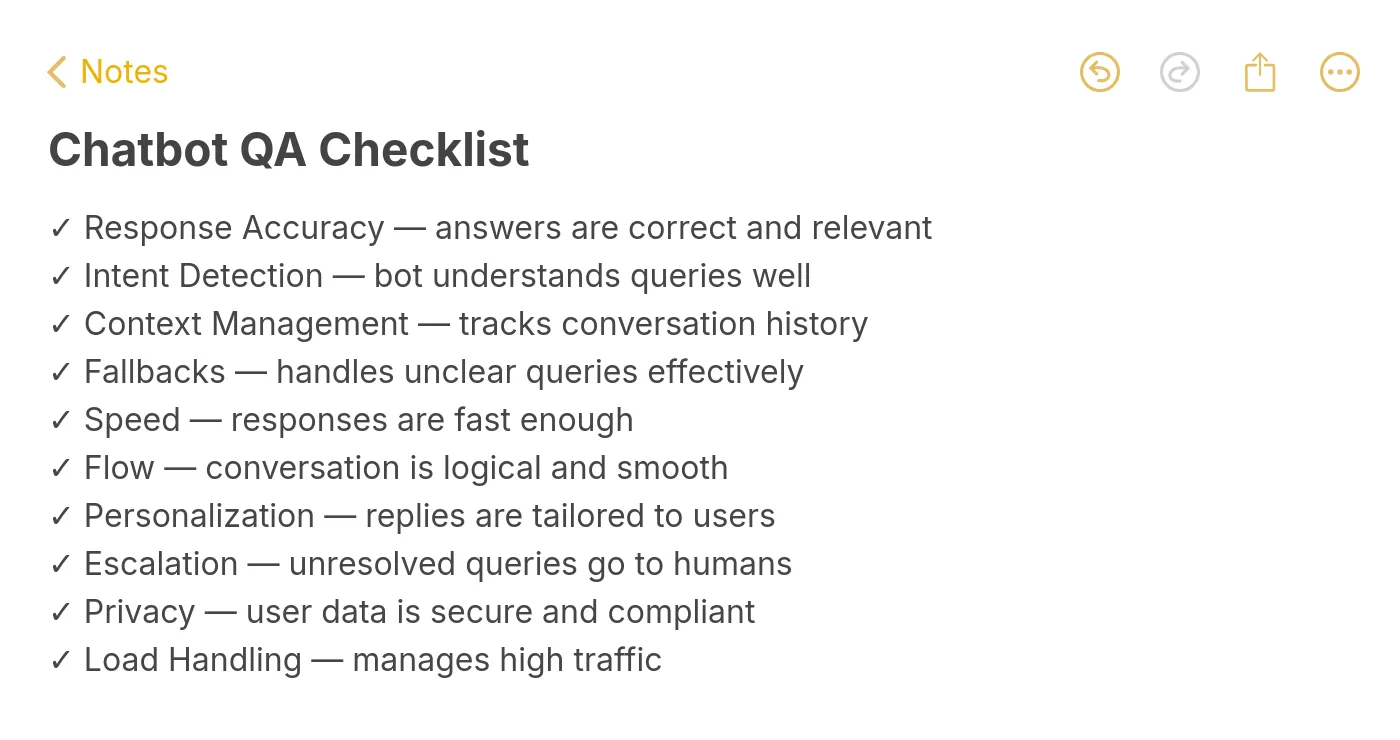

Types of Chatbot QA Testing

Different testing approaches catch different types of failures. Most production chatbots need all four.

Functional testing

Verifies that each intent, entity extraction, and conversation flow works as designed. This is your basic "does the bot do what we built it to do" test. Run it after every training data update. If your bot handles multiple languages, functional testing needs to cover each language independently since NLP performance varies significantly across languages.

Regression testing

Confirms that new changes don't break existing functionality. You maintain a growing library of test cases from previous QA cycles. When you add a new intent or modify an existing one, regression tests confirm everything else still works. This is the test most teams skip and most teams regret skipping.

Usability testing

Puts real users in front of the bot and observes how they interact with it. Metrics-based testing tells you whether intents fire correctly. Usability testing tells you whether users can actually accomplish their goals. There's a gap between those two things that only human observation reveals.

Performance and load testing

Simulates high-traffic conditions to verify the bot doesn't slow down or fail under load. This matters especially during product launches, marketing campaigns, or seasonal peaks, when bot traffic can spike to 3-5x normal levels. A bot that works fine with 50 concurrent users might time out at 500.
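Dedicated tools such as Locust or k6 are the usual choice for load testing. To show the shape of the measurement, here is a minimal asyncio sketch that fires N simulated users at once and reports timeouts and p95 latency. `fake_bot` is a hypothetical stand-in with a fixed 10 ms delay, so with it the numbers only show that the harness works, not how your bot behaves; swap in a real request to your bot's endpoint.

```python
import asyncio
import time


async def fake_bot(message):
    """Stand-in for one request to your bot; swap in a real HTTP call."""
    await asyncio.sleep(0.01)  # simulated 10 ms processing time
    return "Your order ships tomorrow."


async def one_user(send, latencies, timeout):
    """Send one message; record its latency, or return False on timeout."""
    start = time.perf_counter()
    try:
        await asyncio.wait_for(send("where is my order?"), timeout=timeout)
    except asyncio.TimeoutError:
        return False
    latencies.append(time.perf_counter() - start)
    return True


async def load_test(send, concurrent_users, timeout=2.0):
    """Fire all users at once and summarize timeouts and p95 latency."""
    latencies = []
    outcomes = await asyncio.gather(
        *(one_user(send, latencies, timeout) for _ in range(concurrent_users))
    )
    latencies.sort()
    p95 = latencies[int(0.95 * (len(latencies) - 1))] if latencies else None
    return {"users": concurrent_users, "timeouts": outcomes.count(False), "p95_seconds": p95}


for users in (50, 500):
    print(asyncio.run(load_test(fake_bot, users)))
```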

Common Chatbot QA Mistakes to Avoid

• Testing only happy paths: If your test cases all use clean, well-formed questions, your QA process is testing a fantasy version of your user base. Real users misspell words, send incomplete messages, and change topics mid-conversation. At least 30% of your test suite should be adversarial inputs.

• Measuring containment rate without checking accuracy: A high containment rate means the bot handled conversations without escalating. It doesn't mean it handled them correctly. A bot that confidently gives wrong answers has a high containment rate and terrible quality. Always pair containment metrics with accuracy audits.

• Treating QA as a one-time event: Launching with a tested bot and never testing again is like passing a driving test and never checking your mirrors. User behavior changes, your product changes, and the bot's knowledge base ages. QA needs to run continuously, not just at launch.

• Ignoring the handoff experience: Teams test the bot in isolation but never test what happens when the bot transfers to a human. Context loss during escalation is one of the highest-impact failures because it frustrates customers at their most vulnerable moment, right when they've already failed to get help from the bot. Good chatbot design plans for this from the start.

How to Measure Chatbot QA Performance

Tracking the right metrics tells you whether your QA process actually works. Here are the five that matter most:

Intent recognition accuracy measures the percentage of user messages the bot classifies into the correct intent. Track this weekly and set a minimum threshold (90% is a good starting point for production bots). Any intent dropping below 80% needs immediate retraining.
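Overall accuracy can hide a weak intent, so it helps to compute accuracy per intent as well. A minimal sketch over labeled test results, using the 80% per-intent floor from this section; the intent names and counts are made up for illustration.

```python
from collections import Counter


def intent_accuracy(results, floor=0.80):
    """results: (expected_intent, predicted_intent) pairs from a labeled test run.

    Returns overall accuracy, per-intent accuracy, and the intents below `floor`.
    """
    totals, correct = Counter(), Counter()
    for expected, predicted in results:
        totals[expected] += 1
        if predicted == expected:
            correct[expected] += 1
    per_intent = {intent: correct[intent] / totals[intent] for intent in totals}
    overall = sum(correct.values()) / len(results) if results else 0.0
    needs_retraining = sorted(i for i, acc in per_intent.items() if acc < floor)
    return overall, per_intent, needs_retraining


results = (
    [("cancel", "cancel")] * 9 + [("cancel", "billing")]
    + [("billing", "billing")] * 7 + [("billing", "refund")] * 3
    + [("hours", "hours")] * 5
)
overall, per_intent, flagged = intent_accuracy(results)
print(f"overall {overall:.0%}")  # overall 84%
print(flagged)                   # ['billing']
```

Here overall accuracy is 84%, below the 90% production target, and billing, at 70%, is the intent to retrain first.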

Containment rate shows what percentage of conversations the bot resolves without human escalation. A healthy range is 60-80%, depending on your industry. Below 50% means the bot isn't adding value. Above 90% might mean it's not escalating cases it should be.

Resolution accuracy checks whether contained conversations were actually resolved correctly. This requires transcript sampling since you can't automate it reliably. We pull a random 10% sample weekly and verify the bot's answers against the knowledge base.

Customer satisfaction score (CSAT) after bot conversations tells you the user's perspective. Compare bot CSAT to human agent CSAT. If the gap exceeds 15 points, your bot quality needs work. Use built-in feedback features to collect this data passively.

Time to resolution measures how long it takes from the first user message to a confirmed resolution. Faster isn't always better. A bot that resolves in 30 seconds but gets it wrong creates more work than one that takes 90 seconds and gets it right.
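The containment, resolution accuracy, and CSAT checks above can be scripted into a small weekly scorecard so containment is never read without accuracy next to it. A minimal sketch; the function names are hypothetical, and because this section doesn't say how to read 50-60% or 80-90% containment, the sketch labels those bands "borderline" as an assumption.

```python
import random


def containment_band(rate):
    """Read a containment rate (0-1) against the ranges in this section."""
    if rate < 0.50:
        return "low: the bot isn't adding value"
    if rate > 0.90:
        return "high: check for cases it should have escalated"
    if 0.60 <= rate <= 0.80:
        return "healthy"
    return "borderline: review alongside accuracy"  # assumed label


def audit_sample(contained_ids, fraction=0.10, seed=None):
    """Pick the random share of contained conversations to verify by hand."""
    if not contained_ids:
        return []
    k = max(1, round(fraction * len(contained_ids)))
    return random.Random(seed).sample(contained_ids, k)


def resolution_accuracy(audit_labels):
    """audit_labels maps conversation ID -> True if the bot's answer was correct."""
    return sum(audit_labels.values()) / len(audit_labels) if audit_labels else 0.0


def csat_gap_exceeded(bot_csat, human_csat, max_gap=15):
    """True when bot CSAT trails human-agent CSAT by more than max_gap points."""
    return human_csat - bot_csat > max_gap


print(containment_band(0.72))                         # healthy
print(csat_gap_exceeded(bot_csat=68, human_csat=86))  # True (18-point gap)
sample = audit_sample([f"conv-{i}" for i in range(1, 401)], seed=7)
print(len(sample))                                    # 40
```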

Getting Started with Chatbot QA

If you're running a chatbot without a QA process, start small. Pull your last 100 conversations and read through them. Tag each one as resolved correctly, resolved incorrectly, or escalated unnecessarily. That exercise alone will show you where your biggest quality gaps are.

From there, build a test suite targeting those gaps. Set up basic monitoring for containment rate and user satisfaction. Schedule a weekly 30-minute transcript review. You don't need a complex QA operation on day one. You need a habit of checking whether your bot actually works.

If you want a chatbot that sounds human and resolves issues accurately, QA is the work that makes that possible. No amount of NLP sophistication replaces the discipline of testing what your bot says before your customers hear it.

Frequently Asked Questions

What is the difference between QA and chatbot testing?

Chatbot testing is a subset of chatbot QA. Testing focuses on verifying specific functionality: does intent X fire correctly, does conversation flow Y reach its endpoint. QA is the broader process that includes testing, monitoring, transcript review, feedback analysis, and continuous improvement. You can test a chatbot without doing QA, but you can't do QA without testing.

How do you perform quality assurance on a chatbot?

Start by defining measurable benchmarks for accuracy, containment, and satisfaction. Build a test suite from real user messages, not synthetic examples. Run those tests before every deployment. Monitor live performance metrics daily. Review conversation transcripts weekly. Feed findings back into training data. The cycle never stops because your bot's environment constantly changes.

Why is chatbot QA important for businesses?

Because chatbots are increasingly the first point of contact with your customers. According to Jotform, 70% of CX leaders have restructured their customer experience around AI-driven interactions. Without QA, you're trusting that restructuring to an untested system. The cost of bad bot experiences compounds: lost customers, increased support load, and brand damage that takes months to repair.

What tools are commonly used for chatbot QA?

The tool stack typically includes an analytics dashboard for real-time monitoring (tracking containment rate, response time, and satisfaction scores), an automated testing framework for regression testing against your test suite, a conversation review tool for manual transcript audits, and a sentiment analysis tool for detecting user frustration patterns that raw metrics miss.

How often should you run chatbot QA?

Automated regression tests should run with every deployment. Live metric monitoring should run continuously. Manual transcript reviews should happen weekly. Full strategy reviews should happen quarterly. The cadence depends on conversation volume: a bot handling 10,000 conversations per day needs more frequent audits than one handling 100.