A robotic chatbot still feels like the worst kind of phone tree, only faster. The economics tell the same story as the experience: AI now powers 68% of the global chatbot market, up from 40% in 2023, and customer satisfaction with chatbots has climbed to 72-78% — but only when the bot actually sounds like a person who wants to help. The gap between a humanized assistant and a script reader is the difference between a 4-minute resolution and a 9-message escalation. This guide walks through six ways to close it.

To make a chatbot sound more human, give it one consistent persona, write empathy into every fallback, vary sentence length so it stops sounding like a form letter, adapt to the user's mood, add light personalization where it earns its keep, and train it on real customer conversations instead of generic intent libraries. Persona, empathy, and language variety do most of the work.

A human-sounding chatbot is one that holds a single, recognizable persona, mirrors the user's emotional register, varies its phrasing, and resolves intent the way a competent support rep would — without breaking character at the first edge case.

Why Should Chatbots Sound Human in 2026?

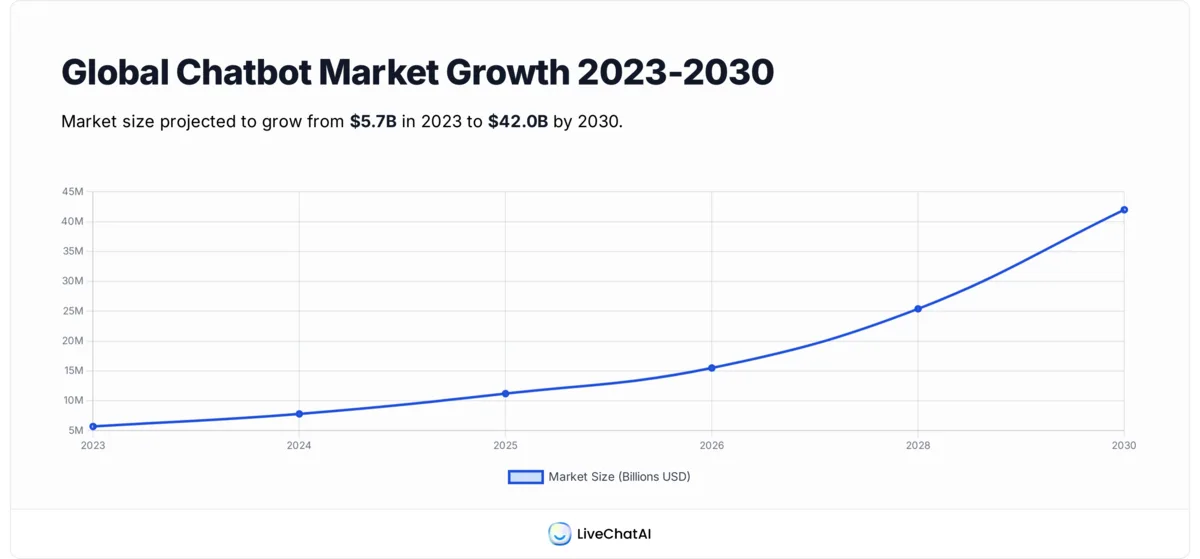

The short answer is money and patience. The global chatbot market is worth $15.5 billion in 2026, up from $5.7 billion in 2023, and forecasters now project it to hit $42 billion by 2030. According to Conferbot's 2026 chatbot report, AI-driven chatbots already account for 68% of that market — a jump from 40% in 2023. Buyers are not asking whether to deploy a bot. They are asking why so many of them still sound like a 2017 IVR menu.

The user-side data is just as direct. According to a Forbes analysis on AI humanization, customer satisfaction with AI chatbots has risen to 72-78% in 2026, compared with 55-65% in 2023. The lift is real, but it tracks almost entirely with the bots that hold a tone, a name, and a reasonable answer for messy questions. Strip those away and you get the same complaints customer ops teams have logged for a decade: cold replies, dropped context, escalation loops.

The Importance of Your Bot's Personality

Personality is the part most product teams skip because it doesn't show up in the integration checklist. It should. A consistent persona — name, register, point of view, three or four trait words — is what lets a customer trust the bot enough to ask the second question. Without it, every reply reads like a fresh stranger answering, which is exactly how AI-generated text tends to feel by default.

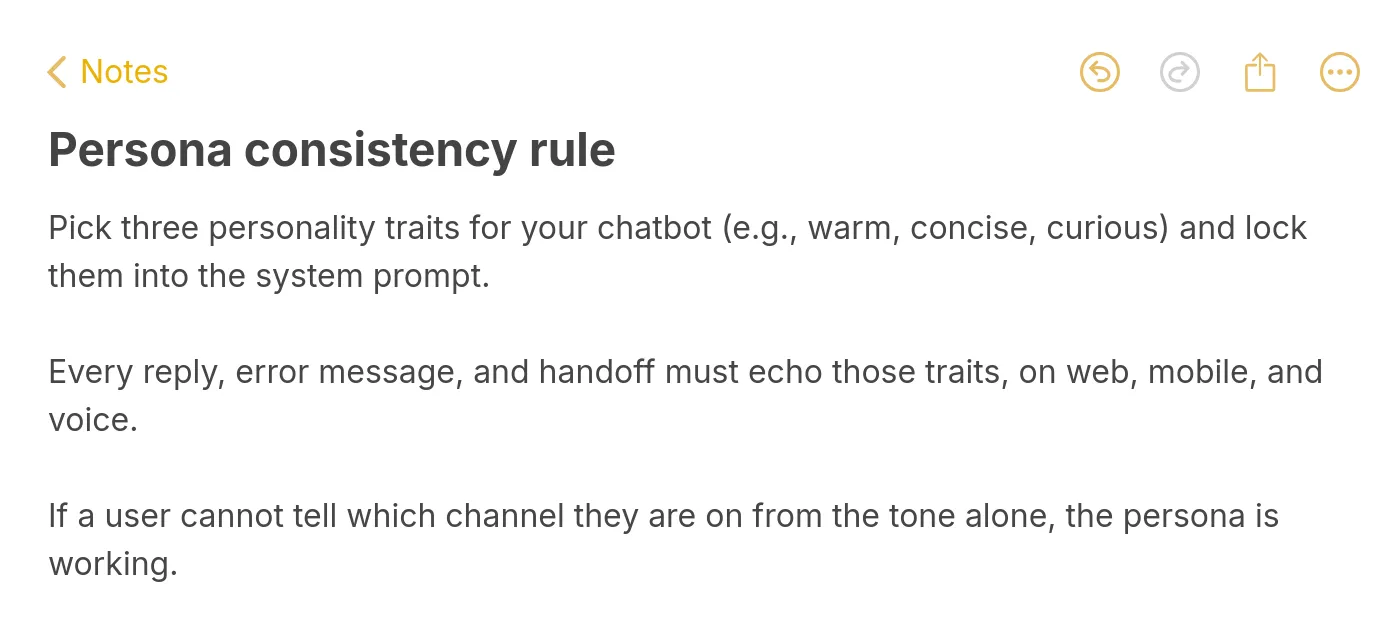

What makes a persona consistent is boring discipline: one base prompt, one tone reference doc, one short list of things the bot won't say. The brand-voice guide your marketing team wrote for blog posts is the right starting point. If your blog sounds warm and direct, your bot can't suddenly write like a legal disclaimer. The voice has to carry across the website chat widget, the in-app helper, the WhatsApp handoff, and the email follow-up. Drift between channels is the fastest way to break the illusion.

The other half of personality is restraint. A bot doesn't need 14 personality traits or a three-paragraph backstory. It needs three to five trait words ("calm, plain-spoken, slightly dry"), one or two signature phrases used sparingly, and a hard stop on emoji and exclamation marks unless the channel and audience genuinely call for them. Over-personality is just as detectable as no personality — and it usually comes from copy that tries to sound like a friend instead of a competent helper.

6 Ways to Make Your Chatbot Sound More Human

The six strategies below are ordered roughly by impact-per-effort — how much the change moves the perceived-human needle for the least implementation work. Persona work pays off in days. Training on real conversations takes weeks but compounds. Pick the one your bot is weakest on first.

1. Establish a Clear Persona

A clear persona is a written, version-controlled definition of who the bot is: name, role, tone register, three to five trait words, one or two signature phrases, and an explicit list of things it won't say. This is not a marketing exercise. It's the spec your base prompt and your QA reviewers both work from when a reply feels off.

How to implement:

1. Write a one-page persona doc. Name, role, register (formal / neutral / casual), three to five trait adjectives, two signature phrases, two anti-phrases ("does not say 'absolutely!', does not apologize for things outside its scope"). Keep it under 250 words so the team actually reads it.

2. Translate the doc into a base prompt. Lead with the role and trait words, then constraints, then escalation rules. Avoid stacking five "be helpful, be friendly, be warm" instructions — they collapse into mush. See our chatbot persona guide for a full template.

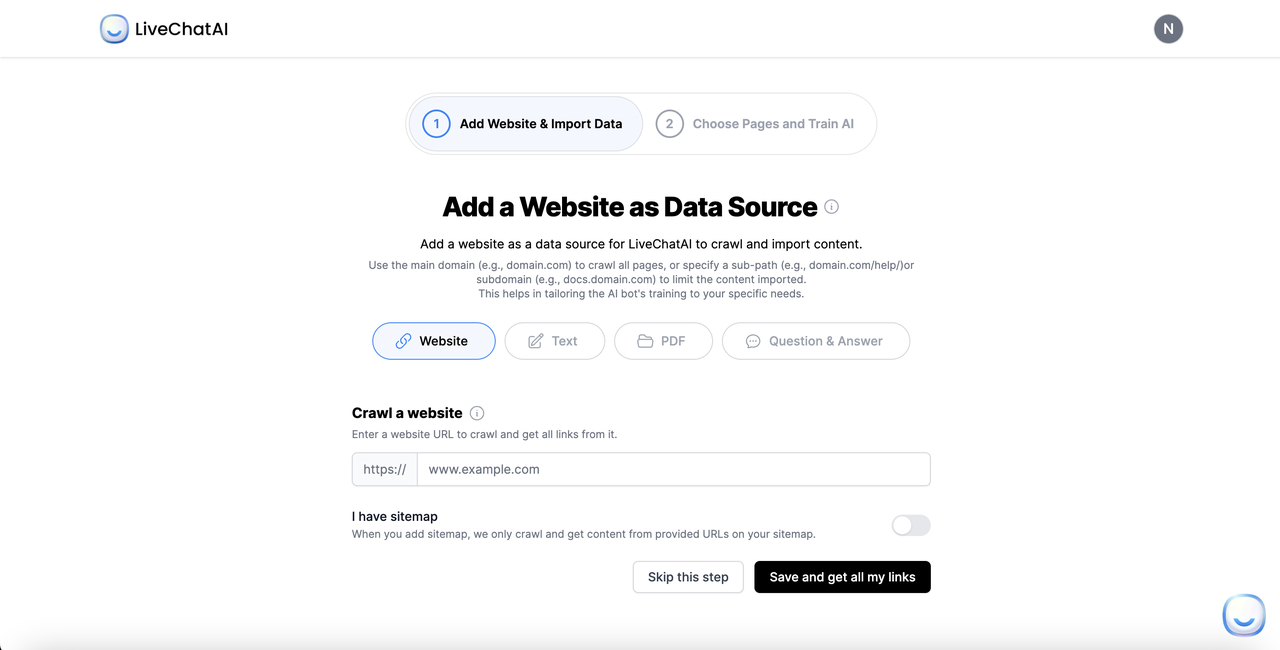

3. Pin the persona inside the system prompt, not as a one-off message — it has to survive every conversation reset. In LiveChatAI this lives in the Base Prompt field; in most platforms it's the system or instruction layer.

4. QA the first 100 conversations against the doc. Flag any reply that breaks register or leaks "as an AI language model" hedging. Tighten the prompt where you find drift.
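
The first two steps above can be sketched in a few lines of Python. This is a minimal illustration, not LiveChatAI's format: the `Persona` class, its field names, and `build_base_prompt` are all hypothetical, and the output is just a string you would paste into whatever system-prompt field your platform exposes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    """One-page persona spec. Every field name here is illustrative."""
    name: str
    role: str
    register: str                          # "formal", "neutral", or "casual"
    traits: tuple[str, ...]                # three to five trait words
    signature_phrases: tuple[str, ...] = ()
    anti_phrases: tuple[str, ...] = ()     # things the bot won't say

    def __post_init__(self) -> None:
        # Enforce the "three to five trait words" rule from the persona doc.
        if not 3 <= len(self.traits) <= 5:
            raise ValueError("use three to five trait words")


def build_base_prompt(p: Persona, escalation_rule: str) -> str:
    """Order follows the text: role and traits, then constraints, then escalation."""
    lines = [
        f"You are {p.name}, a {p.role}. Your tone is {p.register}.",
        f"You are {', '.join(p.traits)}.",
    ]
    if p.signature_phrases:
        lines.append("Phrases you may use sparingly: " + "; ".join(p.signature_phrases))
    for phrase in p.anti_phrases:
        lines.append(f"Never say: {phrase}")
    lines.append(f"Escalation: {escalation_rule}")
    return "\n".join(lines)
```

Keeping the persona as data rather than as a hand-edited prompt string means the doc and the prompt can't drift apart: QA reviewers read the same fields the prompt is generated from.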

The teams I've watched do this well treat the persona doc the way a magazine treats a style guide. When a CSAT score dips, they re-read the doc before they touch the model. The bots that feel most human in real production traffic are almost never the ones running the most expensive model. They're the ones whose persona spec is a maintained one-page doc, not two lines buried in a prompt. Light persona work is also the cheapest fix on this list: a tightened base prompt rolled out on a Tuesday will move user perception before the next sprint review.

2. Use Empathy and Emotional Intelligence

Empathy in a chatbot context means recognizing the emotional register of a message and responding in a way that matches it before solving. A user who types "this is the third time I've been charged" is not asking for a help-center article. They're asking to be heard for two sentences and then refunded. Bots that skip the acknowledgment and jump straight to the fix read as cold, even when the answer is correct.

How to implement:

1. Run sentiment classification on every inbound message before generation. Most modern LLMs do this implicitly, but exposing the sentiment label to the prompt ("user sentiment: frustrated") gives you cleaner control than hoping the model picks it up.

2. Write three opener templates for each emotional state you track (neutral, frustrated, confused, urgent) and let the model pick. Keep each opener under 12 words so the acknowledgment doesn't turn into a performance of empathy.

3. Acknowledge first, then solve, then verify. "I get it, that's frustrating. Let me pull up the order — can you confirm the email on the account?" is the shape. Skipping any one of the three breaks the pattern.

4. Build escalation rules that fire on sustained negative sentiment, not just on the word "agent". Three frustrated messages in a row should route to a human even if the user never asks.
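
Here is one way the sentiment label, the opener templates, and the escalation rule could fit together. It's a sketch under assumptions: the sentiment labels are taken as given (the classifier that produces them is not shown), and `OPENERS`, `turn_context`, and `should_escalate` are hypothetical names, not part of any platform's API.

```python
# Three short openers per emotional state; the model picks one.
OPENERS: dict[str, list[str]] = {
    "neutral": ["Happy to help with that.", "Sure, let's take a look.", "Good question."],
    "frustrated": ["I get it, that's frustrating.", "That shouldn't have happened.", "Okay, let's get this fixed."],
    "confused": ["No problem, let's go step by step.", "That part trips people up.", "Let me make that clearer."],
    "urgent": ["On it right now.", "Let's sort this out fast.", "Understood, starting on this now."],
}


def turn_context(sentiment: str) -> str:
    """Build the per-turn prompt fragment that exposes the sentiment label."""
    label = sentiment if sentiment in OPENERS else "neutral"  # unknown labels fall back
    options = " | ".join(OPENERS[label])
    return (
        f"user sentiment: {label}\n"
        f"Open with one of these, then solve, then verify: {options}"
    )


def should_escalate(sentiments: list[str], run_length: int = 3) -> bool:
    """True when the last `run_length` user messages were all labeled frustrated."""
    recent = sentiments[-run_length:]
    return len(recent) == run_length and all(s == "frustrated" for s in recent)
```

The escalation check looks only at the label history, so it fires whether or not the user ever types "agent".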

According to the Forbes piece on AI humanizers, the same humanization techniques that lift chatbot CSAT into the 72-78% range can mislead users in sensitive contexts (mental health, medical advice) when the bot oversells its understanding. The honest read for B2B and e-commerce teams: empathy templates work, but they have to stop short of pretending the bot knows things it doesn't. A line like "I can see how that's stressful, but I want to loop in a human teammate before I guess on this" beats a confident wrong answer every time.

3. Vary Sentence Structure and Word Choice

Uniform sentence length is the single loudest AI tell. Default model output drifts toward 18-to-22-word declarative sentences with two subordinate clauses each. Real human writing — and real human speech — alternates: a fragment, a long sentence, a question, a four-word punch. Teaching the bot that rhythm is what makes its replies stop reading like a press release.

How to implement:

1. Add a sentence-rhythm rule to the base prompt. Something like: "Vary sentence length. Mix 5-8 word sentences with 15-25 word ones. Use the occasional fragment. Never write three same-length sentences in a row."

2. Ban the worst stiff connectors in the prompt — the formal academic transitions ("furthermore," "additionally," "in conclusion") that LLMs reach for by default. Replace with periods, "and," or nothing. This single rule changes the read of an output more than any persona tweak.

3. Use contractions by default. "Don't," "we've," "it's," "you'll." Models trained on formal corpora avoid them; force them back in.

4. Read 20 outputs aloud each week. Anything you'd never actually say out loud goes into a "fix this phrase" list and gets banned in the next prompt revision. Our chatbot tone of voice guide covers the full ban-list approach.
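
Reading outputs aloud catches the most, but a small lint can pre-screen replies before a human looks at them. The sketch below checks the two mechanical rules from the list: banned connectors and runs of same-length sentences. The function names are hypothetical, and the two-word tolerance for "same length" is an assumption you'd tune on your own transcripts.

```python
import re

BANNED_CONNECTORS = ("furthermore", "additionally", "in conclusion")


def sentence_lengths(text: str) -> list[int]:
    """Word count per sentence, splitting on ., !, or ? followed by whitespace."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def has_flat_run(lengths: list[int], run: int = 3, tolerance: int = 2) -> bool:
    """True if `run` consecutive sentences are within `tolerance` words of each other."""
    for i in range(len(lengths) - run + 1):
        window = lengths[i:i + run]
        if max(window) - min(window) <= tolerance:
            return True
    return False


def lint_reply(text: str) -> list[str]:
    """Return a list of rhythm problems found in one bot reply."""
    problems = []
    lowered = text.lower()
    for phrase in BANNED_CONNECTORS:
        if re.search(rf"\b{re.escape(phrase)}\b", lowered):
            problems.append(f"banned connector: {phrase}")
    if has_flat_run(sentence_lengths(text)):
        problems.append("flat rhythm: similar-length sentences in a row")
    return problems
```

Anything the lint flags goes on the same "fix this phrase" list as the read-aloud review; it doesn't replace that review.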

This strategy compounds with strategy 1. A clear persona tells the bot who it is; varied sentence structure stops it from sounding like every other LLM-powered assistant on the internet. By my rough estimate, the two together do around 60% of the perceived-human work, and the remaining strategies are sharpening, not foundations. If you only have a sprint, spend it here.

4. Adapt to User Context and Mood

Context adaptation is what separates a chatbot from a search box. The same question ("where's my order?") needs a different reply for a first-time buyer, a repeat customer with three open tickets, and a user who asked the same thing 90 seconds ago. A bot that responds identically in all three cases reveals that nothing is actually being read, only matched.

How to implement:

1. Pass user metadata into every prompt — order count, account age, plan tier, last interaction timestamp, current page URL. The model can't adapt to context it doesn't see.

2. Define three response modes by recency: first message ("introduce, scope, ask"), mid-conversation ("answer, verify, offer next step"), repeat question within 5 minutes ("acknowledge the repeat, change the approach, offer escalation").

3. Use page context as a tiebreaker. A user on /pricing asking "how does it work" wants product flow, not API docs. Wire the current URL into the system prompt.

4. Layer in mood-based triggers from the chat widget itself. Time on page, scroll depth, and bounce signals can fire a different opener — see our live chat triggers guide for the full set.
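
The recency modes and the metadata pass-through can be sketched as below. Assumptions are stated up front: how you detect that a question repeats an earlier one is out of scope (the caller supplies `last_asked`), and the metadata keys in the usage note are examples, not a required schema.

```python
from datetime import datetime, timedelta
from typing import Optional

REPEAT_WINDOW = timedelta(minutes=5)

# Mode names are illustrative; the instructions are the three shapes from the text.
MODE_INSTRUCTIONS = {
    "first_message": "introduce, scope, ask",
    "mid_conversation": "answer, verify, offer next step",
    "repeat_question": "acknowledge the repeat, change the approach, offer escalation",
}


def pick_mode(turn_index: int, now: datetime, last_asked: Optional[datetime]) -> str:
    """`last_asked` is when the user last asked this same question, or None."""
    if last_asked is not None and now - last_asked <= REPEAT_WINDOW:
        return "repeat_question"
    if turn_index == 0:
        return "first_message"
    return "mid_conversation"


def context_block(metadata: dict[str, object], mode: str) -> str:
    """Render only the signals that are actually present, then the response mode."""
    lines = [f"{key}: {value}" for key, value in metadata.items() if value is not None]
    lines.append(f"response mode: {MODE_INSTRUCTIONS[mode]}")
    return "\n".join(lines)
```

Passing something like `{"plan_tier": "pro", "current_url": "/pricing", "order_count": None}` drops the missing order count instead of sending a blank to the model, which is the "only pass what you trust" rule in practice.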

The honest limit on this strategy is data quality. If your CRM doesn't sync cleanly into the chat platform, context-aware replies fall back to generic ones — sometimes worse than a plain bot, because the user can tell something was supposed to happen and didn't. Start with the two or three signals you can pass reliably (account tier, current URL, last-order-status) and expand only when you trust the pipeline. Half-broken personalization reads worse than none.

5. Add Strategic Humor and Personalization

Humor is the riskiest item on this list. When it lands it makes a bot feel unmistakably human; when it misses it reads as a brand trying too hard. The rule that's worked for the LiveChatAI customers I've reviewed: humor is allowed in low-stakes turns (greetings, "I don't know" admissions, off-topic small talk) and banned in high-stakes ones (billing, refunds, outages, anything sensitive). Personalization follows the same gradient.

How to implement:

1. Pick one humor register and stay in it — dry, warm, playful, deadpan. Mixed humor reads as inconsistent and is worse than flat.

2. Restrict humor to specific conversation states in the prompt: opener, fallback ("I don't know that one — want me to find a human who does?"), and farewell. Never inside a transactional reply.

3. Personalize on facts the user already gave you in this conversation, not on stalker-grade data. "Got it, you mentioned the Pro plan earlier — that includes the analytics module" is fine. "Welcome back, [first name from the cookie]!" is uncanny.

4. A/B test humor toggles by segment. B2B enterprise traffic frequently rates humorless bots higher; consumer e-commerce traffic frequently rates the opposite. Don't guess — split-test for two weeks.
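
A humor gate is easy to enforce in code rather than leaving it to the prompt alone. The sketch below assumes your pipeline already labels each turn with a conversation state and an intent; the set contents, `humor_allowed`, and `ab_bucket` are hypothetical names for illustration.

```python
import hashlib

HUMOR_STATES = {"opener", "fallback", "farewell"}      # low-stakes turns
HIGH_STAKES_INTENTS = {"billing", "refund", "outage"}  # never joke here


def humor_allowed(state: str, intent: str, humor_enabled: bool = True) -> bool:
    """Humor only in low-stakes states, never on high-stakes intents."""
    if not humor_enabled or intent in HIGH_STAKES_INTENTS:
        return False
    return state in HUMOR_STATES


def ab_bucket(user_id: str, experiment: str = "humor-toggle") -> str:
    """Stable 50/50 split: the same user always lands in the same arm."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    return "humor_on" if int(digest, 16) % 2 == 0 else "humor_off"
```

Hashing the user ID, instead of picking randomly per session, keeps a returning user from seeing a joking bot one day and a flat one the next during the two-week test.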

The realistic expectation is small. Humor and light personalization probably contribute 5-10% of the perceived-human lift on top of strategies 1-4 — and they can subtract from it if applied to the wrong audience. The teams that treat humor as a finishing touch (last sprint, after persona and rhythm are locked) almost always get more out of it than the teams that lead with it. The ones that lead with it usually end up shipping a chatbot that sounds like a brand mascot, which is its own problem.

6. Train on Real Customer Conversations

The single most underrated humanization move is feeding the bot your actual support history — past tickets, resolved chats, internal Slack threads where your team explained a feature to a customer. Generic intent libraries produce generic-sounding bots. Bots trained on the words your real customers and real reps use sound like the brand because they're literally repeating its patterns.

How to implement:

1. Export the last 12 months of resolved support conversations from your help desk. Strip PII. Keep the question/answer pairs intact.

2. Add your help center, product docs, and any internal "how we explain X" pages as data sources. The product docs anchor accuracy; the conversations anchor tone.

3. Tag the high-quality replies — the ones where CSAT was 5/5 and the resolution was one message — and weight them. Train the bot to mimic the patterns that already worked, not the average of every reply.

4. Re-ingest monthly. The phrases your customers use shift with seasons, releases, and incidents. A bot trained on January transcripts in October will sound a half-step behind the room.
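
Steps 1 and 3 can be prototyped before you commit to any ingestion tooling. The sketch below is deliberately crude: the two regexes catch only obvious emails and phone numbers (real PII scrubbing needs much more), the `Ticket` fields are hypothetical, and the 3x weight for top-rated one-message resolutions is an arbitrary starting point.

```python
import re
from dataclasses import dataclass

# Crude patterns for illustration only; they will miss many PII formats.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def strip_pii(text: str) -> str:
    """Replace obvious emails and phone numbers with placeholders."""
    text = EMAIL.sub("[email]", text)
    return PHONE.sub("[phone]", text)


@dataclass
class Ticket:
    question: str
    answer: str
    csat: int            # 1-5 rating left by the customer
    agent_messages: int  # replies it took to resolve


def training_weight(t: Ticket) -> float:
    """Up-weight replies that scored 5/5 and resolved in one message."""
    return 3.0 if t.csat == 5 and t.agent_messages == 1 else 1.0


def prepare(tickets: list[Ticket]) -> list[dict]:
    """Keep question/answer pairs intact, scrubbed and weighted."""
    return [
        {
            "question": strip_pii(t.question),
            "answer": strip_pii(t.answer),
            "weight": training_weight(t),
        }
        for t in tickets
    ]
```

How the weight is consumed depends on your platform. If it has no weighting knob, duplicating or prioritizing the high-weight pairs in the data source is a rough substitute.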

This is the strategy with the longest payoff curve. The first week after re-ingestion, replies feel only slightly more on-brand. By week four, when the model has been queried against the new corpus enough times for the patterns to dominate retrieval, the bot starts using phrases your senior support rep would use — and that's the moment users stop noticing they're talking to software. For a deeper look at how the content-grounded approach plays out across industries, our examples of conversational AI piece walks through six setups end to end.

Common Mistakes That Make Chatbots Sound Robotic

Most of the time, the problem isn't that a team didn't apply the strategies above. It's that they layered one humanization tactic on top of five robotic defaults that drown it out. The five tells below are the ones that show up most often in the transcripts I audit.

1. Over-apologizing. "I'm so sorry to hear that, I truly apologize for the inconvenience, please accept my sincerest apologies" is three apologies in one sentence and reads as performance. Replace with one acknowledgment ("That's frustrating — let me fix it") and move to action.

2. Hedging on simple facts. "It appears that your order may possibly have been shipped" should be "Your order shipped Tuesday." If the bot knows, it should say. If it doesn't, it should say that, not bury uncertainty in modifiers.

3. Three-item parallel lists everywhere. AI defaults to triads ("fast, reliable, and easy to use") even when the underlying point has two or four parts. Force the bot to vary count: sometimes one, sometimes two, sometimes five. Anything but a metronome of three.

4. The "as an AI assistant" disclaimer. A bot that breaks character every other turn to remind you it's a bot is not being honest — it's being annoying. Disclose AI status once, in the opener or in the chat widget label, and then get on with the conversation.

5. Ignoring the channel. A chat widget reply formatted with three headers, a bulleted list, and a horizontal rule reads as a help article pasted into a conversation. Match the channel: chat is short paragraphs, voice is one sentence at a time, email can be longer. The same content in the wrong format breaks the human illusion faster than any word choice.
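
Three of these tells (over-apologizing, hedging, and the AI disclaimer) are countable, so a transcript audit can start with a simple tally. The patterns below are a small, assumed starter set built from the examples in this section, and `robotic_tells` is a hypothetical helper. Triads and channel formatting still need a human eye.

```python
import re

APOLOGY = re.compile(r"\b(sorry|apologi[sz]e|apologies)\b", re.IGNORECASE)
HEDGE = re.compile(r"\b(it appears|may possibly|might potentially)\b", re.IGNORECASE)
DISCLAIMER = re.compile(r"\bas an ai\b", re.IGNORECASE)


def robotic_tells(reply: str) -> dict[str, int]:
    """Count apology words, hedge phrases, and AI disclaimers in one reply."""
    return {
        "apologies": len(APOLOGY.findall(reply)),
        "hedges": len(HEDGE.findall(reply)),
        "ai_disclaimers": len(DISCLAIMER.findall(reply)),
    }
```

A reply with more than one apology, or any hedge on a fact the bot actually has, is a good candidate for the next prompt revision.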

How to Measure if Your Chatbot Sounds Human

Vibes-based reviews are not a measurement system. The five signals below are the ones worth tracking on a fixed cadence (weekly for the first four, monthly for the detector sample), and they correlate well with the perceived-human work in the strategies above.

• CSAT (or thumbs-up rate) on bot-only resolutions. The cleanest signal. Filter out conversations that escalated to a human and watch the bot-only score. A humanized bot typically lands 4-12 points above the baseline within 30 days.

• Escalation rate by intent. Track which intents drive escalations. If "billing" escalates 60% of the time, the bot's tone or scope is wrong on that intent specifically — not on the whole product.

• Messages-to-resolution. A human-sounding bot resolves in fewer turns because users trust the answers. Watch for the median, not the mean (one stuck conversation skews the average).

• Conversation depth. The number of turns per session before the user disengages. Robotic bots get one or two messages; humanized ones get four to seven before resolution or escalation. Depth is a leading indicator that something feels right.

• AI-detector pass rate. Run a sample of 50 replies through GPTZero or a similar detector once a month. The goal is not to fool detectors — it's to see whether your prompt revisions are actually moving the rhythm and lexical-diversity scores the detectors track.
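
The first three signals fall out of a conversation export with a few lines of standard-library Python. The record shape here (`intent`, `messages`, `resolved`, `escalated`, `csat`) is an assumption about what your help desk exports, so rename the keys to match your own data.

```python
from collections import defaultdict
from statistics import mean, median
from typing import Optional


def messages_to_resolution(conversations: list[dict]) -> dict[str, float]:
    """Report both, but watch the median: one stuck chat drags the mean up."""
    counts = [c["messages"] for c in conversations if c["resolved"]]
    return {"median": median(counts), "mean": mean(counts)}


def escalation_rate_by_intent(conversations: list[dict]) -> dict[str, float]:
    """Share of conversations per intent that ended with a human."""
    totals: dict[str, int] = defaultdict(int)
    escalated: dict[str, int] = defaultdict(int)
    for c in conversations:
        totals[c["intent"]] += 1
        if c["escalated"]:
            escalated[c["intent"]] += 1
    return {intent: escalated[intent] / totals[intent] for intent in totals}


def bot_only_csat(conversations: list[dict]) -> Optional[float]:
    """Average rating over conversations that never reached a human."""
    scores = [
        c["csat"] for c in conversations
        if not c["escalated"] and c["csat"] is not None
    ]
    return mean(scores) if scores else None
```

On a sample where one conversation ran to 40 messages and the rest took 3 to 5, the mean lands at 13 while the median stays at 4.5, which is why the median is the number to watch.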

Future Trends in Conversational AI for 2026

The next 12-18 months are about three shifts. Voice is the first. Voice-first chat interfaces — phone, smart speaker, in-app voice mode — are growing faster than text widgets, and the humanization rules change: prosody, pause length, and breath sounds matter as much as word choice. A bot that reads brilliantly on screen can sound stilted the moment it's spoken aloud.

The second shift is emotional-intelligence routing. Sentiment classification is moving from "label the message" to "label the trajectory of the conversation." Bots will route to humans not on a single angry message but on a sequence (a calm question, then a confused follow-up, then a flat reply) that signals quiet disengagement before the user types "agent." The teams I've talked to running early versions of this report 20-30% drops in unnecessary escalations and matching lifts in resolved-by-bot rates.

The third is multimodal context. A user uploading a screenshot, a photo of a damaged product, or a short video clip is increasingly common, and the bots that can actually reason over those inputs feel orders of magnitude more human than the ones that respond with "I can't view images." The honest caveat: multimodal handling adds latency and cost, and rolling it out without trimming the rest of the prompt usually makes overall response time worse, which kills the human-feel gain.

Underneath all three, the same content-grounding principle keeps winning. Bots that retrieve from your real corpus — docs, transcripts, internal notes — stay anchored to your brand even as the surface (voice, sentiment routing, multimodal) changes. The platforms that ship those grounding tools as a default will widen the gap on the ones that don't.

Pick One Way to Humanize Your Bot This Week

Six strategies is a list, not a roadmap. The honest play is to pick one — the one your bot is weakest on — and ship it before the next sprint review. If your bot has no written persona, start there: a one-page doc and a tightened base prompt will move CSAT inside two weeks. If the persona is solid but replies still feel mechanical, attack sentence rhythm: ban the stiff academic connectors, force contractions, and read 20 outputs aloud. If both are in good shape, the next move is almost always training on real conversations — slow to land, but the only one that compounds. The bots that sound human in production aren't the ones running every strategy at once. They're the ones whose team picked one, finished it, and then picked the next one.

Frequently Asked Questions

What prompts make ChatGPT sound more human?

The prompts that work best for chatbot output (not standalone ChatGPT writing) define a persona, ban the stiff academic connectors LLMs default to, force contractions, require sentence-length variation, and restrict humor to specific conversation states. A typical opener: "You are [name], a [role] who is [3-5 trait words]. Vary sentence length: mix 5-8 word sentences with 15-25 word ones. Use contractions. Never write three same-length sentences in a row. Acknowledge emotion before solving." Pin it in the system prompt so it survives every reset.

How do you make AI sound more human in a prompt?

The reliable pattern is rules-not-adjectives. Vague instructions like "sound natural and friendly" produce mush; specific bans and forced behaviors produce real change. Tell the model what not to do (no triadic lists, no "as an AI" hedging, no over-apologies, no five-syllable Latinate words when a one-syllable Anglo-Saxon one fits), and require behaviors it skips by default (contractions, fragments, rhetorical questions, varied sentence length). Combine with a one-paragraph persona at the top of the prompt and you'll see the lift inside the first 50 outputs.

How do you measure if a chatbot sounds human?

The five signals worth tracking are bot-only CSAT, escalation rate by intent, messages-to-resolution (median, not mean), and conversation depth, all weekly, plus AI-detector pass rate on a monthly sample of 50 replies. Bot-only CSAT is the headline number: a humanized bot typically lands 4-12 points above the robotic baseline within 30 days of a persona and rhythm overhaul. The AI-detector pass rate is a process metric, not a goal: a rising pass rate means your prompt revisions are moving the rhythm and lexical patterns detectors track, which is what you want.

For further reading, you might be interested in the following:

• Chatbot Persona: What It Is, How to Create One & Examples

• How to Choose Your Chatbot Tone of Voice — 8 Vital Steps