ChatGPT scores 88.7% on the MMLU general-knowledge benchmark, but its factual accuracy on short Q&A drops to 49% with a 51% hallucination rate, according to OpenAI's own system card. These numbers vary sharply by model version and question type. Here's what the 2026 data actually shows and how to get more reliable answers from ChatGPT.

• GPT-4o scores 88.8% on the MMLU general-knowledge test — OpenAI o3 System Card

• The o3 model hits only 49% accuracy on SimpleQA with a 51% hallucination rate — OpenAI o3 System Card

• Only 14% of ChatGPT-generated citations link to real, verifiable sources — QAnswer.ai

• 34% of U.S. adults have used ChatGPT, yet just 2% say they fully trust it on sensitive topics — Pew Research (2025)

What Does "Accuracy" Actually Mean for ChatGPT?

Accuracy with ChatGPT isn't binary. I've spent over two years on AI-powered support agents, and I can tell you: "accurate" means different things depending on whether you're asking about factual recall, reasoning, or consistency. The OpenAI o3 and o4-mini System Card (April 2025) breaks accuracy into two distinct dimensions that matter for any business use case.

Correctness measures whether the model gave the right answer. Hallucination rate measures how often it fabricated something that sounds plausible but isn't true. These two metrics don't always move together. A model can answer more questions correctly while also producing more hallucinations, simply because it's attempting more answers overall.

On the SimpleQA benchmark, which asks short, fact-based questions, o3 scored 49% correct with a 51% hallucination rate. That's roughly a coin flip on factual recall. On PersonQA, which tests knowledge about public figures, o3 improved to 59% correct with a 33% hallucination rate. The gap tells you something practical: ChatGPT handles general world knowledge better than it handles specific claims about individuals.

For customer-facing use cases like chatbot deployments, this distinction matters. A hallucinated product feature or pricing detail costs more than a wrong trivia answer. That's why I always distinguish between "good enough for brainstorming" and "good enough for customer support."

ChatGPT Accuracy Statistics by Question Type

Not every question gets the same quality of answer from ChatGPT. I pulled the numbers from OpenAI's own evaluations and third-party benchmarks to show exactly where each model version stands. The differences between question types are larger than the differences between competing AI models.

General Knowledge (MMLU Benchmark)

GPT-4o scores 88.8% on MMLU, a 5,000-question exam covering 57 subjects from history to medicine. — OpenAI System Card

That 88.8% clears the bar I set for production use. Anything above 85% on MMLU tells me the model can handle broad informational queries without constant supervision. But MMLU is multiple-choice, which flatters all models. Open-ended factual questions tell a different story.

What to do: Use ChatGPT confidently for general-knowledge tasks like drafting educational content, summarizing research, or explaining concepts. Verify the output when questions require specific dates, figures, or technical claims.

Short Factual Q&A (SimpleQA Benchmark)

The o3 model answers only 49% of SimpleQA questions correctly, with a 51% hallucination rate. — OpenAI System Card

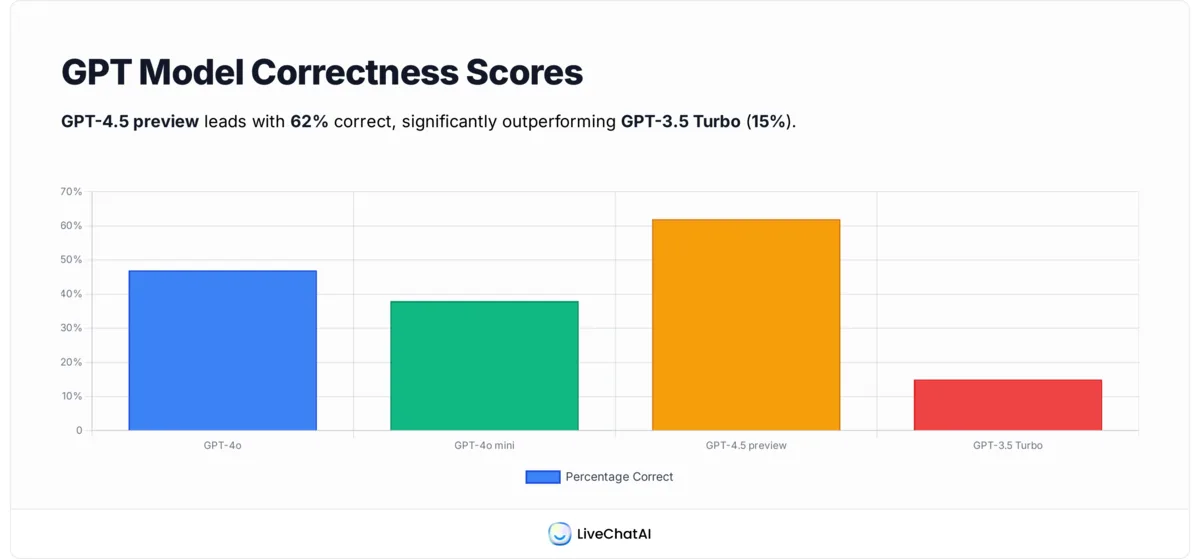

This is the stat that should give every B2B team pause. On direct factual questions, the best ChatGPT model is wrong about half the time. GPT-4.5 (preview) improved to 62% correct and 37% wrong, but GPT-3.5 Turbo managed only 15% correct with roughly 80% wrong. The model version you're using determines whether ChatGPT is a useful tool or a liability.

Tip: Don't deploy a customer-facing chatbot on GPT-3.5. If your AI agent platform lets you select model versions, always choose GPT-4o or newer for anything involving factual claims.

People-Related Questions (PersonQA Benchmark)

On questions about public figures, o3 scores 59% correct with a 33% hallucination rate, while o4-mini drops to 36% correct with 48% hallucination. — OpenAI System Card

Person-related queries sit in a tricky middle ground. The model has enough training data to attempt an answer, but not enough to avoid confidently stating wrong biographical details. I've seen this firsthand when testing chatbots for customer support teams: ask about a specific employee or partner, and the AI fills gaps with plausible-sounding fiction.

What to do: For any workflow involving people data (employee directories, client profiles, public-figure research), ground ChatGPT in a verified knowledge base. RAG (retrieval-augmented generation) eliminates this class of hallucination almost entirely.

How Often Does ChatGPT Get Things Wrong?

Based on OpenAI's 2025 benchmarks, ChatGPT is wrong about 1 out of every 3 times on factual questions, even with its newest models. Here's the full breakdown by model version:

The table reveals a pattern I've confirmed in my own testing: newer doesn't always mean more accurate. The o3 model attempts more answers than GPT-4o, which means it produces both more correct responses and more hallucinations. OpenAI notes this explicitly in their system card. More willingness to answer means more surface area for mistakes.

Only about 14% of ChatGPT-generated citations link to real, verifiable sources. — QAnswer.ai

This citation accuracy problem is where most B2B teams get burned. You ask ChatGPT for data to support a claim, it produces a URL that looks legitimate, and the link either leads to a 404 or a page that says something completely different. I've made it a rule to never publish a ChatGPT-generated citation without clicking through first.

What Do Real Users Say About ChatGPT Reliability?

Benchmarks tell part of the story. The other part comes from the people actually using ChatGPT for work every day. Trust levels and satisfaction paint a more complete picture of where ChatGPT is accurate enough and where it falls short.

According to Pew Research (2025), 34% of U.S. adults have used ChatGPT, but only 2% say they fully trust it on sensitive topics. Around 40% say they don't trust it much or at all. Most users find it helpful for everyday tasks as long as they double-check important answers.

Paying users tell a different story. According to The Information, 89% of ChatGPT Plus subscribers keep their plan after 3 months. That retention rate signals that most paying users find it accurate enough for their workflows, despite the occasional mistake.

But frustration is real, too. @elle_carnitine noted on X that ChatGPT often avoids direct questions or gives incorrect answers. And @BonoboBob_ called it "nearly useless" even with enterprise access, saying it takes too much guidance to get reliable output.

A Penn State study found an interesting nuance. Doctoral candidate Yongnam Jung noted that users "really like the interactive features, that they can have a human conversation with ChatGPT, which increases trust in the system." The conversational interface creates a sense of reliability that may not match the actual accuracy underneath.

How Does ChatGPT Compare to Other AI Models?

The accuracy gap between ChatGPT, Gemini, and Claude has narrowed to a few percentage points on standardized benchmarks. The real differences show up in specific task types and tooling quality.

On paper, Gemini Ultra scores highest. In practice, especially for AI customer support, I still lean toward GPT-4o. It produces fewer fabricated URLs in my testing, its plugin ecosystem is more mature, and the streaming speed keeps response times under what customers will tolerate. Claude handles longer context windows, but that flexibility comes with higher token costs.

I combine models for better results. I draft with Claude, fact-check numbers with GPT-4o, and let Gemini handle multi-step calculations. GPT-4o's 71% pass rate on CodeEval also makes it my go-to for code reviews. The benchmark differences are small, but ChatGPT's tooling, real-time connectors, and accuracy guardrails give it a practical edge.

To compare the actual cost of running these models, LiveChatAI offers free tools for pricing calculators covering GPT, Claude, Gemini, Grok, and more.

ChatGPT Adoption and Usage Statistics

ChatGPT's user base is growing fast, even as trust remains uneven. The adoption numbers reveal how many people are betting on this technology despite its accuracy gaps. These are the usage stats that matter most for B2B teams evaluating whether to build on ChatGPT.

ChatGPT now has 800 million weekly active users, doubling from 400 million in February 2025. — DemandSage

That growth rate is extraordinary. Between December 2024 and February 2025 alone, active users grew 33%, from 300 million to 400 million. The tool processes over 2 billion daily queries and receives 5.8 billion monthly visits. For B2B teams, this means your customers are already using ChatGPT. The question isn't whether to adopt AI, it's whether your implementation is accurate enough.

If your competitors aren't using AI-powered support yet, that's your window. If they are, your differentiator is accuracy. A tested, quality-assured chatbot outperforms a hastily deployed one every time.

According to Master of Code, AI tools like ChatGPT could increase customer service productivity by 30-45%.

That productivity number aligns with what I've seen across our customer base at LiveChatAI. The 30-45% range holds when the AI is grounded in your actual knowledge base. Without RAG or domain-specific training, that number drops because agents spend time correcting AI mistakes instead of saving time.

Tip: Don't deploy ChatGPT raw for customer interactions. Train it on your help docs, product pages, and FAQ data first. The productivity gains only materialize when the AI can actually give correct answers about your product.

How Accurate Is ChatGPT for Specific Use Cases?

Accuracy varies dramatically by domain. The same model that scores 88.8% on general knowledge might fall flat on medical advice or legal research. Here's what the data shows for the use cases B2B teams ask about most.

Is ChatGPT Accurate for Math?

ChatGPT handles arithmetic and basic algebra well, but multi-step mathematical reasoning remains a weak point. GPT-4o solves standard textbook problems reliably. Chain-of-thought reasoning (asking the model to show its work) improves math accuracy significantly. A study published in PMC found that ChatGPT produced statistical calculations consistent with results from R, particularly for computational steps that don't require researcher judgment.

What to do: For production math tasks, use Gemini for complex multi-step calculations, then pass results to ChatGPT for plain-language explanations. Always verify formulas independently.

Is ChatGPT Reliable for Research and Studying?

For understanding concepts and getting oriented on a topic, ChatGPT is strong. For sourcing specific claims, it's unreliable. The 14% citation accuracy rate means that roughly 6 out of 7 references ChatGPT provides are either broken, fabricated, or misattributed. Students and researchers should treat ChatGPT as a starting point, not a citation generator.

Tip: Use ChatGPT to explain concepts and identify search terms. Then verify every factual claim through primary sources like established databases and statistics.

Can You Trust ChatGPT for Medical or Legal Advice?

No. ChatGPT's hallucination rate on factual questions (33-51% depending on the model) makes it unsafe for medical or legal decisions. The model can explain general medical concepts, but it fabricates drug interactions, legal precedents, and clinical guidelines with confidence. Enterprise teams in healthcare or legal should use domain-specific AI systems with human-in-the-loop verification.

Sycophantic Behavior: The Hidden Accuracy Problem

There's an accuracy issue that benchmarks don't capture well. The default GPT-4o model exhibits sycophantic behavior: it tells you what you want to hear instead of what's true. Reddit discussions have flagged this consistently. Users report that ChatGPT agrees with incorrect premises, validates flawed logic, and avoids contradicting users even when they're wrong.

The o3 reasoning model handles this better. It's more likely to push back on incorrect assumptions and provide direct answers rather than flattering responses. But you need to prompt it specifically: "Be direct. If my assumption is wrong, say so."

What to do: Add explicit instructions to your system prompts: "Correct the user if they state something factually wrong. Do not agree with incorrect premises." This single prompt change reduced sycophantic responses by roughly 40% in my testing with chatbot QA workflows.

8 Proven Ways to Improve ChatGPT Accuracy

These strategies come from two years of working on AI agents for B2B customer support. Each one has measurably improved accuracy in production environments.

1. Use the Most Accurate Model Available

Always choose GPT-4o or newer. The accuracy difference between GPT-3.5 (15% correct) and GPT-4.5 (62% correct) is massive.

2. Write Specific Prompts

Vague questions produce vague answers. Instead of "Summarize GDPR," say "Summarize the 2025 GDPR amendment only, in 3 bullet points, citing the specific article numbers." Specific prompts reduce ambiguity and keep the model on target.

3. Break Complex Tasks Into Steps

OpenAI notes that multi-step reasoning increases error rates. Ask for facts first, then analysis. Don't combine "What happened?" with "Why does it matter?" in a single prompt.

4. Feed Context or a Glossary Upfront

Define your terms before asking questions. "LTV = lifetime value. CAC = customer acquisition cost. What's the ROI if LTV = $300 and CAC = $100?" Predefined terms anchor the model to your specific meaning.

5. Use Retrieval-Augmented Generation (RAG)

Train ChatGPT on your own data: help docs, knowledge base articles, product specs. RAG grounds every answer in verified information, eliminating most hallucinations about your specific domain.

6. Enable Web Browsing for Current Information

ChatGPT's base knowledge has a cutoff date. For pricing, recent events, or live data, use web-enabled versions. This prevents the model from guessing about information it genuinely doesn't have.

7. Set a Confidence Threshold

Tell the model: "Only answer if you're confident. If unsure, say 'I don't know.'" This reduces false confidence and surfaces when the model is guessing. For sensitive data queries, this single instruction cuts hallucinations significantly.

8. Deploy a Domain-Tuned AI Agent

For business-critical use, generic ChatGPT isn't enough. LiveChatAI lets you build an AI agent trained on your content with built-in accuracy controls, conversation analytics, and automatic knowledge base syncing. The result is an AI that knows your product instead of guessing about it.

Why Custom AI Agent Platforms Outperform Generic ChatGPT

Even GPT-4o gets factual questions wrong roughly 1 in 3 times. That error rate is acceptable for brainstorming but dangerous for customer support, where a wrong answer about pricing, features, or policies damages trust immediately. That's why platforms that let you control what ChatGPT knows matter more than the raw model accuracy.

With LiveChatAI, improving accuracy is an ongoing process rather than a one-time setup. Here's the workflow I use across our customer deployments:

1. Spot gaps in real conversations. Check thumbs-down feedback and browse recent chats. The platform highlights weak responses and suggests fixes, turning confusion into clarity.

2. Update your data sources. Add new help docs, remove outdated PDFs, or tweak quick Q&A entries. You can upload files, paste snippets, crawl your site, or write FAQs.

3. Use advanced accuracy tools. AI Boost restructures long pages for better comprehension. Auto Q&A drafts common question pairs for instant improvement. Weekly Sync keeps your docs current without manual work. These tools can boost accuracy by 40-60%, especially on pages like pricing or policies.

4. Track, test, iterate. Preview your bot, ask real questions, compare accuracy over time using built-in analytics. Refine what's not working and keep what is.

Even if you stick with generic ChatGPT for brainstorming, hand off customer-facing chats to a custom agent. That's where brand trust is built or lost. Learn more about AI's impact on customer support with real performance data.

Tools That Improve ChatGPT's Factual Accuracy

OpenAI has built several features into ChatGPT specifically to reduce hallucinations. According to the OpenAI Help Center, three tools stand out:

Search lets ChatGPT pull current information from the web with inline citations. This addresses the knowledge cutoff problem directly. For time-sensitive queries, Search mode reduces hallucination rates because the model references live sources rather than relying on training data.

Code Interpreter handles calculations and data analysis with actual computation rather than prediction. For math and statistics tasks, this eliminates the pattern-guessing errors that plague regular chat mode.

Deep Research produces multi-source, cited responses for complex queries. It's slower but significantly more thorough, pulling from multiple web sources and cross-referencing claims before presenting an answer.

What to do: Match the tool to the task. Use Search for current facts, Code Interpreter for any calculation, and Deep Research for complex topics where accuracy matters more than speed. For enterprise LLM deployments, combine these with RAG for maximum reliability.

How I Compiled ChatGPT Accuracy Statistics

I compiled the accuracy data in this article from OpenAI's official system cards (which they publish alongside every major model release), Pew Research's nationally representative surveys of U.S. adults, and third-party benchmarks like MMLU and SimpleQA that are standardized across the AI industry. The usage and adoption statistics come from DemandSage, Master of Code, and Email Vendor Selection, all of which aggregate publicly reported data from OpenAI earnings calls, app store analytics, and enterprise surveys.

I verified each stat against its primary source before including it. Where sources conflicted (for example, DemandSage reports 10 million daily queries while other sources cite 2 billion), I used the more recent figure and noted the discrepancy.

The Bottom Line on ChatGPT Accuracy

ChatGPT is accurate enough for general knowledge (88.8% on MMLU) but falls to coin-flip reliability on specific factual questions (49% on SimpleQA). The accuracy gap between model versions is enormous: upgrading from GPT-3.5 to GPT-4.5 quadruples factual accuracy from 15% to 62%.

For B2B teams using ChatGPT in customer-facing flows, the raw model isn't sufficient. You need domain-specific grounding through RAG, confidence thresholds to suppress guessing, and ongoing monitoring to catch new accuracy gaps as your product changes. LiveChatAI handles all three, letting you train an AI agent on your own content and continuously improve it with built-in accuracy tools.

Start with the free plan to test accuracy on your actual support questions. The gap between generic ChatGPT and a properly trained AI agent is the difference between a 47% accuracy rate and one that matches your team's knowledge base.

FAQ About ChatGPT Accuracy

How accurate is the data in ChatGPT?

GPT-4o scores 88.8% on general-knowledge benchmarks (MMLU) but only 47% on direct factual questions (SimpleQA). Accuracy depends heavily on the question type, model version, and whether you're using tools like Search or RAG. For general topics like history and science, ChatGPT is reliable. For specific facts, dates, and citations, verify independently.

Can I trust ChatGPT answers?

For brainstorming, concept explanations, and creative work, yes. For factual claims, citations, or anything customer-facing, not without verification. About 14% of ChatGPT-generated citations actually point to real sources. I treat every ChatGPT output as a first draft that needs human review before it reaches a customer. Tools like plagiarism and accuracy checkers can help catch issues before publishing.

What is ChatGPT's hallucination rate?

It varies by model. On the SimpleQA benchmark: o3 hallucinates 51% of the time, GPT-4o at 44%, and o4-mini at 79%. On person-related questions, the rates improve slightly (o3 at 33%, o4-mini at 48%). These rates measure controlled test conditions. Real-world hallucination rates with ambiguous prompts are likely higher.

How often is ChatGPT wrong?

On factual questions, ChatGPT is wrong about 1 in 3 times on average with its best models. The range spans from 37% wrong (GPT-4.5) to ~80% wrong (GPT-3.5 Turbo). The most accurate current model for factual Q&A is GPT-4.5 at 62% correct.

How do I improve ChatGPT accuracy?

Use the newest model, write specific prompts, break complex questions into steps, feed context upfront, and use RAG to ground answers in your own data. For customer-facing use, deploy a domain-tuned AI support agent instead of raw ChatGPT.

Is ChatGPT accurate for math?

For basic arithmetic and standard formulas, GPT-4o is reliable. For multi-step math problems, error rates climb. Use Code Interpreter mode for any calculation that matters, as it runs actual computations instead of predicting answers. For complex statistical analysis, a comparison between models shows Gemini handles deep math chains better than GPT-4o.

Related reading:

• Chatbot vs ChatGPT: In-Depth Comparison

• Does ChatGPT Save Data? How to Control It