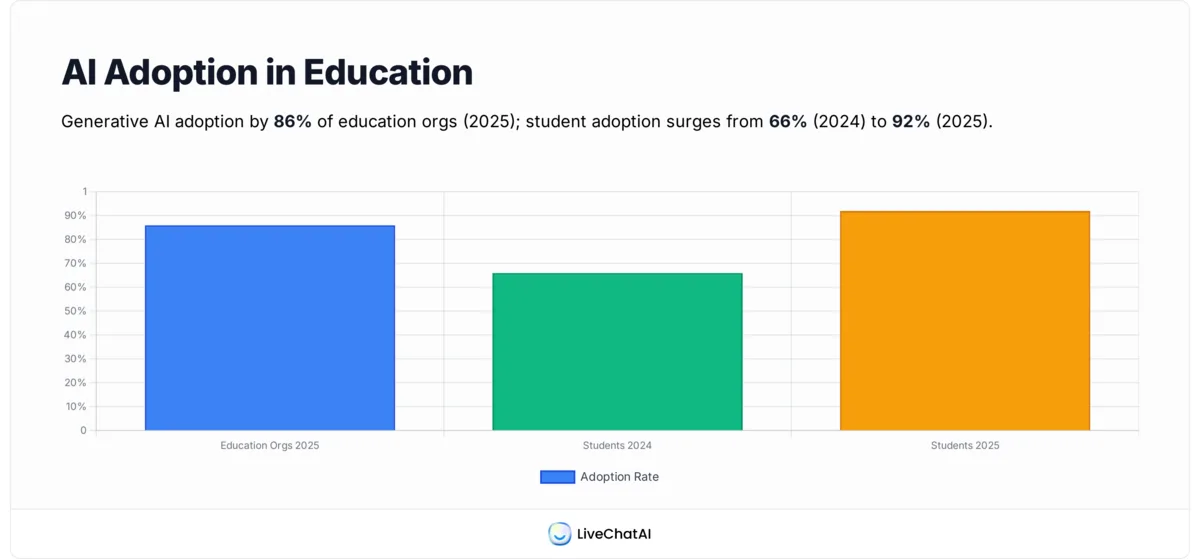

Generative AI moved into classrooms faster than any technology I've covered in eight years of writing about SaaS. By 2025, 86% of education organizations reported using generative AI, the highest adoption rate of any sector tracked by Microsoft. A machine learning chatbot in educational institutions is the workhorse behind that shift, fielding the questions, applications, and tutoring prompts that used to clog inboxes and front desks. This guide explains how it works, where it pays off, and how to roll one out without tripping FERPA.

A machine learning chatbot in educational institutions is a conversational AI system that uses natural language processing and continuous learning loops to answer student, faculty, and parent questions about academics, admissions, financial aid, and campus services. Unlike rule-based bots, it improves with every conversation and can be trained on a school's own course catalogs, syllabi, and policy documents.

What Is a Machine Learning Chatbot in Educational Institutions?

A machine learning chatbot in educational institutions is conversational software that is trained on a school's own knowledge base (course catalogs, syllabi, advising notes, financial aid rules, and student handbooks) and responds to free-form questions in natural language. It pulls answers from that material, classifies what the user is actually asking, and generates a reply in the user's preferred tone and language. Then it keeps learning. Every conversation feeds back into the model, so misses become rarer over time.

The distinction from older rule-based bots matters. A rule-based bot answers "What's the deadline for fall registration?" only if a developer wrote that exact phrase into a decision tree. Ask "When do I have to sign up for fall classes?" and it stalls. A machine learning chatbot recognizes both as the same intent, finds the registration page in its training corpus, and answers in plain English. The mechanism stacks three pieces: NLP for parsing the question, intent classification for matching it to a known concept, and a generation layer (often retrieval-augmented) that drafts the reply from approved school content.
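To make the intent-matching idea concrete, here is a minimal sketch. It is a toy stand-in, not how a production bot does it: it compares word counts with cosine similarity, whereas a real system would typically use learned embeddings or a trained classifier. The intent names and example phrasings in `INTENT_EXAMPLES` are hypothetical.

```python
import re
from collections import Counter
from math import sqrt

# Hypothetical example phrasings per intent. A real system would learn
# these from logged questions rather than a hand-written dictionary.
INTENT_EXAMPLES = {
    "registration_deadline": [
        "what is the deadline for fall registration",
        "when do i have to sign up for classes",
        "last day to register for courses",
    ],
    "tuition_billing": [
        "how do i pay my tuition bill",
        "when is my tuition payment due",
    ],
}


def tokens(text):
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(count * b[word] for word, count in a.items())
    norm = sqrt(sum(c * c for c in a.values())) * sqrt(sum(c * c for c in b.values()))
    return dot / norm if norm else 0.0


def classify(question):
    """Return (intent, score) for the closest example phrasing."""
    query = Counter(tokens(question))
    best_intent, best_score = None, 0.0
    for intent, examples in INTENT_EXAMPLES.items():
        for example in examples:
            score = cosine(query, Counter(tokens(example)))
            if score > best_score:
                best_intent, best_score = intent, score
    return best_intent, best_score


for q in ("What's the deadline for fall registration?",
          "When do I have to sign up for fall classes?"):
    print(q, "->", classify(q)[0])
```

Both phrasings land on the same intent even though they share few words with each other, which is the behavior a decision tree keyed on exact phrases cannot reproduce.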

Adoption tracks the upgrade curve. Per a Codegnan analysis of 2025 student tech surveys, student AI use jumped from 66% in 2024 to 92% in 2025, the largest year-over-year rise the firm has measured. That kind of demand is why universities have stopped piloting and started procuring. Schools also like that ML chatbots scale without proportional headcount. One trained model can field tens of thousands of questions a week without overtime, and unlike a hired staffer it doesn't forget the new add/drop policy two weeks after onboarding.

How ML Chatbots Work in Education

The pipeline has four moving parts: ingestion, intent classification, response generation, and a learning loop. Ingestion is where you load the school's source material into the system: course catalogs, syllabi, FAQs from the registrar, financial aid award letters, IT helpdesk articles, and academic policy PDFs. Better-built bots index this with embeddings so the system understands semantic similarity, not just keyword overlap.

When a student asks something, the model classifies the intent (registration, billing, advising, IT, mental health, etc.), retrieves the most relevant chunks of school content, and uses a generation layer to draft an answer grounded in those chunks. Retrieval-augmented generation (RAG) is what keeps responses tied to your actual policies instead of hallucinating internet trivia. The learning loop closes the cycle: every flagged answer, escalation to a human, or thumbs-down rating becomes training data for the next model refresh. A student support desk built on this pattern, similar to an AI chatbot for education running on a school site, gets measurably better with every academic term it operates.
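The retrieval and learning-loop steps can be sketched in a few lines. This is an illustration under stated assumptions: the chunks, file names, and policy wording in `CHUNKS` are placeholders, and the scoring uses simple word overlap (Jaccard similarity) where a real deployment would use embedding search. The generation step is omitted.

```python
import re

# Placeholder policy chunks; sources and wording are invented for illustration.
CHUNKS = [
    {"source": "registrar-faq.pdf",
     "text": "Fall registration closes on the last Friday of August."},
    {"source": "billing-policy.pdf",
     "text": "Tuition payment is due two weeks before the first day of classes."},
    {"source": "it-helpdesk.html",
     "text": "Reset your campus password from the account portal."},
]


def words(text):
    """Return the set of lowercase words in the text."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question, chunks, k=1):
    """Rank chunks by Jaccard word overlap with the question; return the top k."""
    query = words(question)
    scored = []
    for chunk in chunks:
        chunk_words = words(chunk["text"])
        union = query | chunk_words
        score = len(query & chunk_words) / len(union) if union else 0.0
        scored.append((score, chunk))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Learning loop: thumbs-down answers are queued for the next content
# review or model refresh instead of being discarded.
review_queue = []


def record_feedback(question, chunk, helpful):
    """Queue unhelpful answers, with their source, for later review."""
    if not helpful:
        review_queue.append({"question": question, "source": chunk["source"]})


score, top = retrieve("When does fall registration close?", CHUNKS)[0]
record_feedback("When does fall registration close?", top, helpful=False)
print(top["source"], review_queue)
```

Keeping the source name attached to every retrieved chunk is what later lets the bot cite where an answer came from and lets reviewers trace a bad answer back to the document that caused it.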

Benefits of ML Chatbots for Students and Teachers

The benefits show up on both sides of the lectern. Students get faster, more personal answers. Teachers and staff get back hours that used to disappear into repetitive Q&A. Below are the seven payoffs schools mention most:

1. 24/7 student support: Office hours don't cover the 11 p.m. panic before a deadline. An ML chatbot does. Students can ask about a missing transcript, a course override, or a financial aid disbursement at any hour and get a grounded answer pulled from official school documents. This is the single most cited benefit in the pilots I've reviewed.

2. Personalized learning paths: Because the bot tracks a student's history (courses taken, topics they've struggled with, support tickets opened), it can recommend tailored resources instead of generic ones. A first-year stuck on linear algebra gets different practice prompts than a junior reviewing for a finance midterm.

3. Reduced administrative load on teachers: Routine questions about syllabi, due dates, late-policy rules, and grading rubrics often consume half of an instructor's email week. Routing those to a bot trained on the syllabus pushes that workload into a self-service channel, freeing faculty for office hours that actually matter. A Gallup early-2026 survey of U.S. teachers found that those using AI weekly save the equivalent of a full workday each week.

4. Multilingual support: Many modern ML chatbots handle 50+ languages out of the box. For schools enrolling international students, or for multilingual K-12 districts, that means a parent can ask about field trip permission in Spanish or Mandarin and get an accurate reply without a human translator on call.

5. Faster onboarding for new students: Orientation week is information overload. A chatbot embedded in the new-student portal can answer "How do I activate my campus card?" or "Where do I get my parking permit?" the moment a student asks instead of forcing them to email three offices.

6. Data-driven insights for educators: Aggregate chatbot transcripts (anonymized) reveal what's confusing students at scale. If 40% of registration questions are about waitlist mechanics, that's a signal to fix the website copy or run a targeted explainer video.

7. Cost savings vs human support staff: Smaller schools can rarely afford 24/7 human coverage. A well-trained bot deflects the routine 60–80% of inquiries, letting limited staff focus on edge cases. The math is what's pulling community colleges and regional universities into the market alongside flagship R1s.
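The data-driven insights point (item 6) boils down to counting. As a rough sketch, assuming each anonymized transcript has already been tagged with a topic label (the labels and counts below are made up):

```python
from collections import Counter


def topic_share(labels):
    """Return each topic's share of the total, most common topic first."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {topic: count / total for topic, count in counts.most_common()}


# Hypothetical topic labels from ten anonymized registration conversations.
registration_topics = ["waitlist"] * 4 + ["holds"] * 3 + ["deadlines"] * 3

shares = topic_share(registration_topics)
print(shares)
```

A topic that dominates the shares is the signal to fix the website copy or record an explainer, as described above.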

8 Use Cases for Machine Learning Chatbots in Education

Here's where the abstract value turns concrete. The eight use cases below are the deployments I see most often in the field, ordered roughly by ease of implementation. Each one ships value within a single semester if you scope it right.

1. Admissions and Enrollment Help Desk

Prospective students ask the same 30 questions on repeat: application deadlines, required documents, transfer credit policies, tuition deposits, housing applications. An admissions chatbot fields those at any hour, freeing counselors to actually counsel. It's the most common starter project because the source material (the admissions website plus a few policy PDFs) is already structured.

1. Map the top 50 questions: Pull six months of admissions email and live-chat transcripts. The Pareto pattern is real: the top 50 questions cover roughly 80% of volume.

2. Centralize the source material: Build a single document set the bot trains on, covering application requirements, deadlines by program, fee schedules, and financial aid basics. Conflicting answers across pages are the #1 cause of bot errors here.

3. Wire a human handoff: Anything the bot can't answer with confidence goes to an admissions counselor with full conversation context. Don't make the student re-explain.

4. Pilot during peak season: Launch 6 weeks before application deadlines so you have meaningful volume to learn from.
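Before committing to a question list, it is worth checking the Pareto claim from step 1 against your own transcripts. A small sketch, assuming you have already grouped past inquiries into question types with counts (the figures below are invented):

```python
from collections import Counter


def questions_needed(question_counts, target=0.8):
    """Return how many of the most frequent questions reach the target share of volume."""
    total = sum(question_counts.values())
    if total == 0:
        return 0
    covered = 0
    ranked = Counter(question_counts).most_common()
    for rank, (_, count) in enumerate(ranked, start=1):
        covered += count
        if covered / total >= target:
            return rank
    return len(ranked)


# Invented counts of past admissions inquiries by question type.
inquiries = {"deadline": 50, "documents": 30, "deposit": 10, "housing": 6, "transfer": 4}

print(questions_needed(inquiries))
```

If the number that comes back is far above 50 on real data, the source material probably needs a wider first pass than the top-50 list suggests.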

Georgia State's Pounce chatbot, the canonical case in this category, reduced summer melt (admitted students who don't enroll) by 21% according to the university's own reporting. That's tens of thousands of additional tuition dollars retained per cohort, with the chatbot handling questions that used to die in voicemail. For a deeper look at this kind of frontline pattern, our roundup of real-world chatbot use cases covers analogous deployments outside education.

2. 24/7 Student Q&A on Coursework

Once a student is enrolled, the questions don't stop. They shift to "What's the late penalty?", "Can I get an extension?", and "Where's the reading list?" An ML chatbot trained on the LMS contents (Canvas, Moodle, Blackboard) answers all of these from the actual syllabus rather than a faculty member's memory of what they wrote in August.

1. Pull from the LMS via API: Most major LMS platforms expose syllabi, due dates, and announcements through APIs. Connect the bot directly so it never goes stale.

2. Scope to one course or program first: A pilot with 3-5 instructor volunteers beats a campus-wide rollout that nobody owns.

3. Add a "talk to instructor" button: The bot's job is to answer policy questions, not replace teaching. Make escalation one click away.

An Ohio State review of chatbots noted that Labadze et al. (2023) found students appreciated chatbot support but still preferred human guidance for complex issues, so positioning matters. Frame the bot as a 24/7 syllabus reader, not a substitute for professor office hours, and the adoption curve is steeper. Our explainer on FAQ chatbots walks through the same architecture pattern in more depth.

3. Personalized Tutoring and Practice

This is the use case furthest along the personalization curve. ML tutoring chatbots generate practice problems, walk students through solutions step by step, and adapt difficulty based on past performance. Khanmigo (Khan Academy's AI tutor) and Carnegie Learning's MATHia are the standard reference points.

1. Pick one subject to start: Math and intro programming have the cleanest right/wrong feedback signals, which makes the model easier to evaluate.

2. Ground the bot in your course materials: Don't let it invent problems off the open web. Train on textbook content, problem banks, and worked examples your instructors actually approve.

3. Track per-student progress: The bot's value compounds when it remembers what a student got wrong last week and surfaces it again this week.

4. Loop in faculty for content review: Have instructors spot-check 50 bot-generated explanations per term to keep accuracy honest.

A Stanford HAI 2026 AI Index report on the state of AI in education highlights tutoring as the single highest-impact area for measured learning gains, particularly when the bot is paired with a human teacher rather than positioned as a replacement. Schools that combine the two see the steepest improvement curves on standardized assessments. For more on the personalization architecture, see our guide to creating custom AI chatbot characters, which covers the same persona and grounding work.

4. Course Recommendation and Career Guidance

Pre-registration advising is a perennial bottleneck. Advisors at large public universities often carry caseloads of 300+ students, which means most students get 15 minutes of advising per term, max. An ML chatbot can pre-screen the routine recommendations ("based on your major and completed credits, here are the 6 courses you can take next semester") and reserve human advisors for the harder conversations.

1. Connect to the SIS: The bot needs access to the student's transcript, declared major, and degree audit data to give useful recommendations. Read-only API access is enough.

2. Build the recommendation logic with advisors, not just engineers: Encode the implicit knowledge advisors use ("don't take orgo and physics 2 the same semester") into the bot's prompt rules.

3. Always surface the human option: First-gen students especially benefit from a real conversation. Don't bury the "book an appointment" link.
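Steps 1 and 2 can be sketched as a prerequisite check plus an advisor-written rule list. Everything here is hypothetical: the course codes, the prerequisite map, and the single "avoid together" rule stand in for real degree-audit data from the SIS and for whatever rules your advisors supply.

```python
# Hypothetical catalog: each course maps to the set of prerequisites it requires.
PREREQS = {
    "CHEM 201": {"CHEM 101"},
    "PHYS 102": {"PHYS 101"},
    "MATH 201": {"MATH 101"},
    "BIOL 301": {"BIOL 101", "CHEM 201"},
}

# Advisor knowledge encoded as data: course pairs that should not share a semester.
AVOID_TOGETHER = [{"CHEM 201", "PHYS 102"}]


def eligible_courses(completed):
    """Courses not yet taken whose prerequisites are all completed."""
    return sorted(
        course for course, prereqs in PREREQS.items()
        if course not in completed and prereqs <= completed
    )


def overload_warnings(planned):
    """Advisor-flagged combinations that appear in the planned schedule."""
    planned_set = set(planned)
    return [sorted(pair) for pair in AVOID_TOGETHER if pair <= planned_set]


completed = {"CHEM 101", "PHYS 101", "MATH 101"}
print(eligible_courses(completed))
print(overload_warnings(["CHEM 201", "PHYS 102"]))
```

Keeping the advisor rules in a plain data structure, rather than buried in prompt text, makes it easier for advisors to review and update them each term.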

A 2026 Engageli analysis of EdTech adoption notes that institutions deploying advising chatbots cut average wait times for academic guidance from 9 days to under 24 hours, with student satisfaction scores rising by double digits in pilot programs. The bot doesn't replace the advisor; it triages the easy stuff so the advisor can spend more time on the students who actually need depth.

5. Financial Aid and Scholarship Navigator

Financial aid is the area where students struggle most and ask the least, often because they don't know what they don't know. An ML chatbot in the financial aid portal can demystify FAFSA timelines, explain award letter line items, and flag scholarships the student is actually eligible for based on their profile.

1. Train the bot on award letter formats: Students get an award letter and have no idea what "Direct Subsidized Loan" or "Parent PLUS" means. Have the bot translate it into plain English on demand.

2. Build a scholarship matching index: Tag every internal and external scholarship with eligibility criteria so the bot can match a student to opportunities they qualify for.

3. Provide deadline reminders proactively: The bot can ping students 14 and 7 days before FAFSA renewal, scholarship deadlines, or appeal windows.
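Steps 2 and 3 are mostly bookkeeping, which a short sketch can show. The scholarship names, criteria fields, and the sample deadline are all invented for illustration; a real index would carry whatever eligibility fields your financial aid office tracks.

```python
from datetime import date, timedelta

# Invented scholarship index; "majors": None means any major is eligible.
SCHOLARSHIPS = [
    {"name": "First-Gen Grant", "min_gpa": 3.0, "majors": None, "first_gen_only": True},
    {"name": "STEM Merit Award", "min_gpa": 3.5, "majors": {"physics", "math"}, "first_gen_only": False},
]


def matches(student, scholarship):
    """True if the student meets every tagged criterion."""
    if student["gpa"] < scholarship["min_gpa"]:
        return False
    if scholarship["majors"] is not None and student["major"] not in scholarship["majors"]:
        return False
    if scholarship["first_gen_only"] and not student["first_gen"]:
        return False
    return True


def eligible_scholarships(student):
    """Names of all scholarships the student qualifies for."""
    return [s["name"] for s in SCHOLARSHIPS if matches(student, s)]


def reminder_dates(deadline, days_before=(14, 7)):
    """Dates on which to ping the student ahead of a deadline."""
    return [deadline - timedelta(days=d) for d in days_before]


student = {"gpa": 3.2, "major": "history", "first_gen": True}
print(eligible_scholarships(student))
print(reminder_dates(date(2026, 6, 30)))
```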

The stakes here are real money. Per DemandSage's 2026 EdTech statistics roundup, the global AI education market is on a path that mirrors the urgency of solving these access gaps, with adoption strongest in financial-aid-adjacent workflows where institutions can show retention dollars saved. For low-income students especially, a bot that catches a missed FAFSA deadline can be the difference between staying enrolled and dropping out.

6. Faculty Administrative Assistant

Faculty drown in administrative work. An ML chatbot trained on department policies, room scheduling rules, IT help desk articles, and travel reimbursement procedures can answer the questions that used to mean a 20-minute Slack thread with the department coordinator. This is one of the most underrated use cases because faculty rarely advocate for it themselves, but they benefit massively.

1. Survey faculty for top pain points: Run a 5-minute survey asking, "What administrative question do you ask most often?" The answers will surprise you.

2. Train on policy documents, not just FAQs: Faculty want to see the actual policy text, not a paraphrase. Build the bot to cite the source document for every answer.

3. Integrate with calendar and email: A bot that can also check room availability or pull the next faculty meeting agenda earns adoption faster than a pure Q&A bot.

An OSU Arts and Sciences review noted that faculty time saved on admin questions correlates directly with reported teaching satisfaction in early-career instructors. Less time chasing a parking pass means more time on lesson planning. For a parallel pattern in customer support workflows, our guide to chatbot automation walks through the same triage logic applied to internal service desks.

7. Mental Health and Wellness Triage (with Human Handoff)

This is the most sensitive use case and the one where you absolutely cannot ship a bot without a clinical advisor in the room. Done right, an ML chatbot can be a 24/7 first point of contact that screens for severity, routes urgent cases to a counselor or crisis line immediately, and provides peer-reviewed self-help resources for lower-acuity needs.

1. Co-design with the counseling center: Clinical staff must approve every script, escalation trigger, and resource link. This is non-negotiable.

2. Use validated screening protocols: Tools like PHQ-2 for depression and GAD-2 for anxiety are short, well-studied, and appropriate for chatbot delivery. Don't invent your own questions.

3. Hard-wire crisis escalation: Any mention of self-harm or suicide triggers an immediate handoff to a human counselor or the 988 Suicide & Crisis Lifeline. Test this edge case relentlessly.

4. Be transparent about the bot's limits: Open every conversation with "I'm an AI assistant, not a therapist". Students appreciate the honesty and it sets correct expectations.
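The routing order in steps 2 and 3 can be sketched as follows. Treat this strictly as an illustration of control flow, not a clinical tool: the phrase list is a tiny placeholder, keyword matching alone will miss many crisis messages, and the score cutoff shown (a PHQ-2 total of 3 or more, a commonly cited threshold for a positive screen) is an assumption that your counseling center must confirm or replace. The route names are hypothetical.

```python
# Placeholder phrases only; clinical staff must define and maintain the real list.
CRISIS_PHRASES = ("suicide", "kill myself", "self-harm", "hurt myself")

# Assumed cutoff for a positive PHQ-2 screen; confirm with clinical staff.
PHQ2_CUTOFF = 3


def route(message, phq2_answers=None):
    """Decide where a wellness conversation goes next.

    phq2_answers, if given, holds the two PHQ-2 item scores (each 0 to 3).
    The crisis check always runs first, regardless of any screening score.
    """
    text = message.lower()
    if any(phrase in text for phrase in CRISIS_PHRASES):
        return "crisis_handoff"
    if phq2_answers is not None:
        if len(phq2_answers) != 2 or any(a not in (0, 1, 2, 3) for a in phq2_answers):
            raise ValueError("PHQ-2 expects two answers scored 0 to 3")
        if sum(phq2_answers) >= PHQ2_CUTOFF:
            return "counselor_referral"
    return "self_help_resources"


print(route("I keep thinking about suicide"))
print(route("Feeling down lately", [2, 1]))
print(route("Stressed about exams", [1, 0]))
```

The design choice worth copying is the ordering: the crisis check sits ahead of everything else so that no screening result or missing answer can delay a handoff.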

An OSU College of Arts and Sciences article on the rise of chatbots in higher education noted that wellness chatbots, when paired with rapid escalation to licensed counselors, expanded after-hours support coverage at institutions that previously had none. The bot is not the therapist. It's the night-shift triage nurse that gets students to the right human, faster.

8. Library and Research Support

Reference librarians do brilliant work, but they can't be online at 3 a.m. when a junior is panicking over a research paper due at 9. An ML chatbot trained on the library's holdings, database guides, and citation manuals handles the routine "how do I find a peer-reviewed source on X?" questions and frees librarians for the genuinely complex consultations.

1. Index your subject guides: Most academic libraries already maintain LibGuides or similar topic-by-topic resource lists. Feed those to the bot as primary training data.

2. Add a citation generator: Students burn hours wrestling with APA, MLA, Chicago, etc. A bot that drafts citations from a DOI or ISBN is an easy win.

3. Build a "find a database" flow: Walk the student through subject area, source type, and date range to recommend the right database. This is where the bot beats a static guide.

The University of Rochester's library deployed a chatbot named Carlson, built with IBM Watson, to handle book lookups, study room reservations, and basic research questions. Usage data from similar deployments at academic libraries shows roughly 60% of inbound questions resolved by the bot without librarian involvement, with the librarian then handling the deeper consultations the bot triaged. For students, the difference is getting an answer in 20 seconds versus waiting for the reference desk to open at 9 a.m.

Examples of ML Chatbots in Education

Theory aside, here are five real, named ML chatbot deployments in education that you can study, demo, or even adapt patterns from. These aren't vendor-pitch examples; they're projects with published results.

Pounce (Georgia State University)

Pounce is the most cited education chatbot success story for a reason. Built originally with AdmitHub and now deeply integrated into Georgia State's student systems, it handles everything from admissions follow-up to financial aid reminders to academic probation outreach. The university has published outcome data showing a 21% reduction in summer melt and measurable retention gains in the populations most likely to drop out. The 2026 iteration uses generative AI for richer conversational flow while keeping the original guardrails around financial-aid accuracy.

Khanmigo (Khan Academy)

Khanmigo is Khan Academy's AI tutor, built on top of GPT-4 (and successors) with significant pedagogical scaffolding. Instead of giving direct answers, it nudges students toward solutions through Socratic questioning, mirroring the way a strong human tutor works. It's deployed at scale across K-12 districts in pilot agreements and is the most-studied "tutor as bot" example in 2025-2026 research literature.

Squirrel AI (China)

Squirrel AI is the largest AI tutoring deployment in the world by user count, operating across thousands of physical learning centers in China. Its ML model breaks subjects into thousands of micro-skills and adapts the next problem based on per-student mastery. Western researchers cite Squirrel AI as the strongest existing evidence that adaptive ML tutoring can outperform traditional classroom instruction at scale, particularly in mathematics.

Ivy Chatbot

Ivy is a higher-education chatbot platform purpose-built for university recruitment, admissions, and student services. Schools like the University of Oklahoma and Quinnipiac University have deployed Ivy on their admissions sites to handle prospective student Q&A. It's a useful reference architecture for institutions that want a turnkey vendor solution rather than building custom on top of an LLM API.

Carlson (University of Rochester)

Carlson is the University of Rochester's library chatbot, originally built with IBM Watson and named after the Carlson Science and Engineering Library. It handles book lookups, study room bookings, citation help, and research database recommendations. The model has been retrained multiple times since launch as the underlying technology improved, and it's a good example of a long-running, narrowly scoped chatbot that earned its keep through consistent, focused utility rather than splashy features.

Challenges and Solutions for Educational Chatbots

Every honest writeup of ML chatbots in education includes the hard parts. Here are the five challenges that derail more pilots than any others, and what to do about each.

1. Data privacy (FERPA, COPPA, GDPR): Student data is regulated. FERPA governs U.S. educational records, COPPA covers under-13 users, and GDPR applies to any European student. A chatbot vendor that can't sign a Data Processing Agreement and prove SOC 2 compliance is a non-starter. Insist on data residency commitments, encryption in transit and at rest, and explicit guarantees that conversations won't be used to train third-party models.

2. Hallucination risk on academic content: Generative models can invent citations, misstate policy, or confidently give a wrong deadline. The fix is grounding: every answer must trace back to a source document, and the bot should refuse (rather than guess) when confidence drops. Retrieval-augmented generation with strict source citation cuts hallucinations dramatically. For low-stakes questions you might tolerate 3% error. For financial aid or registration, you need closer to zero.

3. Bias in training data: Models trained on internet text inherit biases that show up as worse performance for non-native English speakers, lower-income students, or students from underrepresented backgrounds. Mitigation requires testing the bot's responses across demographic personas before launch and auditing transcripts quarterly for differential treatment. Don't assume the vendor has done this work; ask for the audit report.

4. Faculty resistance and adoption: Some faculty see chatbots as a step toward automating teaching. The honest answer is: a chatbot can't replace a teacher, but it can replace the email mountain that keeps teachers from teaching. Co-design pilots with willing faculty volunteers, share the transcripts so they see the value, and never frame the bot as a productivity-extractor for instructors.

5. Equity and digital divide: A chatbot that requires reliable internet and a smartphone advantages students who already have those things. Pair the chatbot with phone, in-person, and email channels so no student is forced into the digital channel. Multilingual support, voice input, and mobile-first design close the gap further but don't eliminate it.
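The "refuse rather than guess" rule from the hallucination item (item 2) can be expressed as a gate in front of the reply. In this sketch the thresholds, topic names, and the `confidence` score are all assumptions; how a given platform estimates confidence varies, and the numbers below are placeholders rather than recommendations.

```python
# Placeholder thresholds: stricter for topics where a wrong answer costs money or enrollment.
MIN_CONFIDENCE = {"low_stakes": 0.6, "high_stakes": 0.9}
HIGH_STAKES_TOPICS = {"financial_aid", "registration"}


def respond(topic, draft_answer, source, confidence):
    """Send the draft only if it has a source and clears the topic's confidence bar."""
    tier = "high_stakes" if topic in HIGH_STAKES_TOPICS else "low_stakes"
    if source is None or confidence < MIN_CONFIDENCE[tier]:
        return {
            "action": "handoff",
            "text": "I'm not sure about that one, so I'm passing you to a staff member.",
        }
    return {"action": "answer", "text": f"{draft_answer} (Source: {source})"}


print(respond("dining", "The dining hall opens at 7 a.m.", "dining-hours.pdf", 0.7))
print(respond("financial_aid", "Your aid disburses next week.", "aid-calendar.pdf", 0.7))
```

The same draft confidence produces an answer for a dining question and a handoff for a financial aid question, which mirrors the different error tolerances described above.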

How to Choose the Right ML Chatbot for Your Institution

Vendor selection is where most pilots succeed or stall. Six criteria matter more than the rest.

1. Education-specific track record: Generic enterprise chatbots can be retrofitted, but vendors with named higher-ed or K-12 customers and published case studies de-risk the project significantly. Ask for three references at peer institutions and actually call them.

2. Integration with your student information system and LMS: A chatbot that can't read from your SIS or LMS is a glorified FAQ page. Confirm pre-built connectors for Banner, Workday Student, PeopleSoft, Canvas, Blackboard, or whatever you run. Custom integrations add 3-6 months to launch.

3. Compliance and security posture: SOC 2 Type II, FERPA-aligned DPA, COPPA support if you serve K-12, and GDPR readiness for international students. Get the security questionnaire answered before signing anything.

4. Human handoff quality: The bot should escalate to a human with full conversation context, not dump the student into a fresh ticket queue. Test this in the demo: ask a complex question, then ask to talk to a person. If the handoff is clunky, the production experience will be worse.

5. Analytics and continuous improvement tools: You need to see what the bot is being asked, where it's failing, and how often it escalates. Vendors that give you transcript-level analytics (with appropriate privacy controls) are doing this right.

6. Total cost of ownership over 3 years: List price is one line item. Add implementation fees, integration work, ongoing content curation, and the staff time to monitor the bot. A "cheap" vendor that requires 0.5 FTE to maintain may cost more than a pricier one that runs itself.
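The total-cost comparison in item 6 is simple arithmetic, sketched below. Every figure is made up for illustration, and the formula assumes only three cost lines (a one-time implementation fee, an annual license, and staff time); add integration and content-curation lines as your quotes require.

```python
def three_year_tco(annual_license, implementation_fee, fte_fraction, fte_annual_cost, years=3):
    """One-time implementation fee plus yearly license and staff-time costs."""
    return implementation_fee + years * (annual_license + fte_fraction * fte_annual_cost)


# Made-up figures: a low list price that needs half a staff member to maintain...
cheap = three_year_tco(annual_license=10_000, implementation_fee=5_000,
                       fte_fraction=0.5, fte_annual_cost=80_000)

# ...versus a higher list price that needs no dedicated staff time.
pricier = three_year_tco(annual_license=40_000, implementation_fee=15_000,
                         fte_fraction=0.0, fte_annual_cost=80_000)

print(cheap, pricier)
```

With these placeholder numbers the "cheap" option costs more over three years, because the staff time outweighs the license savings.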

Future Trends in AI Chatbots for Education in 2026

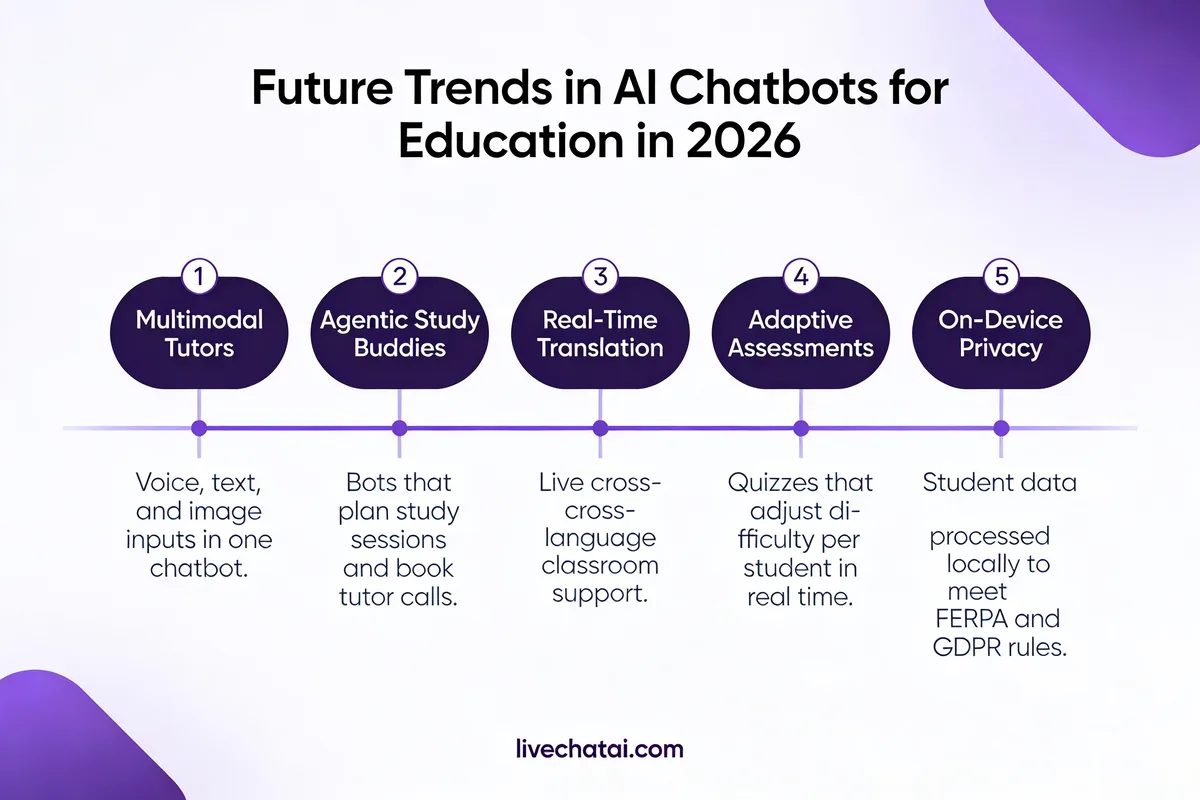

The pace of change is the story. What was a research curiosity in 2023 is shipping in pilots now, and what's in pilots now will be standard procurement by 2027. Five trends define the trajectory.

1. Agentic learning assistants: Chatbots are moving from "answer my question" to "complete this task for me". An agentic ML chatbot can register the student for next semester's courses, request a transcript, and book a counseling appointment, all in one conversation, taking actions across multiple systems on the student's behalf. Expect this to be the headline feature in 2026 procurement RFPs.

2. Multimodal voice and image tutoring: Voice-first chatbots and image input (a student photographs a calculus problem and asks for help) are becoming table stakes for tutoring deployments. The pedagogical research suggests voice interaction reduces the friction that keeps reluctant students from asking for help in the first place.

3. LMS-native integrations: Canvas, Blackboard, Moodle, and D2L are all building chatbot interfaces directly into the LMS rather than requiring a separate portal. The 2026 inflection point is when "LMS-native" stops being a vendor feature and starts being the assumed default.

4. AI tutors with emotional intelligence: Sentiment analysis layered into tutoring bots lets them recognize when a student is frustrated and adjust the response by slowing down, offering encouragement, or routing to a human. Stanford and Carnegie Mellon researchers are publishing actively on this, and early commercial implementations are already in pilot.

5. Hyper-personalization with retrieval-augmented generation: Every student's chatbot becomes a function of their own data: transcripts, advising history, learning preferences, and support tickets. RAG is the technical mechanism, but the practical effect is that the bot stops feeling generic and starts feeling like it actually knows the student. The 2026 AI Index from Stanford HAI flags this as the area where the most measurable learning gains are appearing.

Pilot Your First Education Chatbot This Quarter

If you're an EdTech decision-maker reading this, here's where I'd start. First, pick one narrow use case (admissions Q&A, course registration help, and library reference work are all good candidates); don't try to build a campus-wide bot in the pilot phase. Second, scope the source material you'll train on and the team that will own ongoing curation, because the bot is only as good as the documents behind it. Third, write the human-handoff and crisis-escalation protocols before you write the bot, especially for any student-facing deployment. Get those three right and a meaningful pilot ships in a single semester. Skip them and you'll spend a year debating procurement while your students keep waiting on hold.

Frequently Asked Questions

What are examples of machine learning chatbots in educational institutions?

The most-cited examples are Georgia State University's Pounce (admissions and student services, with documented retention gains), Khanmigo from Khan Academy (a Socratic AI tutor for K-12), Squirrel AI in China (the largest adaptive tutoring deployment by user count), Ivy Chatbot (a higher-ed platform used at universities like Oklahoma and Quinnipiac), and the University of Rochester's Carlson library chatbot. Each represents a different design pattern (admissions, tutoring, adaptive practice, vendor platform, and library reference), and each has published or publicly visible results worth studying before you scope your own deployment.

What is the best machine learning chatbot for educational institutions?

"Best" depends on what you're trying to solve. For admissions and enrollment workflows at U.S. universities, Element451 and Mainstay (the company that grew out of AdmitHub and Pounce) have the most named higher-ed customers. For tutoring, Khanmigo and Carnegie Learning's MATHia lead in K-12. For institutions that want to build on top of a general LLM with their own content, platforms like LiveChatAI's AI chatbot for education let you train on your own documents and ship in days rather than quarters. Match the vendor to the use case, not the brand name.

What are the opportunities and challenges of chatbots in education?

Opportunities cluster around scale, personalization, and access: a single chatbot can serve thousands of students 24/7, adapt to individual learning paths, and dramatically expand support coverage at institutions with limited staff budgets. Challenges cluster around trust and equity: data privacy under FERPA and GDPR, hallucination risk on academic content, bias in training data, faculty adoption resistance, and the digital divide that keeps the chatbot's benefits unevenly distributed. The institutions getting the most out of ML chatbots take the challenges as seriously as the opportunities and bake compliance, accuracy, and equity into procurement criteria from day one.
