Customer support teams are getting squeezed from both sides this year. Ticket volumes keep climbing, headcount budgets don't, and leadership wants AI on the roadmap yesterday. The shift to AI agents stopped being optional somewhere around mid-2025: according to Master of Code's research, 95% of customer interactions are forecast to be handled by AI by the end of this year. If you're searching for how to create an AI agent for customer support without a six-month engineering project, this guide walks through the path I actually follow.

To create an AI agent for customer support, map your top intents and escalation rules, pick a no-code platform that supports retrieval-augmented generation, train it on your help center and ticket history, connect it to your CRM and helpdesk, then run a 30-day pilot. Most teams ship a working agent in two to four weeks and resolve 60-70% of routine tickets without a human.

What Is a Customer Service AI Agent?

A customer service AI agent is software that handles support conversations end-to-end. It reads a question in any channel, pulls the relevant facts from your documentation, decides whether it can answer, performs an action if needed (refund, order lookup, ticket update), and escalates when it isn't confident. The reasoning loop is what makes it an agent rather than a chatbot.

Underneath, four pieces work together. A large language model handles the natural language part — reading the question and writing a reply. A retrieval system, usually built using retrieval-augmented generation, pulls trusted snippets from your help docs so the model isn't guessing. A tool-calling layer lets the agent run functions in your stack: query an order, update a helpdesk ticket, post to Slack. And an escalation policy decides when to hand off to a human.
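To make the loop concrete, here is a minimal sketch of three of those pieces (retrieval, generation, escalation) wired together. Everything in it is a stand-in I made up for illustration: `DOCS` is a toy knowledge base, `retrieve` uses keyword matching where a real agent would use embedding search, and `generate` is a placeholder for the LLM call. Tool-calling is left out to keep it short.

```python
from dataclasses import dataclass

# Hypothetical in-memory knowledge base; a real agent would query a vector store.
DOCS = {
    "refund policy": "Refunds are available within 30 days of purchase.",
    "shipping times": "Standard shipping takes 3-5 business days.",
}


@dataclass
class Reply:
    text: str
    escalated: bool


def retrieve(question: str) -> list[str]:
    """Naive keyword retrieval standing in for embedding search."""
    q = question.lower()
    return [body for title, body in DOCS.items()
            if any(word in q for word in title.split())]


def generate(question: str, snippets: list[str]) -> str:
    """Placeholder for the LLM call: it simply quotes the first snippet."""
    return snippets[0]


def answer(question: str) -> Reply:
    snippets = retrieve(question)
    if not snippets:
        # Escalation policy: nothing grounded to answer from, so hand off.
        return Reply("I'm not sure. Let me connect you with a human.", True)
    return Reply(generate(question, snippets), False)
```

The point of the shape is that the escalation check sits before generation: if retrieval comes back empty, the model never gets the chance to improvise.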

The difference from a rule-based bot is the absence of a script. A rule-based bot fails the moment a customer phrases something the way no one anticipated. An AI agent reads the intent, looks up the relevant doc, and answers in plain language. When it doesn't know, a well-built agent says so and escalates instead of inventing an answer.

I've spent the last year writing about AI deployments at LiveChatAI, and the pattern is consistent across customers. The agents that work are grounded in real internal documentation, have a hard rule for "don't hallucinate, escalate," and report every uncertain answer back to a human reviewer. The ones that fail are the ones treated as a chatbot replacement — bolted on without intent mapping, without escalation, without anyone owning the conversation transcripts.

If you want a deeper definitional comparison, our rule-based vs AI chatbots breakdown covers the underlying tech in more depth.

AI Agent vs AI Chatbot: Key Differences

The terms get used interchangeably, and that's part of why so many AI rollouts disappoint. A chatbot follows a deterministic flow: if the user types A, reply B; if they pick option 2, show menu 3. An AI agent reasons. It reads the question, pulls relevant context, plans a response, and can call external tools. Same channel, different machine.

Three concrete differences show up in production:

1. Scope of conversation. A chatbot handles the path you scripted. Step off the path and the conversation stalls. An AI agent handles anything its training data and tools cover, including questions you didn't anticipate. Gartner's definition of intelligent agents emphasizes goal-directed autonomy as the dividing line.

2. Action-taking. A chatbot tells the customer what to do ("To request a refund, click here"). An agent does it. Through tool-calling, the agent can issue the refund, update the order, or open a ticket on the customer's behalf. That single capability shifts deflection rates from 20-30% to the 60-70% range I see in production accounts.

3. Failure mode. A chatbot fails by getting stuck or repeating itself. An agent fails by either escalating cleanly or, if poorly designed, hallucinating a confident wrong answer. The first failure is recoverable. The second damages trust. This is why escalation policy matters more than model choice.

Our AI agent vs chatbot breakdown goes deeper on evaluation criteria. The short version: if you're handling more than two intents and want to deflect tickets rather than route them, you want an agent.

Why Use AI Agents for Customer Support in 2026

The case for adoption isn't theoretical anymore. The economics, the customer expectations, and the competitive pressure all point the same direction this year.

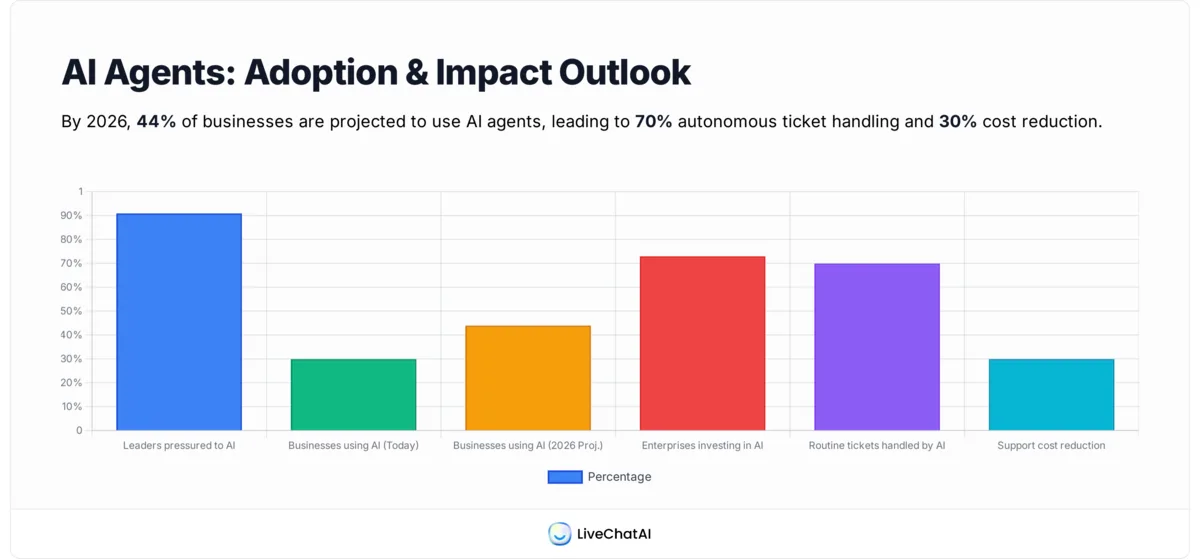

Start with executive pressure. According to Gartner's February 2026 survey, 91% of customer service leaders are being pushed to implement AI in 2026. That isn't a request for a pilot. It's a board-level mandate translated downward, and it's why "we're evaluating tools" is no longer a defensible answer.

Adoption is following. CoSupport's 2026 trends report finds that 30% of global businesses already use AI agents and 44% plan to implement them by 2026. The bell curve is shifting from early adopter to early majority inside a single year. If your competitors aren't running an agent today, close to half of them plan to be soon.

The ROI side is where the conversation usually lands with a CFO. Isometrik's deployment data shows companies running AI agents cut support costs by 30% while handling 70% of routine tickets automatically. That's not a top-line projection. It's a measured outcome from teams that ran the pilot, instrumented the metrics, and kept what worked. Cost-per-ticket drops because the agent answers in seconds and burns no human minutes on password resets, order status, or refund eligibility checks.

Investment levels show this isn't a fad. Agile Soft Labs reports that over 73% of enterprises are actively investing in agentic AI systems, making it the most in-demand development skill of the year. When that much engineering hiring concentrates around one capability, the tooling improves fast — which is good news for the buyer side, since the no-code platforms now match what custom builds did 18 months ago.

The customer expectation half of the equation is more delicate. AI agents are popular among buyers when they work and toxic when they don't. Customers don't want to fight a bot for ten minutes before a human shows up. They want a fast answer or a fast handoff. The teams winning here are the ones who treat the agent as a triage layer, not a wall, and who measure deflection alongside CSAT instead of just looking at one number.

For more on the broader trend, our AI revolution in customer support statistics roundup tracks the year-over-year shift across industries.

Step-by-Step Guide to Create Your AI Support Agent

Here's the path I walk every team through. Two to four weeks end-to-end if you have your help center in reasonable shape. Longer if you're rebuilding documentation along the way.

Step 1: Map Your Top Support Intents and Escalation Rules

Pull the last 90 days of tickets. Cluster them by intent. You're looking for the 10-15 intents that account for 70-80% of volume. This is the work everyone wants to skip and the work that decides whether the agent is useful or noise.

Open your helpdesk reporting and export tickets with subject, first message, and resolution. In a spreadsheet, tag each one with a coarse intent: "order status," "refund request," "password reset," "shipping delay," "feature question." Most teams find that 12 intents cover 75% of tickets. Decide for each intent whether the agent should resolve it autonomously, gather information then escalate, or escalate immediately.
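If the spreadsheet gets unwieldy, the coverage check is easy to script. The sketch below assumes you already have one intent tag per ticket; the function name `intent_coverage`, the 75% target, and the sample tags are my own placeholders, not output from any helpdesk.

```python
from collections import Counter


def intent_coverage(tags: list[str], target: float = 0.75) -> list[tuple[str, int, float]]:
    """Return the most frequent intents until their cumulative share reaches target."""
    counts = Counter(tags)
    total = len(tags)
    rows, running = [], 0
    for intent, n in counts.most_common():
        running += n
        rows.append((intent, n, running / total))
        if running / total >= target:
            break
    return rows


# Made-up sample: one tag per exported ticket.
tags = (["order status"] * 5 + ["refund request"] * 3
        + ["password reset"] + ["feature question"])

for intent, n, cumulative in intent_coverage(tags):
    print(f"{intent:<16} {n:>3}  {cumulative:.0%}")
```

The rows it returns are the start of your one-page intent list: the intent, its volume, and how much of the queue you've covered so far.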

You'll know it's working when: you can show a one-page list of intents, a target action per intent, and a rough volume estimate. If you can't, you're not ready for step two.

Watch out for: tagging at too fine a grain. "Refund for order over $100 from EU customer" is not an intent — it's a branch. Keep the categories broad and let the agent handle nuance through the LLM.

Pro tip: I keep a running spreadsheet column called "where humans add value." Anything that requires empathy, judgment, or policy exceptions goes there. That column becomes the escalation rule set, and it's the single best protection against an agent overstepping.

Step 2: Choose a No-Code AI Agent Platform

You have three real options in 2026: build from scratch on a framework like LangChain, buy a no-code platform, or extend your helpdesk's native AI. For most teams under 500 employees, no-code wins on time-to-value. Custom builds make sense when you need deeply proprietary behavior or you're handling regulated data with custom hosting requirements.

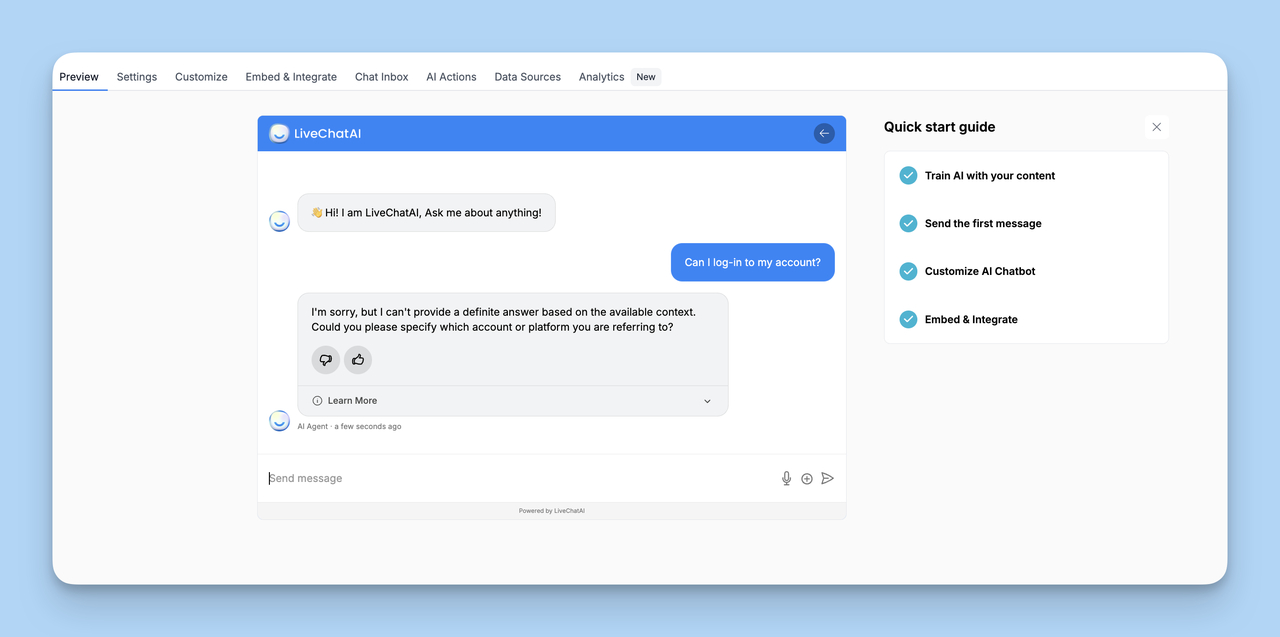

Evaluation criteria I use: knowledge base ingestion (does it accept URLs, PDFs, sitemaps, ticket exports?), action support (can it call your APIs without you writing glue code?), channel coverage (web, WhatsApp, Messenger, email), language coverage if you're multilingual, and pricing that scales with conversations rather than seats. LiveChatAI hits all of these for a no-code build, and our breakdown of AI agent builders walks through the comparisons in detail.

You'll know it's working when: you've shortlisted two platforms, run their free trials with three real tickets each, and confirmed both can ingest your knowledge base in under an hour.

Watch out for: picking on price alone. The cheapest tools usually skimp on retrieval quality, which is what determines whether your agent says smart things or weird things.

Pro tip: ask any vendor to show you their hallucination rate, not just their accuracy rate. They're different numbers, and the hallucination rate is the one that gets you in trouble.

Step 3: Train on Your Help Center, Docs, and FAQs

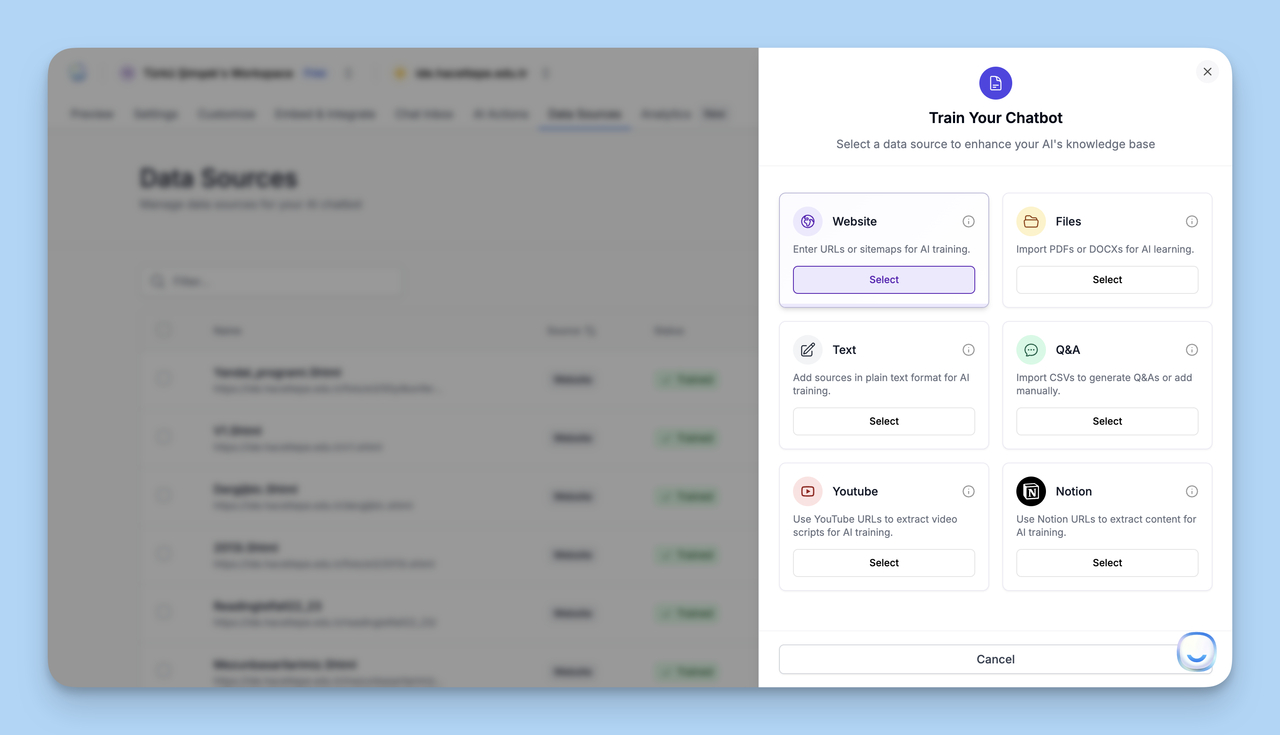

This is where the agent's intelligence comes from. Without grounded training data, an LLM is a confident liar. With it, the same model becomes a competent support agent. Inside LiveChatAI you point the agent at your help center URL, sitemap, or upload PDFs of internal policies. It crawls, chunks, and embeds the content automatically.

The order of operations I recommend: ingest the public help center first, then internal SOPs, then anonymized resolved-ticket transcripts (the gold mine), then product release notes on a recurring weekly sync. Each layer fills a different gap. Help center gives the agent baseline knowledge. SOPs give it policy. Old tickets give it tone and edge cases. Release notes keep it current.
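If "chunks" sounds abstract, this is roughly what the step looks like. It's a generic illustration of overlapping word-based chunking, not LiveChatAI's actual implementation; the function name and the default sizes are assumptions I picked for the example.

```python
def chunk_text(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into word-based chunks; consecutive chunks share `overlap` words."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks
```

The overlap exists so a sentence that straddles a chunk boundary still shows up whole in at least one chunk, which tends to help retrieval on policy text where the exception follows the rule.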

You'll know it's working when: you can ask the agent ten random questions from your last week of tickets and seven or eight come back with answers that cite the right doc. The remaining two or three should clearly say "I don't know" rather than improvising.

Watch out for: conflicting documentation. If your help center says "30-day refund window" and your SOP says "14 days for digital products," the agent will pick one based on retrieval ranking — usually the wrong one. Audit and reconcile before training.

Pro tip: anonymize ticket data before ingesting. Names, emails, order numbers all need to come out. I use a regex sweep plus a manual spot-check on 50 random rows before any ticket batch goes into the agent.
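A regex sweep along those lines might look like the sketch below. The patterns are assumptions: the email pattern is deliberately loose, and the order-number format (`#` followed by four or more digits) is invented for the example, so swap in your own. Names are not handled here at all, which is exactly why the manual spot-check stays in the process.

```python
import re

# Loose email pattern; good enough for scrubbing, not for validation.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
# Assumed order-number format: "#" followed by at least four digits.
ORDER = re.compile(r"#\d{4,}")


def scrub(text: str) -> str:
    """Replace emails and order numbers with fixed placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return ORDER.sub("[ORDER]", text)


print(scrub("Hi, I'm at jane.doe@example.com about order #123456."))
```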

Step 4: Configure Integrations and Customize Voice

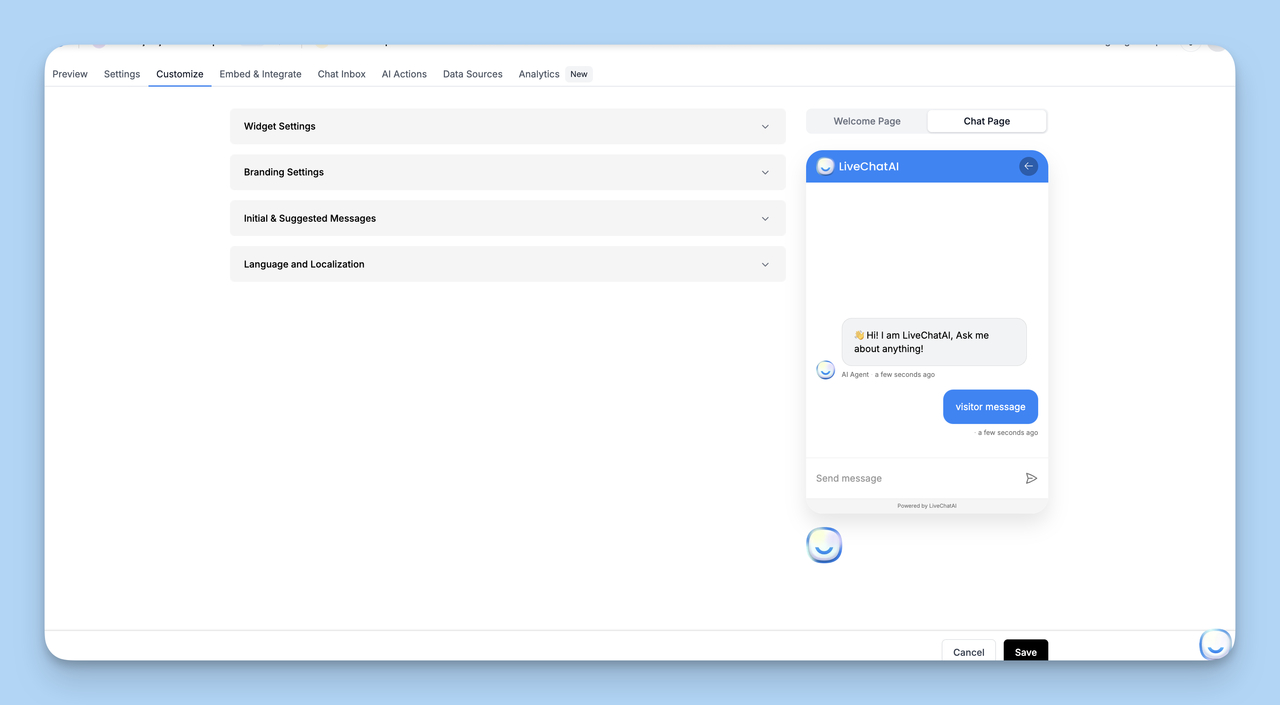

Two parallel tracks here. On the cosmetic side, set your widget colors, logo, welcome message, and the agent's name. On the substantive side, define the agent's voice in the system prompt: how formal, how chatty, what it should never say, when to apologize, how to handle frustrated customers. This part feels soft but shapes every conversation.

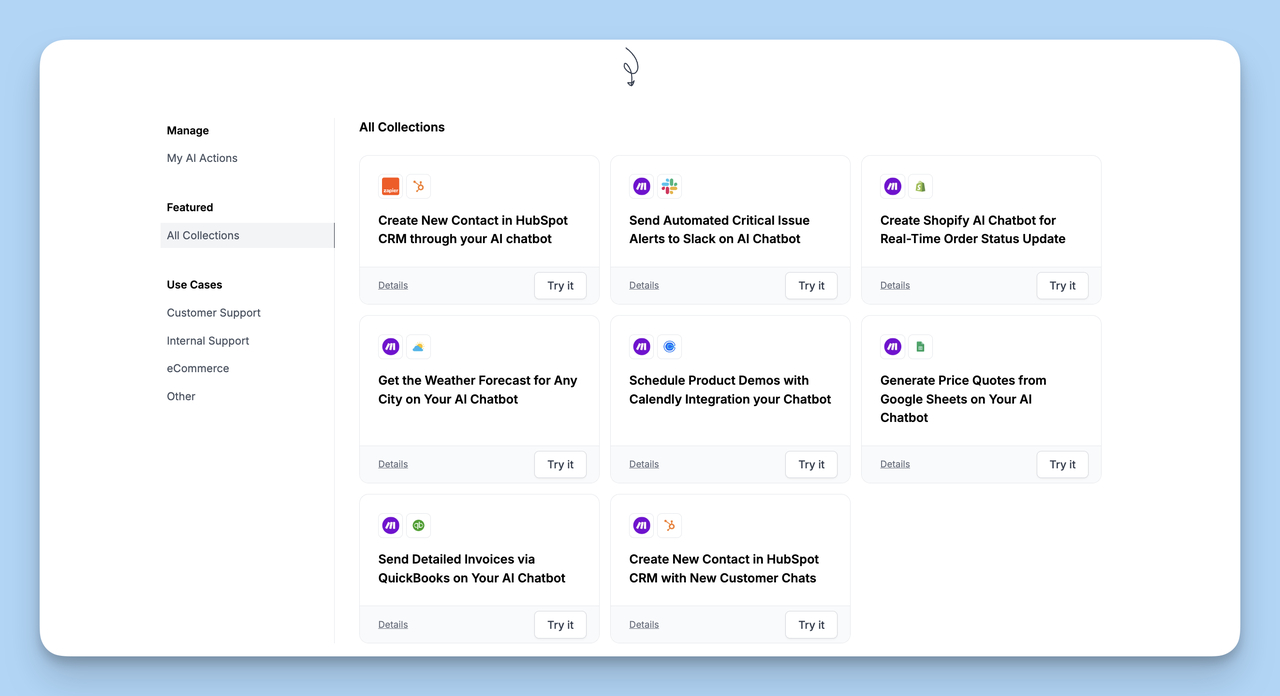

For integrations, decide which actions the agent can take. Common ones: look up an order in Shopify, fetch a customer record from HubSpot CRM, update a ticket in your helpdesk, escalate to a Slack channel, send a transactional email. In LiveChatAI, AI Actions handle this through pre-built templates, OpenAPI definitions, or webhooks for custom endpoints. OpenAI's function-calling guide covers the underlying pattern if you're curious about what's happening under the hood.
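For the curious, here is a rough sketch of that pattern. The tool description follows the JSON-schema shape OpenAI's Chat Completions API has used for function calling; check the current docs before relying on the exact nesting. The `lookup_order` function, the `ORDERS` store, and the `dispatch` helper are hypothetical stand-ins for your own Shopify or helpdesk calls.

```python
import json

# Tool description handed to the model so it knows the function exists.
LOOKUP_ORDER_TOOL = {
    "type": "function",
    "function": {
        "name": "lookup_order",
        "description": "Fetch status and tracking number for an order.",
        "parameters": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
    },
}

# Hypothetical order store standing in for a real commerce API.
ORDERS = {"A1001": {"status": "shipped", "tracking": "TRK-555"}}


def lookup_order(order_id: str) -> dict:
    return ORDERS.get(order_id, {"error": "order not found"})


HANDLERS = {"lookup_order": lookup_order}


def dispatch(name: str, arguments_json: str) -> str:
    """Run the function the model asked for and return a JSON result string."""
    handler = HANDLERS.get(name)
    if handler is None:
        return json.dumps({"error": f"unknown tool: {name}"})
    return json.dumps(handler(**json.loads(arguments_json)))
```

The model never runs code itself. It emits a function name plus JSON arguments, your side executes the call, and the result goes back into the conversation. Keeping `HANDLERS` as an explicit allowlist means an unexpected tool name gets an error instead of an execution.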

You'll know it's working when: the agent answers a question about a real order ("where's my package?") by actually looking up the order in your system and quoting the tracking number, not by sending the customer a generic shipping policy link.

Watch out for: giving the agent write access too early. Read-only integrations first, write actions only after you trust the agent's reasoning on read-only conversations. A bad refund issued at scale is a finance problem, not a support problem.

Pro tip: write the system prompt in plain English, not bullet-point spec language. The model reads it the way a new hire reads an onboarding doc — clearer prose makes for clearer behavior.

Step 5: Test Edge Cases and Multilingual Flows

Build a test suite of 50-100 real conversations. Half from your easiest tickets, half from the messiest. Run them through the agent and grade each one: correct, correct-but-awkward, incorrect, escalated-correctly, escalated-when-it-shouldn't-have, didn't-escalate-when-it-should-have. The last two failure modes matter most.

For multilingual, test in every language you support before you turn it on for that locale. Modern LLMs handle most major languages well, but tone and idiom drift between regions. A test conversation in Spanish about a billing dispute can read fine to a speaker from Spain and feel oddly formal to a customer in Mexico. Have a native speaker from each target market review the agent's outputs before launch.

You'll know it's working when: 90%+ of your test suite passes, and the failure cases are all clean escalations rather than wrong answers.
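Once every conversation has a grade, the tally is a few lines. This sketch uses the six labels from the grading scheme above; which labels count as a pass is my own assumption (I count "correct-but-awkward" as passing), so adjust the sets to your bar.

```python
from collections import Counter

# Assumption: awkward-but-correct answers still count as a pass.
PASSING = {"correct", "correct-but-awkward", "escalated-correctly"}
WRONG_ANSWERS = {"incorrect", "didn't-escalate-when-it-should-have"}


def summarize(grades: list[str]) -> dict:
    """Pass rate plus a split of failures into wrong answers vs. unneeded escalations."""
    counts = Counter(grades)
    total = len(grades)
    passed = sum(counts[g] for g in PASSING)
    return {
        "pass_rate": passed / total if total else 0.0,
        "wrong_answers": sum(counts[g] for g in WRONG_ANSWERS),
        "unneeded_escalations": counts["escalated-when-it-shouldn't-have"],
    }
```

A launch-ready result under this scheme is a pass rate of 0.9 or higher with `wrong_answers` at zero: every failure was an escalation that didn't need to happen, not an answer that was wrong.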

Watch out for: over-fitting to your test suite. If you tune the prompts and retrieval until your 100 test cases pass, you may have made the agent worse on the long tail. Hold out 20 cases as a never-tuned validation set.
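Carving out the validation set is worth doing once, with a fixed seed, so the same cases stay held out across tuning rounds. A minimal sketch, with the function name and seed value as arbitrary choices of mine:

```python
import random


def split_holdout(cases: list, holdout: int = 20, seed: int = 7) -> tuple[list, list]:
    """Return (tuning_set, validation_set); the validation set is never tuned against."""
    if holdout >= len(cases):
        raise ValueError("holdout must be smaller than the number of cases")
    shuffled = cases[:]  # copy so the caller's list keeps its order
    random.Random(seed).shuffle(shuffled)
    return shuffled[holdout:], shuffled[:holdout]
```

Shuffling before the split matters if your suite is ordered easiest-to-messiest; otherwise the holdout ends up all easy tickets or all hard ones.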

Pro tip: for adversarial testing, ask the agent to do something it shouldn't — issue a refund without verifying the order, or share another customer's data. A well-configured agent refuses politely. A poorly configured one obliges. Better to find out in test than in production.

Step 6: Launch a Pilot, Measure, and Iterate

Don't go from zero to all traffic. Pilot on a single channel or a single intent first. The most common pattern: start the agent on the website chat widget but only for visitors who land on help center pages. That gets you signal without putting the agent in front of every customer on day one.

Run the pilot for two to four weeks. The metrics that matter: deflection rate (resolved without human), escalation accuracy (handed off when it should have), CSAT on agent-only conversations, and time-to-first-response. Compare to your human-only baseline from the same period last year.
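Three of those metrics fall out of a simple per-conversation record. The sketch below is one way to compute them, under my own definitions: deflection is the share of conversations that never reached a human, and escalation accuracy is the share where the agent's escalate-or-not decision matched a reviewer's after-the-fact judgment. The `Conversation` fields are hypothetical; map them to whatever your platform exports.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Conversation:
    escalated: bool              # handed off to a human
    should_escalate: bool        # reviewer's judgment after the fact
    csat: Optional[int] = None   # 1-5 survey score, if the customer answered


def pilot_metrics(convs: list[Conversation]) -> dict:
    if not convs:
        raise ValueError("need at least one conversation")
    total = len(convs)
    resolved = [c for c in convs if not c.escalated]
    correct_calls = sum(c.escalated == c.should_escalate for c in convs)
    scores = [c.csat for c in resolved if c.csat is not None]
    return {
        "deflection_rate": len(resolved) / total,
        "escalation_accuracy": correct_calls / total,
        "agent_csat": sum(scores) / len(scores) if scores else None,
    }
```

Note that `agent_csat` only averages conversations the agent closed on its own, which is the number you compare against the human baseline.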

You'll know it's working when: deflection sits above 50%, CSAT on agent-resolved conversations is within five points of human-resolved, and your team is reporting more capacity for complex tickets rather than firefighting AI mistakes.

Watch out for: celebrating deflection rate alone. A high deflection rate with low CSAT means the agent is closing tickets the customer didn't actually want closed. Both numbers matter together.

Pro tip: weekly conversation review for the first month. Pull 20 random transcripts, read them end-to-end, and write down every moment that made you wince. Those moments become next week's prompt updates and retraining priorities. Our piece on chatbot implementation has more on the rollout cadence.

Best Tools and Platforms for Building AI Support Agents

The platform market split in two over the last 18 months. On one side, no-code builders aimed at support teams. On the other, developer frameworks for teams that want full control. Here's how I'd map the market today, with the caveat that I work at LiveChatAI and have an obvious bias toward our product.

LiveChatAI is what I know best. It's a no-code AI agent builder that ingests your help center, supports AI Actions for tool-calling, runs in 95+ languages, and ships a free forever plan that's enough to get a working pilot off the ground. Best fit: B2B SaaS and ecommerce teams who want a working agent in a week without a dev team. The free plan is a fair way to test it on real tickets before any commitment — start at the AI agent for customer support page.

HubSpot Service Hub works well if you're already running HubSpot CRM. The native AI features stay tightly coupled to your contact records, deal pipelines, and helpdesk tickets, which removes a lot of the integration plumbing. Pricing climbs fast at the upper tiers, so it's mostly a fit for teams already on a HubSpot Pro plan or above. Their Service Hub product page has the current feature breakdown.

Salesforce Einstein is the enterprise option. If you live in Service Cloud, Einstein's AI agents reuse your existing case data, knowledge articles, and Flow automations. Implementation is heavier — usually a partner-led engagement — but the depth of CRM integration is unmatched at the enterprise tier. Salesforce Agentforce is the current entry point.

LangChain or LlamaIndex if you're a developer who wants full control. These are open-source frameworks for building retrieval-augmented agents from scratch. You're writing Python and operating your own vector database, but you get to design every part of the reasoning loop. Best for teams with at least one ML-literate engineer who can own the codebase. The LangChain agents docs are the right starting point.

Build-your-own RAG with the OpenAI Assistants API. A middle path: use OpenAI's Assistants API for the model and reasoning, plug in your own retrieval layer, and skip both the no-code abstraction and the LangChain learning curve. Faster than from-scratch, more flexible than no-code, but you're still writing application code. OpenAI's Assistants API documentation walks through the setup.

Pick on fit, not features. The cheapest tool you can ship with is better than the most powerful tool you can't.

Integrating AI Agents with Your Existing Systems

The agent isn't useful in isolation. Where it earns its keep is by reading from and writing to the systems your support team already uses. Three integration layers usually need to be in place: the CRM, the helpdesk, and the knowledge base. A fourth layer — channel deployment — decides where customers actually meet the agent.

CRM sync means the agent can fetch customer context before answering. Plan, lifetime value, recent purchases, open issues. The difference between a generic answer and a personalized one is usually a single CRM lookup. In HubSpot or Salesforce, this is a contact-record query. In a custom CRM, it's a REST API call exposed through the agent's actions config.

Helpdesk routing determines what happens when the agent escalates. The pattern I recommend: the agent owns the conversation until it hits a confidence threshold or a forced-escalation intent, at which point it creates a ticket in your helpdesk with the full conversation transcript and a brief summary. The human picks up where the agent left off, with full context, instead of asking the customer to repeat themselves.
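Sketched in code, the handoff has two parts: the decision and the ticket. The forced-escalation intents, the 0.7 threshold, and the ticket field names below are all placeholders I chose for illustration; your helpdesk's API will have its own schema.

```python
# Hypothetical intents that always go to a human, regardless of confidence.
FORCED_ESCALATION = {"legal complaint", "chargeback dispute"}


def needs_handoff(intent: str, confidence: float, threshold: float = 0.7) -> bool:
    """Escalate on a forced intent or when confidence falls below the threshold."""
    return intent in FORCED_ESCALATION or confidence < threshold


def build_ticket(intent: str, summary: str, transcript: list[tuple[str, str]]) -> dict:
    """Bundle the summary and full transcript so the human starts with context."""
    lines = [f"{speaker}: {text}" for speaker, text in transcript]
    return {
        "subject": f"[AI handoff] {intent}",
        "summary": summary,
        "transcript": "\n".join(lines),
    }
```

In practice the summary would come from the model itself; here it's passed in so the sketch stays free of any LLM dependency.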

Knowledge base ingestion needs to be a recurring job, not a one-time setup. Help center articles change. Pricing changes. Product features ship. Schedule a weekly re-crawl at minimum, daily if your docs change frequently. Stale agents tell customers about features that no longer exist, which is worse than no agent at all.
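If you're running your own ingestion, one cheap way to keep re-crawls from re-embedding everything is to fingerprint each page and only reprocess what changed. A minimal sketch, assuming you store one hash per URL from the previous crawl (the function names and the return shape are mine):

```python
import hashlib


def fingerprint(text: str) -> str:
    """Stable content hash for a page body."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def diff_pages(current: dict[str, str], stored: dict[str, str]) -> dict[str, list[str]]:
    """Compare freshly crawled page text against hashes from the last crawl."""
    changed = sorted(url for url, text in current.items()
                     if stored.get(url) != fingerprint(text))
    removed = sorted(url for url in stored if url not in current)
    return {"reingest": changed, "remove": removed}
```

The `remove` list matters as much as `reingest`: a deleted help article that stays in the index is how an agent ends up describing a feature that no longer exists.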

Channel deployment is the last mile. Decide which channels the agent runs on: website chat widget, WhatsApp, Messenger, email auto-reply, in-app chat, voice. Each channel has its own latency, formatting, and escalation needs. Start with website chat — it's the easiest to instrument and the easiest to roll back if the launch goes sideways. Add channels one at a time. Our guide on AI chatbots and human agents has more on the handoff design.

Best Practices for Training and Optimizing AI Agents

The agents that work in production share a small set of habits. The ones that fail share a smaller set of mistakes. After watching dozens of LiveChatAI deployments, here's what separates them.

1. Ground the agent in real internal documentation. The agent should never answer from the model's general knowledge. Every answer should retrieve from your help center, SOPs, or policy docs. If the retrieval returns nothing relevant, the agent should say so and escalate. This single rule prevents most hallucinations.

2. Write a tight system prompt and update it weekly. The system prompt sets the agent's persona, voice, escalation triggers, and forbidden actions. Treat it like product copy: short, specific, edited often. I've seen teams reduce hallucinations 40-50% in the first month just by tightening their system prompt based on real conversation reviews.

3. Build a confidence threshold and respect it. Most platforms expose a confidence score per answer. Below a threshold, the agent should escalate or hedge ("I'm not sure — let me get a human"). Above the threshold, it should answer directly. Tune the threshold against your CSAT data. Too low and the agent guesses. Too high and it escalates everything.

4. Review conversations weekly, not monthly. Pull 20-30 random transcripts each week. Mark every moment that's wrong, awkward, or off-tone. Those notes become the next round of system-prompt edits and retraining priorities. Monthly reviews are too slow — the agent will entrench bad patterns before you catch them.

5. Retrain on resolved tickets, not chat logs alone. Resolved tickets contain the answer humans actually accepted. Chat logs contain everything that was said, including the wrong answers. Feed the resolved-ticket subset into the agent's training corpus. Skip the unresolved or escalated threads unless you've curated them.

6. Test prompt changes against a holdout set. Before pushing a prompt update, run it against 50 frozen test cases. If accuracy or CSAT drops on the holdout, roll back. This is the equivalent of regression testing for code, and it's what stops "small prompt tweaks" from quietly degrading the agent.
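For the confidence threshold in particular, a quick sweep over reviewed conversations shows the tradeoff before you commit to a number. This sketch assumes you have, for each conversation, the platform's confidence score and a reviewer's verdict on whether the answer was right; the sample data is invented.

```python
from typing import Optional


def sweep(samples: list[tuple[float, bool]],
          thresholds: list[float]) -> list[tuple[float, float, Optional[float]]]:
    """For each threshold: (threshold, share answered, accuracy among answered)."""
    rows = []
    for t in thresholds:
        answered = [ok for conf, ok in samples if conf >= t]
        share = len(answered) / len(samples)
        accuracy = sum(answered) / len(answered) if answered else None
        rows.append((t, share, accuracy))
    return rows


# Invented sample: (confidence score, reviewer says the answer was correct).
samples = [(0.95, True), (0.9, True), (0.8, True),
           (0.6, False), (0.55, True), (0.3, False)]

for threshold, share, accuracy in sweep(samples, [0.5, 0.7, 0.9]):
    print(threshold, round(share, 2), accuracy)
```

Raising the threshold trades coverage for accuracy. The right setting is the lowest one where accuracy among answered conversations stops hurting CSAT.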

One honest caveat: even the best-trained agents hallucinate sometimes. The goal isn't zero hallucinations. It's zero confident hallucinations. An agent that says "I don't know — let me connect you with a human" is doing its job, even when it can't answer.

Measuring Success and ROI of AI Customer Support

If you can't measure it, you can't defend the budget. Five metrics carry most of the weight when reporting AI agent performance to leadership.

Deflection rate. Percentage of conversations resolved without a human touch. Healthy targets are 50-70% within the first 90 days, climbing toward 70-80% by month six as the agent learns your edge cases. Anything below 30% means your training data is too thin or your intent map is wrong.

CSAT on agent-resolved conversations. Send the same survey you send for human-resolved conversations. The number should land within 5-10 points of your human baseline. If it's lower than that, customers are accepting the agent's answer but resenting the experience, and that resentment shows up as churn six months later.

Time-to-resolution. Measure from first message to ticket close. Agents collapse this dramatically — the median goes from hours to seconds for routine tickets. Track the distribution, not just the average, because outliers tell you which intents are still hard.

Cost per ticket. Loaded human cost per ticket vs agent cost per ticket. The agent number includes platform fees, model costs, and the time your team spends maintaining the agent. Isometrik's deployment data puts the savings around 30% in the first year, which matches what I see in mid-market teams.

Lift in human agent productivity. The point of the agent isn't to replace humans — it's to free them for the work that needs judgment. Measure tickets per human agent per week before and after launch. A 20-30% lift is realistic. If you're seeing 0%, the agent isn't deflecting the right intents.

Report these monthly. Stack them in a single dashboard. The minute you can't show all five, leadership stops trusting any of them.
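The cost-per-ticket comparison is simple arithmetic, and it helps to write it down so finance can check the inputs. The sketch below is a simplification: it treats human cost as scaling linearly with tickets handled, and every number in the example is hypothetical, not a benchmark.

```python
def blended_cost_per_ticket(human_cost_per_ticket: float,
                            agent_monthly_cost: float,
                            tickets: int,
                            deflection_rate: float) -> float:
    """Average cost per ticket once the agent deflects a share of the volume.

    agent_monthly_cost should include platform fees, model costs, and the
    team time spent maintaining the agent.
    """
    if tickets <= 0:
        raise ValueError("tickets must be positive")
    human_tickets = tickets * (1 - deflection_rate)
    total = human_tickets * human_cost_per_ticket + agent_monthly_cost
    return total / tickets


# Hypothetical inputs: $8 loaded cost per human ticket, 5,000 tickets a month,
# $4,000 a month all-in for the agent, 60% deflection.
baseline = 8.0
blended = blended_cost_per_ticket(baseline, 4000.0, 5000, 0.6)
savings = 1 - blended / baseline
print(f"blended: ${blended:.2f}, savings: {savings:.0%}")
```

Real savings usually come in lower than the formula suggests in the first few months, because headcount doesn't shrink the day deflection goes up; the freed capacity shows up first as the productivity lift above.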

Common Challenges and Solutions When Creating an AI Agent for Customer Support

Five problems show up in almost every AI agent rollout. None are fatal. All are solvable if you know to expect them.

Challenge: hallucinations. The agent invents an answer that sounds right and isn't. Solution: enforce retrieval-grounded answers with a confidence threshold, require the agent to cite the source doc inline, and escalate any answer below the threshold. The Isometrik teams running tight retrieval policies report hallucination rates under 2%.

Challenge: customer trust. Some customers don't want to talk to AI at all, especially in regulated industries. Solution: tell them upfront that they're chatting with an AI agent and offer a one-tap human handoff at any point. Hidden AI is the fastest way to lose trust. Transparent AI with a clear escalation button performs nearly as well as undisclosed AI on CSAT.

Challenge: stale knowledge. The agent answers from outdated docs because the help center hasn't been re-crawled since launch. Solution: schedule weekly automatic re-crawls, set up alerts when key pages change, and assign one human owner of the agent's knowledge base. Without an owner, knowledge rot is inevitable.

Challenge: integration breakage. An API the agent depends on changes its schema, and now order lookups silently fail. Solution: instrument every action with success/failure logging, alert on action error rates above a threshold, and treat the agent's actions as production code with the same monitoring you'd give any service.

Challenge: edge-case escalations that drop context. The agent escalates but the human picks up cold, asking the customer to repeat the issue. Solution: every escalation should carry a transcript and a one-paragraph summary into the helpdesk ticket. This is a configuration decision, not a technical limit, and it's the single biggest predictor of post-handoff CSAT.
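For the integration-breakage case, the monitoring can start very small. Here is a sketch of a rolling error-rate check over the most recent action calls; the class name, the window size, and the 10% alert threshold are assumptions, and in production you'd feed the alert into whatever paging or Slack hook you already use.

```python
from collections import deque


class ActionMonitor:
    """Tracks success/failure of the last `window` calls to one agent action."""

    def __init__(self, window: int = 50, max_error_rate: float = 0.1):
        self.results = deque(maxlen=window)  # oldest results fall off automatically
        self.max_error_rate = max_error_rate

    def record(self, ok: bool) -> None:
        self.results.append(ok)

    def error_rate(self) -> float:
        if not self.results:
            return 0.0
        return self.results.count(False) / len(self.results)

    def should_alert(self) -> bool:
        return self.error_rate() > self.max_error_rate
```

One monitor per action is the useful granularity: an order-lookup schema change shouldn't be averaged away by a healthy ticket-update action.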

Pilot Your AI Support Agent in 30 Days

You don't need a six-month roadmap to ship a useful AI agent for customer support. You need a tight intent map, a no-code platform with real retrieval, your help center cleaned up enough to ingest, and a 30-day pilot on a single channel. That's the minimum, and it's also enough to see the deflection numbers that justify the rest.

The teams that win this year are the ones who start narrow and instrument well. Pick three intents. Train on real docs. Pilot on one channel. Measure deflection and CSAT side by side. Iterate weekly. The full rollout follows naturally once the first slice is proving itself.

If you want to skip the platform-comparison phase, LiveChatAI's free plan is built for exactly this kind of pilot — ingest your help center, ship an agent on your website, and read the deflection numbers in a week. Whatever platform you choose, the framework above is what makes the pilot work.

Frequently Asked Questions

How do I create an AI agent for customer support for free?

You can build a working AI support agent on a free plan from no-code platforms like LiveChatAI, which includes core agent features, knowledge base ingestion, and a website widget at no cost. For developer builds, the open-source LangChain RAG tutorial gets you to a functional retrieval agent in a few hours, though you'll pay for the underlying LLM API calls. Free plans are real options for pilots and small teams; you usually outgrow them once volume crosses a few hundred conversations a month, at which point a paid tier still costs less than one human agent.

How do I create an AI agent for customer support on GitHub?

Several open-source repos give you a starting point. The LangChain GitHub repo is the most active framework and includes example agents specifically for customer support. LlamaIndex focuses on the retrieval side. Clone the repo, point it at your docs, configure an LLM API key, and you have a baseline agent in an afternoon. The honest tradeoff: GitHub-based agents need engineering ownership for hosting, monitoring, and updates. No-code platforms hide all that complexity but cost money. Pick based on whether you have an engineer who wants to own the system or a budget that can replace one.

What is the best AI agent for customer service?

The best AI agent depends on your team size, existing stack, and how much engineering capacity you have. For small to mid-sized B2B SaaS teams without a dedicated AI engineer, no-code platforms like LiveChatAI ship the fastest. For teams already on HubSpot, the native HubSpot Service Hub AI keeps everything in one place. For enterprise-scale Service Cloud users, Salesforce Einstein wins on CRM depth. For teams with engineering capacity who want full control, LangChain plus the OpenAI Assistants API is the most flexible. There's no universal best — the best is the one your team can actually launch and maintain.

How does AI improve customer support efficiency?

AI agents resolve routine tickets in seconds instead of minutes, which collapses the median time-to-resolution and frees your human agents for complex work. The two efficiency gains compound: cost per ticket drops because the agent runs without a salary, and human agent productivity rises because they're handling the tickets that actually need judgment instead of the routine ones. Iris Agent's deployment data indicates AI deflects 60%+ of tickets and trims operating costs 30-60% across the teams it tracks. The other efficiency lift is 24/7 coverage — the agent doesn't sleep, so customers in different time zones get the same fast first response.

How long does it take to build an AI customer support agent?

Two to four weeks for a no-code build with a clean help center. Add another two weeks if you're cleaning up documentation along the way. Custom builds on LangChain or similar frameworks usually take two to three months for a production-ready agent, including the testing and monitoring you actually need before going live. The biggest variable isn't the platform — it's the state of your existing documentation. Teams with mature help centers ship faster because the agent has good training data on day one. Teams with thin docs spend most of their build time writing what the agent needs.
