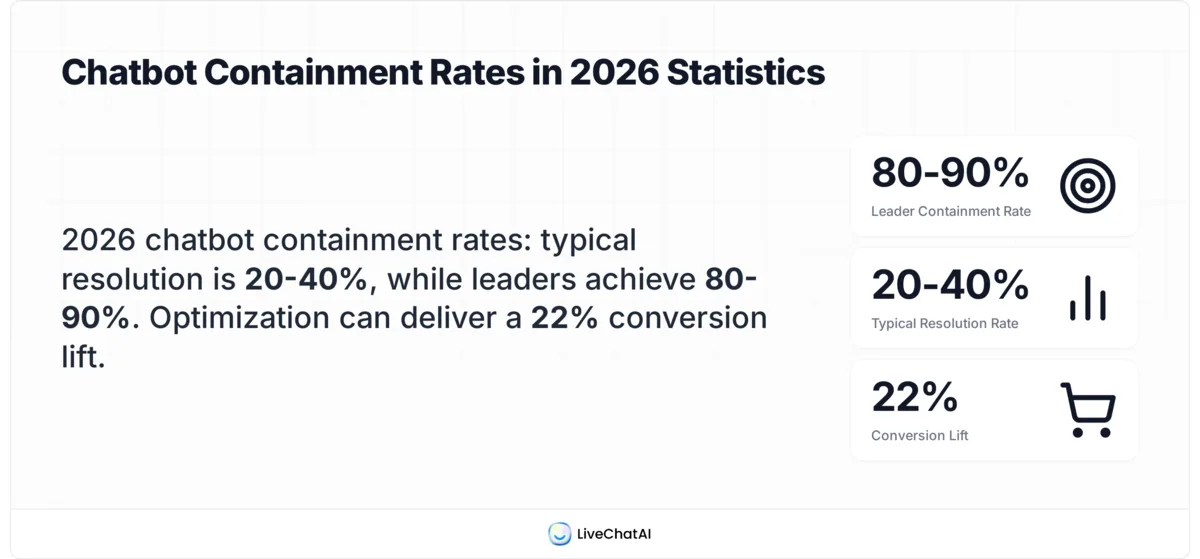

Chatbot containment rate is the percentage of customer conversations a chatbot handles end-to-end without escalating to a human agent. It's calculated as (Total Conversations − Escalated Conversations) ÷ Total Conversations × 100. Most bots sit between 20% and 40%, while top performers hit 80-90%. Pair it with CSAT or you're measuring noise.

What is chatbot containment rate?

Chatbot containment rate measures how much of your support volume an AI bot handles entirely on its own. If 1,000 people start a conversation with your bot and 750 of them close the chat without ever asking for a human, your containment rate is 75%.

I've tracked containment metrics across dozens of LiveChatAI customer rollouts, and the number gets misread constantly. People treat it like a report card grade. It isn't. It's an automation throughput metric, not a satisfaction metric. According to ASAPP, containment rate is not a good measure of success when used alone. A bot can "contain" a customer who gave up, lied about being helped, or rage-quit the widget. All three count as wins on the dashboard.

So why does it matter? Because containment rate is the cleanest proxy you have for cost-to-serve. Every contained conversation is one your support team didn't pay an agent to handle. Each percentage point of containment means fewer conversations landing in the agent queue, which shows up as fewer tickets, less agent time spent on routine questions, and faster first response on the conversations that actually need a person. The trick is reading containment alongside CSAT, escalation reasons, and reopen rate so you don't optimize a vanity number.

If you're new to support analytics, our customer support statistics roundup gives you the wider context for where containment fits in the broader stack of KPIs.

How to calculate chatbot containment rate

The formula is short. The judgment call is in deciding what counts as an escalation.

The standard formula:

Containment Rate = (Total Conversations − Escalated Conversations) ÷ Total Conversations × 100

Worked example. Your bot handles 1,000 conversations in a week. 250 of those conversations get handed off to a human agent (the bot couldn't answer, the user typed "agent," or a routing rule triggered). 1,000 − 250 = 750. 750 ÷ 1,000 × 100 = 75% containment.
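As a quick sanity check, the formula and the worked example above fit in a few lines of Python. This is a minimal sketch; the function name `containment_rate` is ours for illustration, not something from a specific analytics tool.

```python
def containment_rate(total_conversations: int, escalated_conversations: int) -> float:
    """Return containment rate as a percentage between 0 and 100."""
    if total_conversations <= 0:
        raise ValueError("total_conversations must be positive")
    if not 0 <= escalated_conversations <= total_conversations:
        raise ValueError("escalated_conversations must be between 0 and total_conversations")
    contained = total_conversations - escalated_conversations
    return contained / total_conversations * 100


# The worked example: 1,000 conversations, 250 escalated.
print(containment_rate(1000, 250))  # 75.0
```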

What you have to decide before you trust the number:

• How you count abandoned chats: A user opens the bot, asks one question, doesn't reply to the bot's answer, and closes the tab. Counted as contained? Most analytics tools say yes by default. That inflates the number. We recommend excluding sessions with under 30 seconds of dwell time, or treating "no reply within 2 minutes after the bot's answer" as inconclusive rather than contained.

• What "escalation" actually means: A live handoff is obvious. A "request callback" form, a redirect to email support, or a contact-us link click are escalations too. Quickchat AI notes that if your bot has a containment rate of 70%, that means 7 out of 10 conversations are fully automated — but only if you've defined what fully automated means in your tool.

• The window: Daily containment swings wildly. Use rolling 7-day or 30-day windows to smooth out the noise from product launches, outages, or marketing pushes that change query mix.
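The first two decisions above can be written down as explicit rules, which makes them easier to argue about. The sketch below is one possible encoding, not how any particular tool does it. The `Session` fields, the event names, and the choice to drop inconclusive sessions from the denominator are all assumptions, and "no reply" is interpreted here as the user never responding to the bot's first answer within 2 minutes.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Outcome(Enum):
    CONTAINED = "contained"
    ESCALATED = "escalated"
    INCONCLUSIVE = "inconclusive"
    EXCLUDED = "excluded"


# Hypothetical event names: anything here counts as an escalation,
# not just a live handoff.
ESCALATION_EVENTS = {"live_handoff", "callback_request", "email_redirect", "contact_link_click"}

MIN_DWELL_SECONDS = 30        # shorter sessions are excluded entirely
NO_REPLY_LIMIT_SECONDS = 120  # slower (or missing) replies are inconclusive


@dataclass
class Session:
    dwell_seconds: float
    events: set
    # Seconds between the bot's first answer and the user's next message.
    # None means the user never replied.
    seconds_to_first_reply: Optional[float]


def classify(session: Session) -> Outcome:
    # Escalations are checked first so a fast handoff is never dropped as "too short".
    if session.events & ESCALATION_EVENTS:
        return Outcome.ESCALATED
    if session.dwell_seconds < MIN_DWELL_SECONDS:
        return Outcome.EXCLUDED
    reply = session.seconds_to_first_reply
    if reply is None or reply > NO_REPLY_LIMIT_SECONDS:
        return Outcome.INCONCLUSIVE
    return Outcome.CONTAINED


def containment_from_sessions(sessions) -> Optional[float]:
    """Containment over sessions with a clear outcome; None if there are none."""
    outcomes = [classify(s) for s in sessions]
    contained = outcomes.count(Outcome.CONTAINED)
    escalated = outcomes.count(Outcome.ESCALATED)
    counted = contained + escalated
    return contained / counted * 100 if counted else None
```

Whether inconclusive sessions belong in the denominator is a judgment call. Whatever you choose, report the inconclusive count next to the rate so the number can't quietly inflate.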

The number itself takes 30 seconds to calculate. The plumbing under the number (intent tagging, escalation logging, session boundaries) takes a few weeks of cleanup before you can trust it.
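For the rolling windows mentioned above, here is a small sketch that assumes you already have daily totals and daily escalation counts, oldest day first. It pools the counts inside each window instead of averaging daily percentages, so a low-volume day doesn't get the same weight as a busy one. The function name and input shape are assumptions for illustration.

```python
from collections import deque


def rolling_containment(daily_counts, window_days=7):
    """daily_counts: iterable of (total, escalated) tuples, oldest day first.

    Returns one containment percentage per day, starting from the first day
    that has a full window behind it. A window with zero traffic yields None.
    """
    window = deque(maxlen=window_days)
    rates = []
    for total, escalated in daily_counts:
        window.append((total, escalated))
        if len(window) == window_days:
            total_sum = sum(t for t, _ in window)
            escalated_sum = sum(e for _, e in window)
            if total_sum:
                rates.append((total_sum - escalated_sum) / total_sum * 100)
            else:
                rates.append(None)
    return rates
```

Call it with `window_days=30` for the monthly view. The same daily export feeds both.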

What's a good chatbot containment rate in 2026?

The honest answer is "it depends on what you're containing." A simple FAQ bot for a SaaS pricing page should be hitting 85% or it's broken. A bot fielding billing disputes for an enterprise B2B platform might top out at 35% and still be a huge win.

Here's where the industry actually sits in 2026. According to Alhena AI, most chatbots resolve 20-40% of conversations without agents, while leaders hit 80-90%. That's a gigantic spread, and the difference between the bottom and top of that range is rarely the underlying tech. It's how the bot was trained, what intents it covers, and how aggressively the team prunes failed conversations every week.

Industry-by-industry, here's what we see in our customer audits:

• E-commerce and SaaS self-serve: 60-80% containment is reachable. Order status, refund eligibility, password resets, plan questions. Predictable intents, structured backend data, low compliance risk.

• B2B SaaS support: 40-60%. Account-specific debugging and integration questions need context the bot doesn't always have, but FAQ-shaped questions still contain well.

• Financial services and insurance: 25-45%. Compliance, identity verification, and dispute handling pull a lot of conversations to humans by policy, not by bot failure.

• Healthcare: 20-40%. Triage and appointment scheduling contain well. Anything clinical escalates by default and should.

The harder benchmark is the one most teams ignore. Conferbot reports that 68% of chatbots underperform because their owners never optimize them after launch. Your starting containment rate isn't your real number. It's the number you get after three months of weekly review of failed conversations, which is where most teams quit. For broader market context, our AI adoption benchmarks piece breaks down how support orgs are actually deploying these tools.

Containment rate vs deflection rate vs resolution rate

These three terms get used interchangeably in vendor marketing, and they shouldn't be. They measure different things, and confusing them leads to dashboards that don't reconcile.

Containment rate is conversation-level. It asks: of all the chats that started with the bot, how many ended without a human? It says nothing about whether the customer's problem got solved.

Deflection rate is ticket-level. It asks: how many support tickets did we avoid because the customer used self-service or the bot instead? Deflection assumes a baseline of "what would have been a ticket." It's harder to measure cleanly because it requires you to model what would've happened without the bot. Calabrio defines a chatbot or contact center containment rate as the percentage of total chatbot interactions that are successfully contained, while deflection sits one layer up at the support-channel level.

Resolution rate is the only one that measures whether the customer actually got their problem solved. It asks: of the conversations the bot handled, how many ended with the user's issue resolved? You measure resolution rate with a post-chat "did this solve your problem" prompt, a CSAT survey, or by tracking whether the same user opens another chat about the same topic within 7 days.

The relationship: a chat can be contained without being resolved. A chat can be resolved without deflecting a ticket (the user wasn't going to file one anyway). A bot can have 90% containment, 60% resolution, and 35% deflection all at the same time. That's why dashboards need all three.
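To make the relationship concrete, here is a sketch that computes all three rates from the same set of conversations. The `Conversation` fields are hypothetical. In particular, `would_have_been_ticket` stands in for whatever baseline model you use for deflection, and this sketch assumes all three rates share the same denominator (every conversation that started with the bot) and that only resolved conversations count as deflected.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    escalated: bool               # handed to a human by any route
    resolved: bool                # e.g. "yes" on a post-chat prompt
    would_have_been_ticket: bool  # modelled baseline, the hardest field to fill honestly


def three_rates(conversations):
    total = len(conversations)
    if total == 0:
        raise ValueError("need at least one conversation")
    contained = [c for c in conversations if not c.escalated]
    resolved = [c for c in contained if c.resolved]
    deflected = [c for c in resolved if c.would_have_been_ticket]
    return {
        "containment": 100 * len(contained) / total,
        "resolution": 100 * len(resolved) / total,
        "deflection": 100 * len(deflected) / total,
    }


# 20 conversations: 18 contained, 12 of those resolved, 7 of those deflected a ticket.
sample = (
    [Conversation(False, True, True)] * 7
    + [Conversation(False, True, False)] * 5
    + [Conversation(False, False, False)] * 6
    + [Conversation(True, False, True)] * 2
)
print(three_rates(sample))
# {'containment': 90.0, 'resolution': 60.0, 'deflection': 35.0}
```

The six contained-but-unresolved conversations in the sample are the ones a containment-only dashboard would report as wins.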

If you only have time to add one alongside containment, add resolution rate. It's the one that catches the failure mode where customers give up and your containment number lies to you about it.

Factors that affect chatbot containment rate

From the rollouts I've watched, containment rate moves on these levers more than anything else:

• Bot training quality: The single biggest factor. A bot trained on 50 well-tagged FAQ pairs outperforms a bot trained on 500 raw scraped help articles. Quality of intent labels and quality of response matter more than corpus size.

• Intent coverage: Containment cratered for one customer the day they launched a new feature, because no one updated the bot's knowledge base. The bot didn't know the feature existed and escalated 100% of those conversations. Coverage gaps are the most common containment killer in the first 6 months post-launch.

• Fallback strategy: What happens when the bot doesn't know? "I don't understand, please rephrase" tanks containment because users immediately ask for an agent. A fallback that offers 3 likely topics or routes to a knowledge-base search recovers 20-30% of those would-be escalations.

• Escalation triggers: Some teams set the bot to hand off after 2 unrecognized intents. Others wait for 4 or for the user to type "agent." Tighter triggers protect CSAT but lower containment. Looser triggers boost containment but frustrate the people who needed help 3 messages ago.

• Channel: A bot on a help-center page (where users came looking for self-service) contains better than a bot on a checkout page (where users want to buy and have a blocking question). Same bot, different intent mix, different ceiling.

• Audience type: Existing customers asking account-specific questions contain worse than prospects asking pricing questions. Self-serve audiences with technical literacy contain better than first-time users.

• Industry complexity: As the benchmarks above show, highly regulated industries have lower realistic ceilings. That's not a bot problem. Policy sets a floor on how many conversations have to escalate.

The lever most teams ignore is the third one. Fallback design is invisible until you read transcripts, and most teams never read transcripts. Disciplined chatbot QA on a weekly cadence is what separates the 35% bots from the 75% bots.
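The escalation-trigger trade-off from the list above is easy to see in code. This sketch assumes each turn arrives as a `(user_text, intent_recognized)` pair and counts unrecognized intents cumulatively over the conversation. Whether your platform counts them consecutively or cumulatively is something to check, and the keyword list is a placeholder.

```python
import re

AGENT_KEYWORDS = {"agent", "human"}  # placeholder list of explicit handoff requests


def should_escalate(turns, max_unrecognized=2):
    """turns: list of (user_text, intent_recognized) pairs in conversation order."""
    unrecognized = 0
    for text, recognized in turns:
        words = set(re.findall(r"[a-z]+", text.lower()))
        if words & AGENT_KEYWORDS:
            return True  # an explicit request always wins
        if not recognized:
            unrecognized += 1
            if unrecognized >= max_unrecognized:
                return True
    return False


chat = [("where is my order", True), ("blargh", False), ("qwerty", False)]
print(should_escalate(chat, max_unrecognized=2))  # True: tight trigger hands off
print(should_escalate(chat, max_unrecognized=4))  # False: loose trigger keeps going
```

Replaying last month's transcripts through two different thresholds is a cheap way to estimate how much containment each setting buys before you change anything in production.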

7 strategies to improve chatbot containment rate

None of these are exotic. The reason most bots stall at 30-40% is that teams skip the boring weekly review work, not that they need a smarter model.

Expand intent training data

Pull the last 30 days of unrecognized queries, cluster them by topic, and add the top 10 clusters to your training set. Most teams add intents reactively, one at a time, after a customer complaint. Doing it in batches every two weeks lifts containment by 8-12 percentage points in the first quarter for bots that started below 50%.
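If you don't have clustering tooling yet, even crude keyword bucketing gets you a ranked list to work from. The sketch below is a stand-in for real clustering, not a replacement for it. The topic names and keywords in `TOPIC_KEYWORDS` are made up, and anything that matches no bucket lands in "uncategorized" for manual review.

```python
import re
from collections import Counter

# Hypothetical buckets: replace with terms from your own product.
TOPIC_KEYWORDS = {
    "refunds": {"refund", "refunds", "money"},
    "billing": {"invoice", "charge", "billing"},
    "login": {"password", "login", "locked"},
}


def top_topics(queries, n=10):
    """Count unrecognized queries per keyword bucket and return the n largest."""
    counts = Counter()
    for query in queries:
        words = set(re.findall(r"[a-z]+", query.lower()))
        # First bucket with any keyword overlap wins.
        topic = next(
            (name for name, keys in TOPIC_KEYWORDS.items() if words & keys),
            "uncategorized",
        )
        counts[topic] += 1
    return counts.most_common(n)
```

A large "uncategorized" bucket is itself a finding: it means the keyword list is stale, or users are asking about something nobody has named yet.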

Improve fallback responses

Rewrite "I didn't understand that" to "I'm not sure I have an answer for that. Were you asking about [topic A], [topic B], or [topic C]?" Pull the three options from the closest matching intents. This single change recovers 15-25% of conversations that would've escalated, with no model changes needed.
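Here is a rough sketch of how the three options could be picked. It scores each intent by word overlap (Jaccard similarity) between the user's message and a few example words per intent. That scoring is an assumption chosen to keep the example dependency-free. A production bot would more likely reuse the similarity scores its NLU model already produces. The intents in `INTENT_EXAMPLES` are invented, and the sketch assumes at least three exist.

```python
import re

# Hypothetical intents with a few representative words each.
INTENT_EXAMPLES = {
    "order status": "where is my order tracking delivery",
    "refund eligibility": "can i get a refund return money back",
    "password reset": "forgot password reset login locked",
    "plan pricing": "how much does the plan cost pricing upgrade",
}


def _tokens(text):
    return set(re.findall(r"[a-z]+", text.lower()))


def closest_intents(query, k=3):
    """Return the k intent names with the highest word overlap with the query."""
    query_words = _tokens(query)

    def jaccard(name):
        intent_words = _tokens(INTENT_EXAMPLES[name])
        union = query_words | intent_words
        return len(query_words & intent_words) / len(union) if union else 0.0

    return sorted(INTENT_EXAMPLES, key=jaccard, reverse=True)[:k]


def fallback_message(query):
    a, b, c = closest_intents(query, 3)
    return (
        "I'm not sure I have an answer for that. "
        f"Were you asking about {a}, {b}, or {c}?"
    )


print(fallback_message("my order never arrived and I want money back"))
```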

Add suggested replies

Show 3-4 quick-reply buttons after the bot's first message based on the page the user is on. A pricing page bot opens with "View plans," "Talk to sales," "Free trial details." This funnels conversations into intents you've already trained for and lifts containment 10-20% on entry-point bots.

Build a knowledge base

If your bot pulls answers from a help center, the help center itself is the lever. Audit the top 20 escalated topics, write or update the underlying article, and re-index. Containment follows article quality. A thin knowledge base produces a thin bot, regardless of model.

Track failed conversations

Tag every escalated conversation with the reason: missing intent, wrong answer, user typed "agent," compliance trigger. Without this tagging, you're optimizing blind. With it, you can see which 20% of failure modes account for 80% of escalations and fix those first.
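Once the tags exist, finding the failure modes worth fixing first is a short counting exercise. This sketch assumes each escalated conversation carries exactly one reason tag. The tag names and counts are examples, not real data.

```python
from collections import Counter


def top_failure_modes(reasons, coverage=0.8):
    """Return the most frequent reasons whose combined share first reaches `coverage`."""
    counts = Counter(reasons)
    total = sum(counts.values())
    selected, running = [], 0
    for reason, count in counts.most_common():
        selected.append((reason, count))
        running += count
        if running / total >= coverage:
            break
    return selected


# Example tags for 100 escalated conversations.
tags = (
    ["missing_intent"] * 50
    + ["wrong_answer"] * 30
    + ["user_typed_agent"] * 15
    + ["compliance_trigger"] * 5
)
print(top_failure_modes(tags))
# [('missing_intent', 50), ('wrong_answer', 30)]
```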

A/B test conversation flows

Try two greeting variants for two weeks each. Try a version with quick-reply buttons against a free-text-only version. Test your fallback wording. Containment rate is sensitive to flow design, and most teams ship one version and never iterate. The biggest wins we've seen came from a 3-message sequence change, not a model upgrade.
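Before declaring a winner, it's worth checking that the difference between two variants is larger than week-to-week noise. One common way to do that is a two-proportion z-test, sketched here with only the standard library. This is a suggestion on our part, not a method any specific platform prescribes, and it assumes the two variants saw independent, comparable traffic.

```python
import math


def two_proportion_z_test(contained_a, total_a, contained_b, total_b):
    """Compare containment of variant B against variant A.

    Returns (z, p_value), where p_value is two-sided.
    """
    if total_a <= 0 or total_b <= 0:
        raise ValueError("both variants need at least one conversation")
    p_a = contained_a / total_a
    p_b = contained_b / total_b
    pooled = (contained_a + contained_b) / (total_a + total_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if se == 0:
        return 0.0, 1.0  # both variants at 0% or 100%: no detectable difference
    z = (p_b - p_a) / se
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Variant A: 600 of 1,000 contained. Variant B: 660 of 1,000 contained.
z, p = two_proportion_z_test(600, 1000, 660, 1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

Run the same test on CSAT for contained conversations before shipping the winner, so a flow that "wins" by trapping users doesn't slip through.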

Pair AI with rule-based fallbacks

For your top 10 most common intents, write rule-based responses as a safety net. If the AI confidence drops below a threshold on a known-common intent, fall back to the rule. This catches the cases where the AI gets cute and gives a wrong answer, and it gives you guaranteed performance on the queries that drive most of your volume. Pure-AI bots feel modern but are harder to debug.
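The safety net described above comes down to one conditional. In this sketch the rule texts, the intent names, and the 0.7 threshold are all placeholders. The right threshold depends on how your model's confidence scores are calibrated.

```python
# Hypothetical rule-based answers for high-volume intents.
RULE_RESPONSES = {
    "order_status": "You can track your order from the Orders page in your account.",
    "password_reset": "Use the 'Forgot password' link on the sign-in page to reset it.",
}

CONFIDENCE_THRESHOLD = 0.7  # assumed value; tune against your own transcripts


def choose_response(intent, confidence, ai_answer):
    """Return (answer, source), where source is 'rule' or 'ai'."""
    if intent in RULE_RESPONSES and confidence < CONFIDENCE_THRESHOLD:
        return RULE_RESPONSES[intent], "rule"
    return ai_answer, "ai"


print(choose_response("order_status", 0.55, "Your order might be somewhere."))
# ('You can track your order from the Orders page in your account.', 'rule')
```

Logging the `source` value alongside each conversation also helps with debugging, because you can see how often the rule path fires and for which intents.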

Common containment rate mistakes

When teams chase containment numbers without watching CSAT, the same handful of mistakes shows up again and again.

Chasing the number itself. The fastest way to push containment up is to make escalation harder. Hide the "talk to a human" button. Loop users through clarifying questions. Containment goes up, CSAT collapses, and reopen rate spikes 2-3 weeks later when the same customers come back angrier. Sam Talasila on LinkedIn put it bluntly: his client's chatbot had a 75 percent containment rate, and customers still hated it.

Ignoring CSAT. Containment without CSAT alongside it is meaningless. The only valid containment number is one read together with the satisfaction score for contained conversations. If contained CSAT is below 4.0/5.0, your bot is making customers worse off than transferring them would.

No escalation path. Some teams remove the human handoff entirely to force containment to 100%. This works for FAQ bots on low-stakes pages and nowhere else. Customers with real problems don't go away, they go to email, social media, or your competitors.

Single-metric optimization. Containment is one number on a four-number dashboard. The other three: resolution rate, CSAT, and escalation reason distribution. If you can't see all four, you can't tell whether containment going up is a good thing or a sign that something is breaking. VUX World defines the containment rate as the percentage of users who interact with an automated service and leave without speaking to a live human agent. That definition is neutral about whether they got help. So is the metric.

Track containment alongside CSAT this quarter

If your team is reviewing containment rate in isolation, change that next sprint. Add CSAT for contained conversations to the same dashboard, set a floor (most teams use 4.0/5.0), and treat any containment improvement that drops CSAT below the floor as a regression, not a win.
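That rule is simple enough to automate in a weekly report. A minimal sketch, assuming each period is summarized as a dict with a `containment` percentage and a `contained_csat` score on a 5-point scale (both key names are ours):

```python
def evaluate_change(before, after, csat_floor=4.0):
    """Label a period-over-period change in containment.

    before / after: dicts with 'containment' (percent) and
    'contained_csat' (CSAT for contained conversations, 1-5 scale).
    """
    improved = after["containment"] > before["containment"]
    if improved and after["contained_csat"] < csat_floor:
        return "regression"  # containment went up but CSAT fell through the floor
    if improved:
        return "improvement"
    return "no improvement"


last_week = {"containment": 60.0, "contained_csat": 4.3}
this_week = {"containment": 68.0, "contained_csat": 3.8}
print(evaluate_change(last_week, this_week))  # regression
```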

The bots I see consistently hit 70%+ containment without burning customers all do the same three things on repeat: weekly review of unrecognized intents, monthly audit of failed conversations, and a fallback strategy that recovers escalations instead of triggering them. None of it is exotic. All of it requires showing up every week.

Start by exporting last week's containment number alongside CSAT and resolution rate. If the gap between containment and resolution is wider than 15 percentage points, fix that gap before you push for a higher containment number. Your customers will thank you, and your support team will too. For a wider view of which support metrics actually predict retention, see our breakdown of customer success metrics. To expand bot use cases beyond support, see our chatbot business ideas piece.
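The 15-point check above is one subtraction, but writing it down keeps everyone using the same definition. A tiny sketch, with the function name and return shape as assumptions:

```python
def resolution_gap(containment_pct, resolution_pct, max_gap_points=15.0):
    """Return (gap in percentage points, whether the gap exceeds the limit)."""
    gap = containment_pct - resolution_pct
    return gap, gap > max_gap_points


print(resolution_gap(90.0, 60.0))  # (30.0, True): fix the gap first
print(resolution_gap(72.0, 65.0))  # (7.0, False): safe to push containment
```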

Frequently asked questions

Does a high containment rate mean the chatbot is more effective?

No. A high containment rate means the bot is handling more conversations without a human, but it doesn't tell you whether those customers got their problems solved. A bot can score 85% containment because it gives wrong answers fast or because users give up. Effectiveness is containment plus resolution rate plus CSAT. If all three move together, the bot is genuinely effective. If only containment moves, you're shipping a worse experience and calling it a win.

How does the chatbot containment rate affect the customer experience?

It cuts both ways. A well-trained bot with high containment gives customers instant answers and skips the queue, which they love. A poorly trained bot with high containment traps customers in failed loops and hides the "talk to human" button, which they hate. The deciding factor is how the containment was earned. If it came from better training and clearer fallbacks, customer experience improves. If it came from making escalation harder, it tanks.

How does the containment rate factor into the overall evaluation of a chatbot's performance?

It's one input out of four. The full picture pairs containment with customer satisfaction (CSAT), first contact resolution (FCR), and average handle time on the contained conversations. Containment alone tells you the bot is busy. CSAT tells you it's helpful. FCR tells you the help stuck. AHT tells you how efficient the bot is when it does help. Read all four together or you'll optimize for the wrong thing.

What's the difference between containment rate and self-service rate?

Containment rate is bot-specific: it measures conversations the bot finished without escalation. Self-service rate is broader and covers any channel where customers solve their own problem, including help center articles, video tutorials, in-product onboarding, and forum threads. A help center article that answers a question before the user opens the bot widget counts toward self-service but not toward containment. The two metrics often improve together because the same content investments lift both.

How often should I review my chatbot containment rate?

Track the number daily for trend visibility, review it formally weekly, and audit the underlying conversations monthly. Daily numbers swing too much to act on individually but catch outages or sudden drops fast. Weekly is the right cadence for spotting intent gaps and updating training data. Monthly conversation audits catch the slower-moving issues like fallback wording fatigue or new product features the bot doesn't know about. Anything less frequent than monthly and your containment number drifts from reality.

For further reading, you might be interested in the following:

Chatbot Quality Assurance (QA): The Fundamental Guide